Most teams already have the answer to their copy problems sitting in the queue. Use AI conversation signals to pull the exact words customers use, map them to the right screens, and run small microcopy experiments that remove friction fast. You will see it in the numbers, and you can prove it with quotes.

We are not guessing here. We are translating what people actually said into clear, testable copy. Start with AI conversation signals, like raw tags, canonical tags, drivers, sentiment, churn risk, and Customer Effort. Then turn those patterns into hypotheses and measure change. That loop gets you out of opinion wars and into repeatable wins.

Key Takeaways:

- Stop debating adjectives and start testing phrases customers already use

- Use drivers, sentiment, and effort to target the right screens and states

- Instrument microcopy like any other UI element so exposure is traceable

- Validate tests with quotes linked to the exact tickets behind a metric

- Size impact by segment before rollout, then expand safely

- Measure lift with conversation metrics, not just click rates

Stop Guessing Copy: Turn AI Conversation Signals Into Testable Microcopy

You stop guessing by turning AI conversation signals into a short list of phrases to test, then measuring outcomes. Pull verbatim quotes from clusters that share the same raw tags and drivers. Map each confusion to a microcopy slot and compare before and after using sentiment and Customer Effort. That tight loop reduces risk and speeds decisions.

Find The Language Customers Already Use

Start in your analysis layer, where AI has already labeled 100 percent of conversations. Filter by a driver you care about, like Billing or Account Access, then open a few tickets in Conversation Insights. You will spot the nouns and verbs customers repeat when they fail. That pattern is your copy palette.

We have seen this save days. Instead of brainstorming synonyms in a doc, you reuse language that already matches the customer’s mental model. Pull 10 to 15 phrases across segments and time windows so you do not anchor on one loud case. If you need a gut check, compare the frequency of the top three candidates in recent tickets. A little rigor here kills bias.
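A quick way to run that frequency check is a case-insensitive substring count that credits each ticket at most once per phrase. This is a minimal sketch, assuming you have exported ticket text as plain strings; the sample tickets and candidate phrases are made up for illustration.

```python
from collections import Counter


def phrase_frequency(tickets: list[str], candidates: list[str]) -> Counter:
    """Count how many tickets mention each candidate phrase (case-insensitive).

    Each ticket counts at most once per phrase, so one long rant cannot
    inflate a phrase's score.
    """
    counts: Counter = Counter()
    for text in tickets:
        lowered = text.lower()
        for phrase in candidates:
            if phrase.lower() in lowered:
                counts[phrase] += 1
    return counts


# Hypothetical sample tickets and candidate phrases, for illustration only.
tickets = [
    "My card was declined and I can't retry the payment",
    "Payment failed again, card declined",
    "Why was I charged twice?",
    "Retry payment button does nothing",
]
candidates = ["card declined", "retry", "charged twice"]

print(phrase_frequency(tickets, candidates).most_common(3))
```

Exact substring matching is crude (it misses "card was declined" in the first ticket), which is why reading the tickets first still matters; a looser match is a reasonable next step once the shortlist exists.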

You can sharpen this by reading up on why tiny phrasing shifts change behavior. A good primer is a piece on how tiny UX copy tweaks make a big impact. It pairs well with your ticket evidence because it explains the mechanics you are about to test.

Which Confusion Points Become Copy Candidates?

Aim where friction spikes. Look for negative sentiment and high effort inside the same driver, then narrow further by product area or step. When those overlap, open representative tickets and note where confusion appears, like empty states, error boundaries, or choice overload screens.
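To find where negative sentiment and high effort overlap, you can rank drivers by how many tickets carry both labels. This is a sketch under the assumption that each ticket has already been labeled with `driver`, `sentiment`, and `effort` fields; those field names and the sample rows are hypothetical, not a real export schema.

```python
from collections import defaultdict


def friction_hotspots(tickets: list[dict]) -> list[tuple[str, int]]:
    """Rank drivers by how many tickets combine negative sentiment with high effort."""
    counts: dict[str, int] = defaultdict(int)
    for t in tickets:
        if t["sentiment"] == "negative" and t["effort"] == "high":
            counts[t["driver"]] += 1
    # Highest overlap first: these drivers are where copy candidates likely hide.
    return sorted(counts.items(), key=lambda kv: kv[1], reverse=True)


# Hypothetical labeled tickets.
tickets = [
    {"driver": "Billing", "sentiment": "negative", "effort": "high"},
    {"driver": "Billing", "sentiment": "negative", "effort": "high"},
    {"driver": "Account Access", "sentiment": "negative", "effort": "high"},
    {"driver": "Billing", "sentiment": "positive", "effort": "low"},
    {"driver": "Account Access", "sentiment": "negative", "effort": "low"},
]

print(friction_hotspots(tickets))
```

The same filter can be narrowed further by adding product area or task step to the condition before you open representative tickets.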

Translate each confusion into a copy slot you can target. Label it with screen and state, and capture the current text. That creates a backlog of specific fields, not vague ideas. You can now prioritize by risk, segment, and expected reach instead of opinion. It also makes rollout safer, because you know exactly what is changing and where.

Before you move on, pause and ask: does this slot even need words, or would a control or hint reduce effort faster? If the answer is still copy, proceed.

What Quotes Are Representative Without Bias?

Do a quick audit. From your filtered set, pick quotes that match the median case, not just the most dramatic complaint. Aim for three to five examples that use different words to describe the same problem. Save ticket IDs and segments for traceability.
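One way to make "median case" concrete is to pick the quotes whose sentiment score sits closest to the cluster's median rather than at the extremes. This sketch assumes each ticket in the filtered cluster carries a numeric sentiment score; the `score` field, its scale, and the sample quotes are hypothetical.

```python
import statistics


def median_quotes(tickets: list[dict], k: int = 3) -> list[tuple[str, str, str]]:
    """Return the k quotes whose sentiment score sits closest to the cluster median."""
    median = statistics.median(t["score"] for t in tickets)
    ranked = sorted(tickets, key=lambda t: abs(t["score"] - median))
    # Keep ticket ID and segment with each quote so it stays traceable.
    return [(t["id"], t["segment"], t["quote"]) for t in ranked[:k]]


# Hypothetical cluster; T1 and T5 are the dramatic outliers.
cluster = [
    {"id": "T1", "segment": "SMB", "score": -0.9, "quote": "This is the worst checkout I have ever used"},
    {"id": "T2", "segment": "new", "score": -0.5, "quote": "I can't tell why my card was rejected"},
    {"id": "T3", "segment": "new", "score": -0.4, "quote": "Not sure if the payment went through"},
    {"id": "T4", "segment": "enterprise", "score": -0.3, "quote": "The error doesn't say what to fix"},
    {"id": "T5", "segment": "SMB", "score": -0.95, "quote": "Cancelling today, billing is broken"},
]

for ticket_id, segment, quote in median_quotes(cluster):
    print(ticket_id, segment, quote)
```

A score only filters out extremes; you still need to read the picks to confirm they describe the same problem in different words.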

Stakeholders will ask for proof. They should. These citations keep you out of the anecdote trap and let you defend your test without sounding defensive. If you need a second opinion on tone and style, skim a primer on conversational design to refine phrasing without drifting from the source language.

Map And Segment: Turn AI Conversation Signals Into Driver Context

You make conversation signals useful by mapping phrases to screens and user states, then segmenting exposure using drivers, sentiment, and account tier. This link between insight and touchpoint lets you run safer tests and attribute lift cleanly. Without it, you risk noisy data and overgeneralized conclusions.

Map Phrases To Screens, States, And Moments

Create a simple mapping table. For each chosen phrase, log the UI surface where it appears, the user state, and the task step. Add the canonical tag and driver so your work lines up with leadership language. Include an owner, like PM or UX writer, and any dependency that could block a change.

This sounds basic. It is. It also prevents the classic mistake of changing copy without clear context. When you eventually share results, the map shows exactly where the fix lives and why it matters to a specific driver. That is what turns a test result into a roadmap update instead of a Slack thread.

Start with a few core columns, then refine:

- Copy slot name, screen, and state

- Driver and canonical tag alignment

- Owner and dependency fields
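If the mapping table lives in code or a shared sheet, a typed record keeps every row consistent. This is a minimal sketch; the `CopySlot` class, its field names, and the example row are hypothetical choices that mirror the columns described above.

```python
import csv
import io
from dataclasses import asdict, dataclass, fields


@dataclass
class CopySlot:
    """One row of the phrase-to-screen mapping table."""
    slot: str             # stable copy slot name
    screen: str
    state: str            # UI state, e.g. an error or empty state
    task_step: str
    current_text: str
    customer_phrase: str  # verbatim wording pulled from tickets
    canonical_tag: str
    driver: str
    owner: str            # e.g. PM or UX writer
    dependency: str = ""  # anything that could block the change


def to_csv(rows: list[CopySlot]) -> str:
    """Serialize the mapping table so it can be shared as a spreadsheet."""
    buf = io.StringIO()
    writer = csv.DictWriter(buf, fieldnames=[f.name for f in fields(CopySlot)])
    writer.writeheader()
    for row in rows:
        writer.writerow(asdict(row))
    return buf.getvalue()


# Hypothetical example row.
rows = [
    CopySlot(
        slot="payment_error_banner",
        screen="Checkout",
        state="card_declined",
        task_step="Submit payment",
        current_text="Transaction error",
        customer_phrase="card declined",
        canonical_tag="Payment Failure",
        driver="Billing",
        owner="UX writer",
        dependency="Payments API error codes",
    )
]
print(to_csv(rows))
```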

How Do You Instrument Microcopy Touchpoints?

Instrument copy like any other UI element. Add stable IDs, capture exposure events, and log the variant key. Record context fields you will want later, like driver, plan tier, and product area, so you can pivot results. Keep payloads lean and consistent so analysis is fast and repeatable.

If you cannot prove who saw what, you cannot trust the lift you think you found. That is where teams stumble. They change words, see a small bump, and cannot tie it to the right audience or moment. You do not need a fancy framework here. You need consistent event names, variant labels, and a clear join key to your analysis layer.
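As an illustration of "consistent event names, variant labels, and a clear join key," here is a sketch of an exposure payload plus a join back to tickets. The event name `copy_exposed`, the field names, and the use of `user_id` as the join key are assumptions, as is sticky assignment (each user sees exactly one variant).

```python
import json
from datetime import datetime, timezone


def copy_exposure_event(user_id: str, copy_id: str, variant_key: str, **context: str) -> dict:
    """Build one lean, consistently shaped exposure event for a microcopy impression."""
    return {
        "event": "copy_exposed",      # one event name shared by every copy test
        "copy_id": copy_id,           # stable ID of the copy slot
        "variant_key": variant_key,   # which wording this user saw
        "user_id": user_id,           # join key back to tickets in the analysis layer
        "ts": datetime.now(timezone.utc).isoformat(),
        "context": context,           # e.g. driver, plan_tier, product_area
    }


def tickets_per_variant(exposures: list[dict], tickets: list[dict]) -> dict[str, int]:
    """Join tickets to exposures on user_id and count tickets per variant.

    Assumes sticky assignment: each user is exposed to exactly one variant.
    """
    variant_of = {e["user_id"]: e["variant_key"] for e in exposures}
    counts: dict[str, int] = {}
    for t in tickets:
        variant = variant_of.get(t["user_id"])
        if variant is not None:  # ignore tickets from users never exposed
            counts[variant] = counts.get(variant, 0) + 1
    return counts


exposures = [
    copy_exposure_event("u1", "payment_error_banner", "control", driver="Billing", plan_tier="Pro"),
    copy_exposure_event("u2", "payment_error_banner", "treatment", driver="Billing", plan_tier="Pro"),
]
tickets = [{"user_id": "u1"}, {"user_id": "u1"}, {"user_id": "u3"}]

print(json.dumps(exposures[1], indent=2))
print(tickets_per_variant(exposures, tickets))
```

Keeping context fields inside one `context` object means new pivots (like region) can be added without changing the top-level schema.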

Segment By Driver, Sentiment, And Account Tier

Segment before rollout. Start with a smaller, higher-risk cohort, such as new accounts in onboarding that show recent negative sentiment within a driver. If the copy helps there, expand to adjacent segments. If it does not, you have limited the blast radius and learned something specific.
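A first-cohort rule like that can be written as a single eligibility check. This is a hypothetical sketch: the account fields, the 30-day "new" window, the 14-day lookback, and the default exclusion of enterprise accounts are all assumptions you would tune to your own data.

```python
from datetime import date


def in_first_cohort(
    account: dict,
    driver: str,
    today: date,
    max_age_days: int = 30,
    lookback_days: int = 14,
    excluded_tiers: tuple[str, ...] = ("enterprise",),
) -> bool:
    """Hypothetical rule for the first, narrow rollout cohort."""
    if account["tier"] in excluded_tiers:
        return False  # keep sensitive tiers out until the copy proves itself
    is_new = (today - account["created"]).days <= max_age_days
    in_onboarding = account["stage"] == "onboarding"
    recent_negative = any(
        s["driver"] == driver
        and s["sentiment"] == "negative"
        and (today - s["date"]).days <= lookback_days
        for s in account["signals"]
    )
    return is_new and in_onboarding and recent_negative


today = date(2024, 6, 1)
accounts = {
    "a1": {"tier": "pro", "created": date(2024, 5, 20), "stage": "onboarding",
           "signals": [{"driver": "Billing", "sentiment": "negative", "date": date(2024, 5, 28)}]},
    "a2": {"tier": "enterprise", "created": date(2024, 5, 25), "stage": "onboarding",
           "signals": [{"driver": "Billing", "sentiment": "negative", "date": date(2024, 5, 30)}]},
    "a3": {"tier": "pro", "created": date(2023, 1, 10), "stage": "active", "signals": []},
}
cohort = [name for name, acct in accounts.items() if in_first_cohort(acct, "Billing", today)]
print(cohort)
```

Expanding to adjacent segments then means loosening one parameter at a time, which keeps each widening step easy to attribute.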

This path also keeps enterprise accounts safe from swings you did not intend. It is tempting to roll a “harmless” copy change to all users. Resist that. The whole point of using AI conversation signals is precision. Use it to learn where words carry extra load and where they do not.

The Hidden Cost Of Opinion-First Microcopy On CX Metrics

Opinion-first copy costs time, credibility, and missed revenue because it is untraceable and biased. Without coverage and driver context, you chase the wrong fixes. With conversation metrics and quotes, you tie changes to outcomes and stop wasting cycles. That discipline pays off faster than you think.

Put Numbers To The Friction You Can Feel

Suppose 1,000 users hit a billing error this month. The message is vague, retries fail, and tickets spike. Use Customer Effort and sentiment to size the damage, then connect it to conversion outcomes. A one-point sentiment drop in this driver can correlate with fewer successful retries and more tickets, which drags down revenue and morale.

Numbers end debates. Opinion invites delay. If you do not have baseline metrics, run a quick backfill using last month’s tickets. Then you can say, “This copy affects X percent of users and increases effort by Y,” instead of, “It feels off.” People move faster when the cost is visible.
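The backfill can be reduced to the two numbers that sentence needs. This sketch assumes effort is recorded as a numeric score on a 1 to 5 scale (higher means more effort); the 20,000 active users and the effort lists are hypothetical inputs, and only the 1,000 affected users come from the example above.

```python
from statistics import mean


def friction_statement(active_users: int, affected_users: int,
                       effort_in_slot: list[float], effort_baseline: list[float]) -> str:
    """Turn a baseline backfill into the 'X percent of users, Y more effort' sentence."""
    share = affected_users / active_users * 100
    delta = mean(effort_in_slot) - mean(effort_baseline)
    return (f"This copy affects {share:.1f} percent of users "
            f"and increases effort by {delta:.1f} points.")


# Hypothetical backfill: 1,000 of 20,000 active users hit the billing error.
print(friction_statement(20_000, 1_000, [4, 5, 4, 3], [2, 3, 2, 3, 4, 4]))
```

Comparing against all tickets in the same period, rather than a fixed target, keeps the delta honest when overall effort drifts.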

Why Sampling Tickets Misses The Real Problem

Sampling looks efficient, but it hides the long tail. A 10 percent sample can easily miss the exact wording people paste into your error form or chat, which is often the crux of the fix. Full coverage shows the real distribution of phrases and tones across cohorts. That is how you pick copy that helps most users, not just the last person whose ticket you read.
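The risk is easy to quantify. The probability that a simple random sample contains none of the tickets using a given phrase is the hypergeometric zero-count probability. The sketch below uses a hypothetical pool of 1,000 tickets and a 10 percent sample; the formula itself is standard.

```python
from math import comb


def miss_probability(total_tickets: int, tickets_with_phrase: int, sample_size: int) -> float:
    """Chance that a simple random sample (drawn without replacement) contains
    none of the tickets that use a given phrase (hypergeometric zero count)."""
    return comb(total_tickets - tickets_with_phrase, sample_size) / comb(total_tickets, sample_size)


# A 10 percent sample (100 of 1,000 tickets), for phrases of varying rarity.
for k in (1, 5, 20):
    print(f"phrase in {k:>2} tickets -> missed with probability {miss_probability(1000, k, 100):.2f}")
```

A phrase that shows up in 5 of 1,000 tickets is missed close to 60 percent of the time, and that is before you split by cohort, where each slice is even smaller.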

If you need a refresher on the behavioral side of trust and recovery states, skim this piece on designing AI UIs people actually trust. It underscores why vague errors and unclear next steps raise effort and erode trust.

What Happens When Copy Fixes Are Not Traceable?

Reviews stall. Stakeholders ask where findings came from, and without a path back to quotes, your claim looks soft. That invites rework and a loop of “redo the test with better data.” Worse, you risk shipping a variant that seems to win on clicks but raises effort in support channels.

Traceability shortens these loops. When you can pivot from a chart to the exact tickets behind it, people stop arguing about the premise and start deciding on the plan. It prevents the failure of well-meant fixes that are not grounded in real language.

What It Feels Like When Microcopy Misses The Moment

When copy drifts from the language customers use, teams feel it as friction, rework, and risk. PMs hesitate to ship. UX writers fight taste debates. Analysts know the pattern but cannot land the point. Grounding decisions in drivers, quotes, and effort metrics changes that dynamic fast.

For PMs: Priorities Without Proof Invite Rework

You are juggling a crowded roadmap and a thread about “just tweak the error text.” Easy to say. Hard to defend. Without driver-level metrics and quotes, every copy decision lands on your shoulders and gets second-guessed. That risk slows you down, and you know it.

Tie the request to evidence. “Within the Billing driver, new users show negative sentiment and high effort on payment failures. Here are three quotes. Here is the current text. Here is the proposed change.” Now you are not arguing taste. You are reducing risk with a small, testable move.

For UX Writers: Copy Debates Without Evidence Stall Work

You write the message three ways. Someone still says, “it feels off.” That is frustrating rework. Bring quotes that show the exact words customers use when they fail, plus how effort or sentiment shifted around the same moment. The tone debate deflates when the room sees the language in context.

This is not about winning a style fight. It is about reducing the cost of iteration. When you anchor on drivers and quotes, you cannot miss the mark by much. And when you do, you learn why in hours, not weeks.

For CX Analysts: You See The Pattern But Cannot Prove It

Your chart shows a spike after a policy change. You even know the workflow that causes it. But without a path back to the words customers used, the story stalls. Connect the grouped view to Conversation Insights and pull three median quotes. Then show sentiment and effort before and after the copy change. You will stop losing time to “are we sure” and start closing the loop.

The New Way To Turn AI Conversation Signals Into A Repeatable Experiment Pipeline

The new way is simple and disciplined. Extract phrases from full coverage data, map them to specific screens, write a clear hypothesis, then measure outcomes with conversation metrics and quotes. Do this in tight loops, starting with higher risk cohorts, and expand once it works. That is how you scale confidence.

Discovery: Extract, Cluster, And Select Verbatim Candidates

Start with complete data. Filter conversations by negative sentiment or high effort, grouped by driver and canonical tag. Open tickets in Conversation Insights and copy phrases customers repeat when they fail. Build a shortlist tied to specific UI touchpoints.

We are not ideating here. We are harvesting. That difference matters. You are choosing words that already align with how people think and speak. It lowers the chance of missing the point and speeds buy-in during reviews because the phrases come with receipts.

To keep your list tight, pick candidates that:

- Appear across segments and time windows

- Map cleanly to a copy slot you control

- Tie back to a measurable outcome

Experiment Design: Hypotheses, Segments, And Metrics

Write a tight hypothesis that links phrase to behavior. “Replacing ‘authentication failed’ with ‘We could not verify your code’ will increase retry success among new users during onboarding.” Define exposure rules and segments. Set success metrics, like Customer Effort change, sentiment delta, first contact resolution, or conversion.

Control rollout with your feature flag system and plan sample sizes before launch. Your goal is not a grand reveal. It is a quick, credible read you can explain in one slide. That is what moves work forward without drama.

Here is a simple structure you can reuse:

- Hypothesis tied to a driver and behavior

- Exposure and segment rules

- Success metrics and sample size plan

- Risk guardrails and expansion criteria
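For the sample size plan, one common approach when the success metric is binary (for example, retry success) is the normal-approximation formula for a two-sided, two-proportion test. This is a sketch, not the only valid method; the 60 percent baseline and 65 percent target below are hypothetical.

```python
from math import ceil, sqrt
from statistics import NormalDist


def sample_size_per_arm(p_control: float, p_treatment: float,
                        alpha: float = 0.05, power: float = 0.8) -> int:
    """Users needed in each arm to detect a change in a binary success rate
    with a two-sided, two-proportion z-test (normal approximation)."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_beta = NormalDist().inv_cdf(power)
    p_bar = (p_control + p_treatment) / 2
    numerator = (
        z_alpha * sqrt(2 * p_bar * (1 - p_bar))
        + z_beta * sqrt(p_control * (1 - p_control) + p_treatment * (1 - p_treatment))
    ) ** 2
    return ceil(numerator / (p_control - p_treatment) ** 2)


# Hypothetical: retry success is 60% today and the new copy should lift it to 65%.
print(sample_size_per_arm(0.60, 0.65))
```

If the narrow first cohort cannot supply that many users per arm in a reasonable window, either widen the segment or plan for a larger minimum detectable effect.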

Measurement And Iteration: Tie Results To Conversation Evidence

Run the test, attribute exposure, and track outcomes. Afterward, pivot conversation metrics by driver and segment to see if the effect holds in the channel where confusion originated. Save representative quotes that capture the experience before and after. If lift is real, publish the change, update your canonical tags if needed, and log two sentences on what you learned.
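Pivoting results by driver and segment can look like the sketch below, which computes mean sentiment in the treatment arm minus the control arm for each (driver, segment) pair. It assumes tickets have already been joined to exposure logs so each carries a `variant`; the field names and sample rows are hypothetical, and groups missing either arm are skipped rather than guessed.

```python
from collections import defaultdict
from statistics import mean


def sentiment_lift(tickets: list[dict]) -> dict[tuple[str, str], float]:
    """Mean sentiment of treatment minus control, per (driver, segment)."""
    groups: dict = defaultdict(lambda: {"control": [], "treatment": []})
    for t in tickets:
        groups[(t["driver"], t["segment"])][t["variant"]].append(t["score"])
    lift = {}
    for key, arms in groups.items():
        if arms["control"] and arms["treatment"]:  # skip groups missing an arm
            lift[key] = mean(arms["treatment"]) - mean(arms["control"])
    return lift


tickets = [
    {"driver": "Billing", "segment": "new", "variant": "control", "score": -0.6},
    {"driver": "Billing", "segment": "new", "variant": "control", "score": -0.4},
    {"driver": "Billing", "segment": "new", "variant": "treatment", "score": -0.2},
    {"driver": "Billing", "segment": "new", "variant": "treatment", "score": 0.0},
    {"driver": "Billing", "segment": "enterprise", "variant": "control", "score": -0.3},
]
for key, value in sentiment_lift(tickets).items():
    print(key, round(value, 2))
```

A raw difference in means is only a first read; check it against the sample size you planned before calling the lift real.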

We have found that short notes like these keep the same debate from resurfacing next quarter. They also help new teammates understand why the words look the way they do.

Ready to see this approach in your own data? Want to cut the guesswork on which phrases to test? Learn More

How Revelir AI Turns AI Conversation Signals Into Experiment-Ready Microcopy

Revelir AI makes this process practical by turning every support conversation into structured, evidence-backed signals you can trust. It analyzes 100 percent of tickets, rolls raw tags into canonical categories and drivers, and links every aggregate back to the exact quotes behind it. That traceability is what speeds demos, meetings, and rollout.

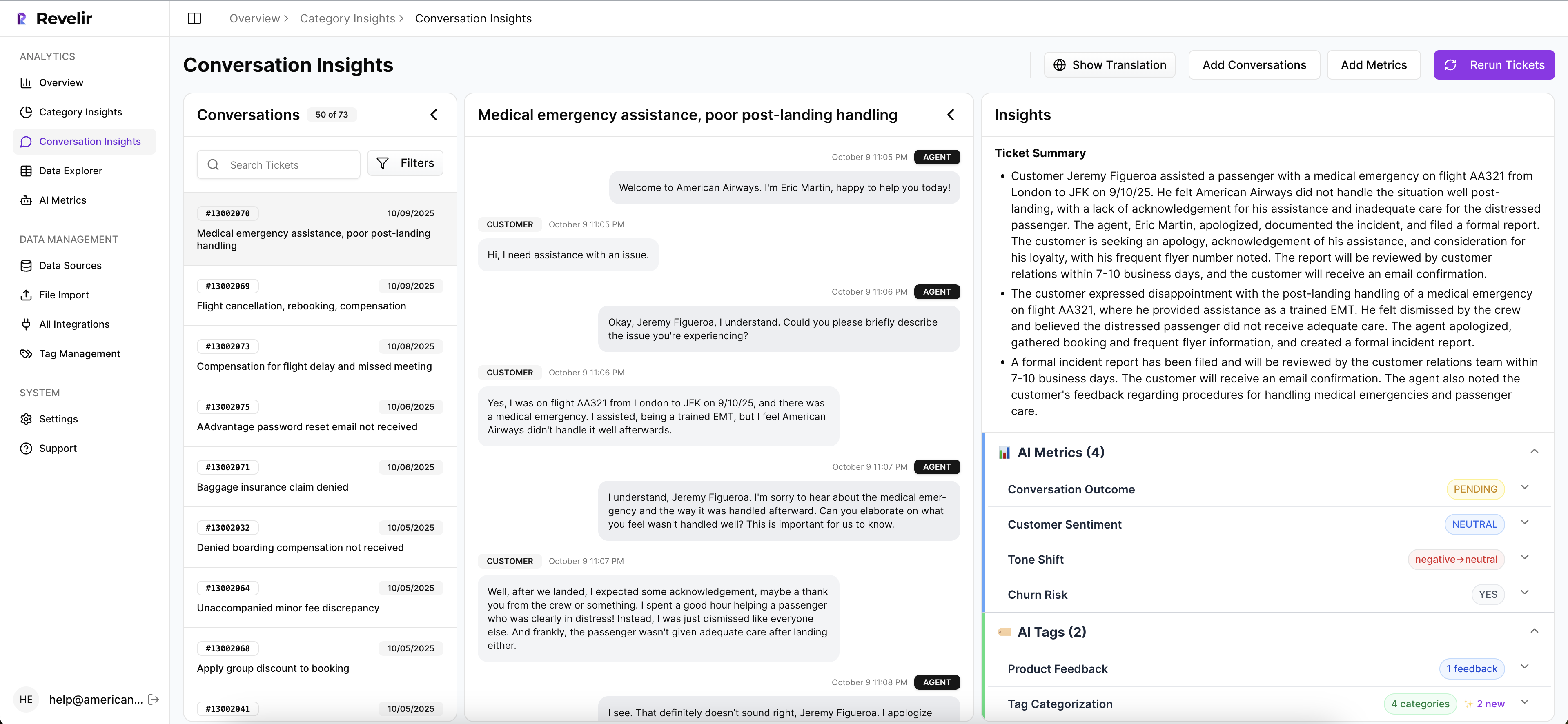

Evidence Traceability From Metric To Quote

Every chart, count, and grouped view links to the tickets that created it. You can click a segment like “negative sentiment in Billing,” open Conversation Insights, read the transcript, and copy representative quotes. That path lets you defend a test with the words customers actually used, not a paraphrase.

In practice, this kills the “show me an example” stall that derails planning. When a PM or leader asks for proof, you have it. The examples match the insight, and the metrics hold up when you sample tickets. That is the line between a hunch and a plan.

- Bold claim, then proof with quotes

- Aggregate, then drill to transcripts

- Insight, then example that matches it

Driver-Level Analysis And Segmented Rollout Planning

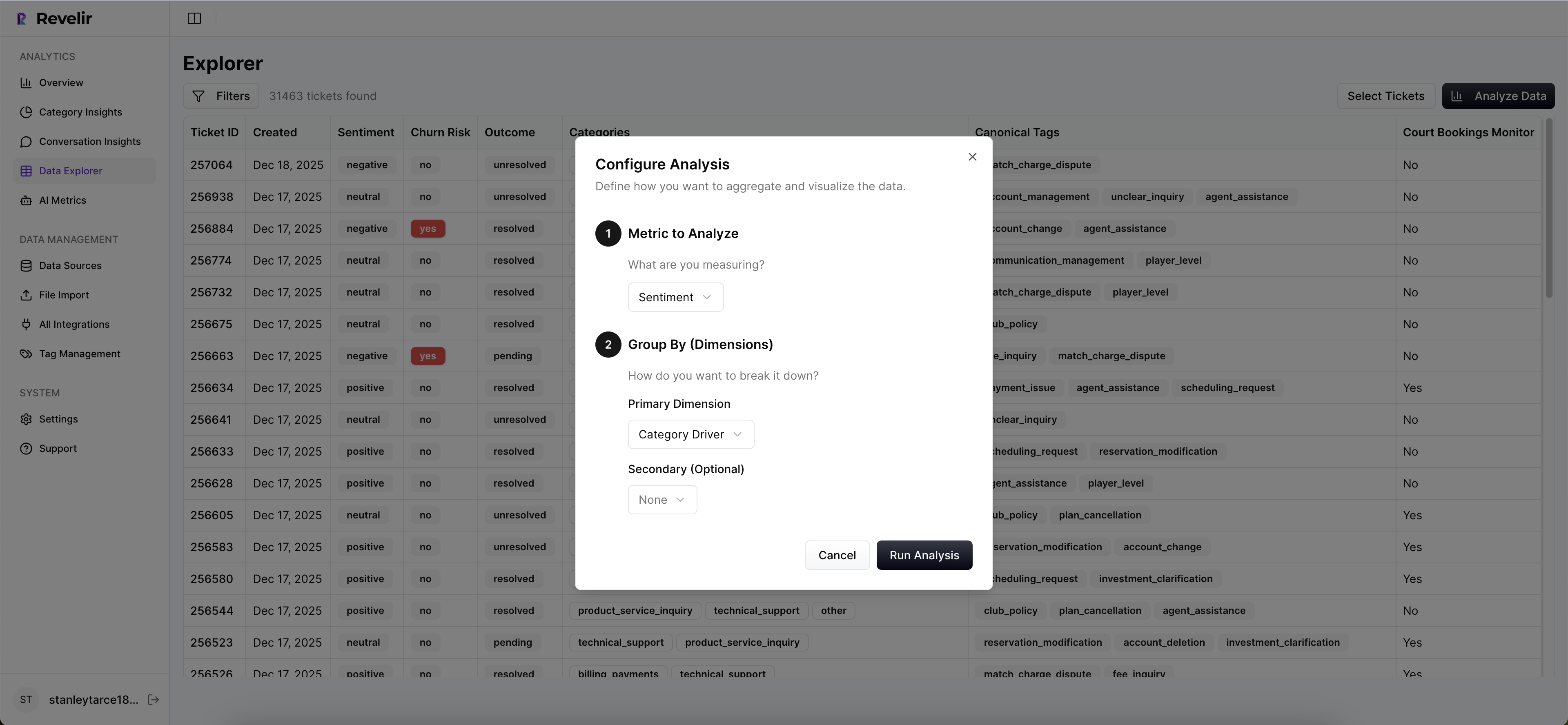

Analyze Data shows where high effort and negative sentiment concentrate, grouped by driver or canonical tag. Add filters for plan tier or region. You will see which cohorts carry risk and how big the audience is, so you can design narrow rollouts before expanding. That aligns with how leadership already views the business.

This matters when stakes are high. You can say, “Among new Enterprise accounts, Account Access shows elevated effort on login loops. Here are three quotes and the current message.” Now your rollout plan is focused, and the exposure math is clear.

From Insights To Execution With API And Saved Views

You work in Revelir to find the phrases and plan the test, then operate variants in your product stack. Create saved views that define the driver, tags, and segments you care about, and reuse them as baselines. When you need to join exposure logs or share results downstream, export metrics via API so reporting stays consistent.

This trims manual stitching. You move from insight to setup without losing context, and you can repeat the loop in days, not months.

See what this looks like with your own tickets and drivers. Moving 3x faster from insight to approved test is common when the proof line is built in. See how Revelir AI works.

To recap the specific capabilities you will lean on:

- Evidence-backed drill-downs, from grouped tables to full transcripts

- Full coverage processing across 100 percent of tickets

- Hybrid tagging with raw tags, canonical tags, and drivers for clarity

- Analyze Data for grouped insight and segment sizing

- API export and saved views for repeatable reporting

Conclusion

Copy debates are cheap. Friction is not. When you base microcopy on AI conversation signals, map phrases to moments, and prove changes with metrics and quotes, you reduce risk and lift the right outcomes. Start narrow, learn fast, and expand with confidence. Your support data already holds the words. Use them well.

Ready to run your first evidence-backed copy test and tie it to real quotes? Get started with Revelir AI.

Frequently Asked Questions

How do I identify the top drivers of negative sentiment?

To identify the top drivers of negative sentiment using Revelir AI, start by accessing the Data Explorer. 1) Apply filters for sentiment, selecting 'Negative.' 2) Click on 'Analyze Data' and choose 'Sentiment' as the metric to analyze. 3) Group your results by 'Category Driver' to see which issues are contributing most to negative sentiment. This approach allows you to pinpoint specific areas for improvement, ensuring your team can address the root causes effectively.

What if I want to validate AI outputs with real conversations?

You can validate AI outputs by using the Conversation Insights feature in Revelir AI. 1) After running your analysis, click on any metric number (like the count of negative sentiment tickets). 2) This will take you to a filtered view of Conversation Insights, where you can see the exact conversations behind the metrics. 3) Review the transcripts and AI summaries to ensure the insights align with the actual customer feedback. This process helps confirm that the AI-generated metrics make sense in context.

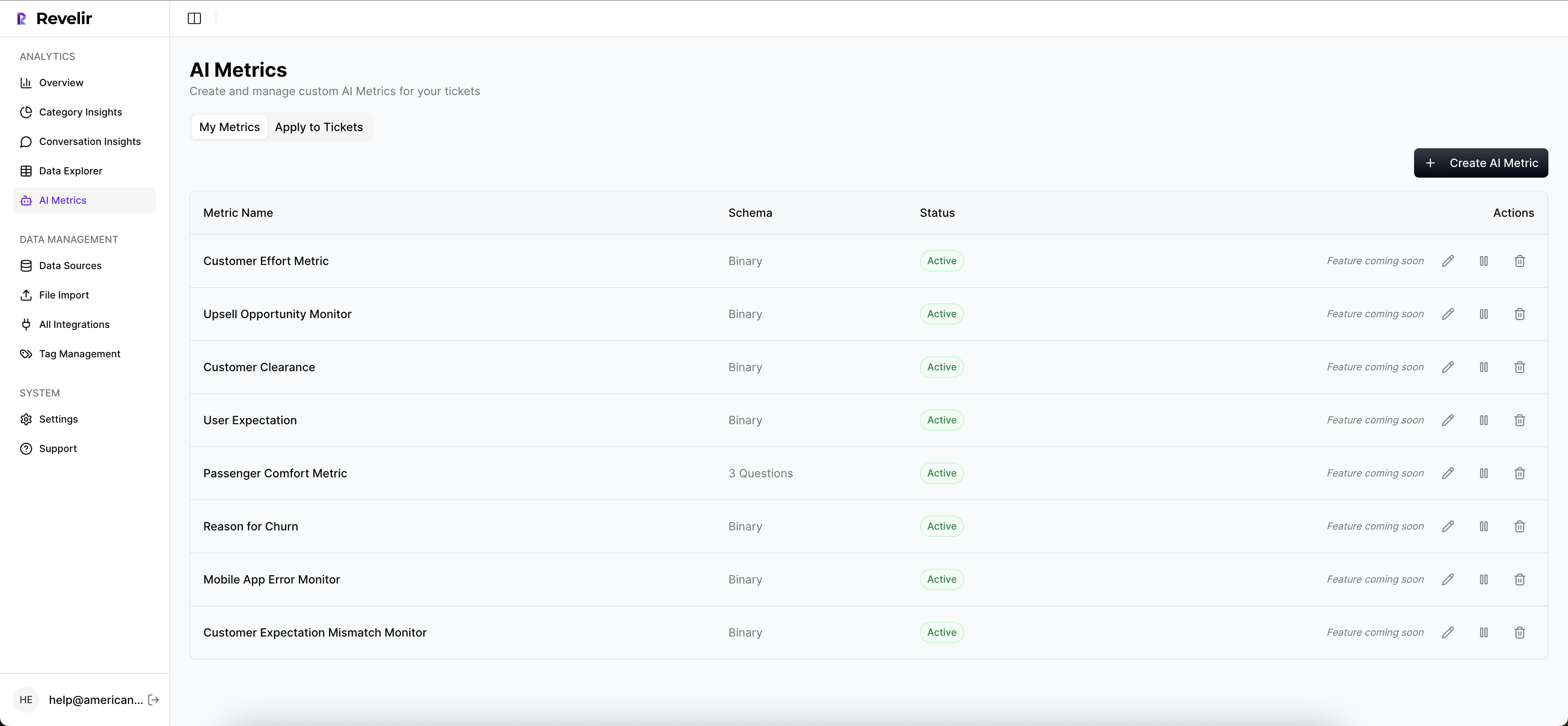

Can I create custom AI metrics for specific needs?

Yes, you can create custom AI metrics in Revelir AI to match your business language. 1) Define the specific questions you want the AI to answer, such as 'Reason for Churn' or 'Upsell Opportunity.' 2) Specify the possible values for each metric (like Yes/No or High/Medium/Low). 3) Once set up, Revelir will apply these custom metrics to your conversations, providing tailored insights that are directly relevant to your business goals.

When should I use the Analyze Data feature?

You should use the Analyze Data feature in Revelir AI whenever you need to understand broader patterns in your support tickets. 1) It's particularly useful for answering questions like 'What’s driving churn risk?' or 'Which issues are affecting high-value customers?' 2) By grouping data by dimensions like 'Canonical Tag' or 'Driver,' you can see aggregated results that highlight trends. 3) This feature helps you make informed decisions based on comprehensive data rather than relying on anecdotal evidence.

Why does full coverage processing matter?

Full coverage processing is crucial because it ensures that Revelir AI analyzes 100% of your support conversations, eliminating the biases that come from sampling. 1) This means you won't miss critical signals, such as early indicators of churn risk or emerging customer frustrations. 2) By processing every ticket, you gain a complete view of customer sentiment and issues, allowing for more accurate insights. 3) Ultimately, this leads to better decision-making and prioritization of fixes based on real data.