Traditional surveys feel safe. They fit on a slide, they trend over time, and they give everyone a number to point at. But the limitations of traditional customer satisfaction surveys show up the moment you ask why a number moved. You get silence. Or worse, guesses dressed up as certainty.

It’s usually the same cycle. Score dips, someone pulls three verbatims, and the room builds a story. Sounds confident. It isn’t. You’re still missing the why, the who, and the cost. Support conversations already hold that detail. The problem is that it’s trapped in free text nobody reviews at scale or with any traceability.

Key Takeaways:

- Scores alone don’t explain why issues happen, who they affect, or what to fix first

- Sampling invites bias and misses quiet but costly patterns hidden in small cohorts

- Nonresponse and acquiescence bias distort survey signals you rely on

- Full conversation coverage replaces guesswork with traceable, auditable evidence

- Drivers plus quotes win debates in product and exec reviews

- The new standard is evidence-backed metrics from 100% of conversations

Scores Versus Conversations

Most companies over-trust survey scores because they’re easy to graph, but the real CX truth lives in support conversations. Scores tell you something moved. They don’t tell you why it moved or what to fix first. When you measure the conversations, you see the drivers, the segments affected, and the real cost.

Surveys Miss The Why

Surveys compress complex narratives into a single score, which hides the root cause behind customer frustration. You see a dip, but not whether onboarding confusion or a billing bug is driving it. That gap forces teams to guess, and those guesses drift into wrong fixes that waste time and money.

Teams also overfit to what the survey asked, not what the customer actually felt or needed. If your questions miss a key friction point, that friction never makes it into your dashboard. Same thing with timing. Ask too late, and recall blurs the details. Ask too soon, and you capture venting, not insight.

The conversation transcript keeps the context intact. You can hear the confusion in a step-by-step exchange. You can spot the moment trust breaks. If you can’t see that, you’re managing by shadows. That’s the uncomfortable truth here.

Sampling Creates False Certainty

Sampling feels scientific, but in practice it often amplifies bias and misses rare but costly patterns. A 10 percent slice will rarely catch churn-risk clusters that show up in small cohorts or edge-case workflows. Those clusters matter. They’re where you lose high-value customers quietly.

Let’s pretend you read 50 tickets out of 1,000. That’s a couple of hours, maybe. You’ll grab the loudest stories and build a tidy narrative. It feels rigorous because you did the work. It’s not. You still don’t know what you missed, which is the risk you can’t defend later.

When you present that story, leaders will poke holes. What segment? Which plan? How many affected? Without coverage and traceability back to exact quotes, you’re guessing. And guessing gets exposed the minute a different anecdote walks into the room.
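To put a number on what a 50-ticket read can miss, here is a minimal sketch using the hypergeometric distribution. The 1,000-ticket volume and the 50- and 100-ticket samples come from the scenarios in this article. The 10-ticket churn-risk cluster is an assumption chosen purely for illustration, and the calculation assumes a truly random sample, which a hand-picked read rarely is.

```python
from math import comb

def prob_sample_misses_cluster(total: int, cluster: int, sample: int) -> float:
    """Probability that a simple random sample of `sample` tickets
    contains none of the `cluster` tickets (hypergeometric, zero hits)."""
    return comb(total - cluster, sample) / comb(total, sample)

# Hypothetical scenario: 1,000 tickets, a 10-ticket churn-risk cluster.
miss_50 = prob_sample_misses_cluster(1000, 10, 50)
miss_100 = prob_sample_misses_cluster(1000, 10, 100)

print(f"Read 50 of 1,000:  {miss_50:.0%} chance of seeing none of the cluster")
print(f"Read 100 of 1,000: {miss_100:.0%} chance of seeing none of the cluster")
```

Under these assumptions, the 50-ticket read misses the cluster entirely about 60 percent of the time, and doubling the sample still misses it roughly a third of the time. Seeing a single ticket from the cluster is also not the same as recognizing a pattern, so the practical miss rate is likely higher.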

Scores Without Evidence Stall Decisions

A score without a driver is a stalled project. You can’t size the problem, and you can’t prioritize the fix. That’s why backlog fights drag on. Product hears “customers are unhappy,” but not “new users on plan X fail step two 38 percent of the time.”

In reviews, confidence is what moves resources. Evidence builds confidence. If you can click from a chart to the ticket and show the line where the customer got stuck, the debate ends. You stop arguing about whether a problem is real and start deciding how to fix it.

It’s usually that simple. Evidence wins. Numbers backed by quotes get funded. Numbers with no trail get punted to next quarter.

Reframe

The real problem isn’t low CSAT, it’s that your measurement system can’t explain the why behind the number at full coverage. You don’t have a data layer for conversations that is both complete and auditable. Once you fix that, scores become context, not the source of truth.

The Real Unit Is The Conversation

The actionable unit in CX isn’t the survey response, it’s the conversation. That’s where intent, confusion, and workarounds show up in detail. A single exchange can expose five broken steps and two product gaps. A score can’t carry that load.

Think about how your team actually learns. It’s when you read the transcript and feel the friction. You mark the steps, you tag the theme, you collect the quote. Then you walk into the meeting with proof. That is how decisions get made. Not by hand-waving a trend line.

So the reframe is simple. Treat conversations as data, not anecdotes. Turn them into structured, traceable metrics you can explore, then pull the receipts in one click. Do that, and the rest falls into place.

Coverage Beats Precision Guessing

You don’t need perfect models to make better calls. You need coverage that removes the blind spots and bias that come with a tiny slice. Most teams obsess over model precision, then run the model on 5 percent of the data. That’s upside down.

Full coverage, even with simple classifiers, will surface patterns you’d never see in a sample. You’ll find small but critical segments where effort is high and sentiment is sour. You’ll catch word patterns that point to a bug in one flow. Without coverage, you miss it. And you pay for that miss in churn and escalations.

If you must choose, choose coverage first. Then refine. That order saves real money.

Traceability Builds Trust

Leaders don’t just want numbers. They want to know those numbers stand up when challenged. That means you need to link every metric to the exact tickets and quotes behind it. No traceability, no trust. It’s that stark.

When someone asks, “Show me,” you should be one click from the sentence that proves the point. If you can’t get there, the room stalls. Worse, your future recommendations lose weight. Build a measurement layer where traceability is the default, and you’ll feel the shift in the first review.

You’ll also feel your own confidence change. It’s easier to push for a fix when you know the evidence holds.

The Cost Of Surveys And Samples

Relying on surveys and samples has a measurable cost in time, money, and risk. You burn hours reading tickets, you fund the wrong fixes, and you miss early churn signals hiding in small cohorts. These aren’t abstract issues. They show up on your P&L.

The Time Cost You Never Book

Manual review scales terribly. If your team handles 1,000 tickets a month and you sample 10 percent at three minutes each, that’s five hours for a partial view. Add coordination, and you’re at a full workday to still be wrong. Multiply by product areas, and it balloons.

Industry research favors systematized analytics over ad hoc reading sessions as the way to make CX insights repeatable. The McKinsey customer experience analytics overview describes the shift toward analytics-driven CX as a core operating model and stresses consistent, scalable measurement over gut checks.

It sounds small, but a few hours a week across several product areas adds up to weeks of analyst time over a year. That’s the cost hiding in plain sight.
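The arithmetic behind the time cost is easy to check and to rerun with your own volumes. This is a small sketch using the figures from this section (1,000 tickets a month, a 10 percent sample, three minutes per ticket). The function name and the full-coverage comparison are illustrative additions, not a benchmark.

```python
def review_hours(tickets_per_month: int, sample_rate: float,
                 minutes_per_ticket: float) -> float:
    """Hours spent reading a sample of one month's tickets by hand."""
    sampled = round(tickets_per_month * sample_rate)
    return sampled * minutes_per_ticket / 60

# Figures from the section above.
monthly = review_hours(1000, 0.10, 3)
print(f"Per month: {monthly:.1f} hours for {0.10:.0%} coverage")
print(f"Per year:  {monthly * 12:.0f} hours, before coordination time")

# Reading every ticket by hand at the same pace, for comparison.
full = review_hours(1000, 1.0, 3)
print(f"100% by hand: {full:.0f} hours per month")
```

The last line is the point: manual review cannot reach full coverage at any realistic staffing level, which is why the choice is between sampling by hand and automating the read.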

The Money You Waste On The Wrong Fix

When the why is missing, teams fund guesses. A UI polish sprint instead of fixing a broken billing flow. A training deck instead of removing a confusing step. Each wrong fix carries engineering time, opportunity cost, and credibility loss.

The Gartner customer service and support trends note that leaders are shifting budgets toward solutions that tie insights to clear action and outcomes. That shift only works when the insight layer is grounded in evidence, not vibes.

Fund the wrong fix twice, and you’ll feel it in retention. Fund the right one once, and the curve bends back.

The Risk Hiding In Nonresponse Bias

Surveys rarely capture the full population. Nonresponse bias tilts the picture toward those who choose to answer, which can mask real problems. If unhappy customers don’t respond, your CSAT looks fine while churn risk climbs.

This is a known problem in research methods. Standard-setting bodies warn that response patterns can distort results unless you adjust or expand measurement. The American Association for Public Opinion Research details these risks in their Standard Definitions for response rates. Most CX teams don’t run those adjustments. They just take the number.

So you miss early warning signals in quiet segments. By the time the score moves, the issue is already expensive.
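A worked example makes the distortion concrete. The sketch below assumes a population where 70 percent of customers are truly satisfied, satisfied customers answer the survey 30 percent of the time, and unhappy customers answer 10 percent of the time. All four numbers are hypothetical and chosen only to show the mechanism.

```python
def observed_csat(population: int, true_satisfied_share: float,
                  satisfied_response_rate: float,
                  unhappy_response_rate: float) -> float:
    """CSAT as computed only from the customers who answer the survey."""
    satisfied = population * true_satisfied_share
    unhappy = population - satisfied
    satisfied_replies = satisfied * satisfied_response_rate
    unhappy_replies = unhappy * unhappy_response_rate
    return satisfied_replies / (satisfied_replies + unhappy_replies)

# Hypothetical inputs: 70% truly satisfied, happy customers reply 3x as often.
survey_score = observed_csat(1000, 0.70, 0.30, 0.10)
print(f"True satisfaction: 70%, survey CSAT: {survey_score:.1%}")
```

With these inputs the survey reports 87.5 percent while the true figure is 70 percent. Nothing about the customers changed; only who chose to answer.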

Emotion

You don’t feel the pain in a dashboard, you feel it in the week you just survived. The 7 a.m. ping about a spike. The late-night Slack thread asking for “three representative tickets” before the board call. The slow dread of walking into a meeting with a story you can’t back up.

The Weekly Fire Drill You Know Too Well

Picture Monday. Tickets spiked over the weekend. You skim 40 threads hunting for a theme. It’s messy. It’s noisy. Nobody’s checking whether this pattern holds beyond one cohort. You still need to write a neat paragraph with two quotes and a fix idea before lunch.

By Wednesday, product asks for evidence by segment. You rebuild the slice by hand. By Friday, a new anecdote shows up and the story shifts again. You’re not failing. The process is. It rewards speed over certainty and leaves you exposed when someone asks for the receipts.

Honestly, you’re not alone. Most teams run this way. It’s just not sustainable.

The Leadership Meeting Where Confidence Cracks

You present the score trend. You share the three quotes. Then comes the question you knew was coming. “How many customers is this, exactly?” The room goes quiet. You guess. Confidence cracks.

That’s the moment that hurts. Not because leaders are harsh, but because you didn’t have a way to trace the number to the list of tickets and the lines that prove it. You did the work. The system still failed you.

Fix the system, and these meetings feel different. You answer fast. You point to the exact place where the issue lives. Debate ends.

New Way

The alternative is simple to say and powerful in practice: measure 100 percent of support conversations, turn them into structured, evidence-backed metrics, and keep a live trail from every chart to the exact tickets and quotes. Scores become context. Drivers and evidence become the story.

Measure Every Conversation, Not A Sample

Start by committing to full coverage. Process every ticket so you can slice by cohort, plan, product area, and time without guessing. Coverage removes the risk of missing quiet but costly issues that never show up in thin samples.

Then standardize your view of the world. Build a hybrid taxonomy where granular signals roll up into human-friendly categories and drivers. That keeps discovery flexible while making reporting clear. It also helps you compare like with like in reviews.

Finally, insist on traceability. Every number should click into the set of tickets and the quotes that prove it. No exceptions. That’s how you build trust.
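The hybrid taxonomy described above can be sketched as two lookup tables: granular raw tags map to canonical tags, and canonical tags map to drivers. Every tag and driver name below is invented for illustration, and a real taxonomy would be far larger and maintained over time.

```python
# Hypothetical mappings; all names are invented for illustration.
RAW_TO_CANONICAL = {
    "charged twice": "Duplicate Charge",
    "double billing": "Duplicate Charge",
    "invoice missing": "Invoice Access",
    "cant find setup step": "Setup Confusion",
    "stuck on step two": "Setup Confusion",
}
CANONICAL_TO_DRIVER = {
    "Duplicate Charge": "Billing",
    "Invoice Access": "Billing",
    "Setup Confusion": "Onboarding",
}

def roll_up(raw_tag: str) -> tuple[str, str]:
    """Map a granular raw tag to (canonical tag, driver).
    Unknown tags are labeled 'Unmapped' so new themes stay visible."""
    canonical = RAW_TO_CANONICAL.get(raw_tag.lower().strip(), "Unmapped")
    driver = CANONICAL_TO_DRIVER.get(canonical, "Unmapped")
    return canonical, driver

print(roll_up("Double billing"))
print(roll_up("export to pdf fails"))
```

Keeping unmapped tags visible rather than dropping them is the design choice that keeps discovery flexible: a growing "Unmapped" bucket is a signal that the canonical list needs a new entry.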

Move From Scores To Drivers

Scores are a starting point. Drivers explain the change. Tagging raw themes and rolling them into canonical categories gives you the bridge from chaos to clarity. You stop saying “sentiment is down” and start saying “new users on plan B fail step two in onboarding.”

This shift also changes how you prioritize. You can see which drivers correlate with negative sentiment, high effort, and churn risk across segments. You can decide what to fix first with confidence. That confidence spreads. Engineering commits. CX prepares. Marketing sets better expectations.

It looks obvious in hindsight. It always does.
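One simple way to decide what to fix first is to rank segment and driver pairs by their share of negative tickets. The sketch below does that with a minimum-volume cutoff. The rows, segment labels, and the cutoff of two tickets are all hypothetical, and a share of negative tickets is only one possible ranking signal.

```python
from collections import Counter

# Hypothetical rows: (segment, driver, is_negative). Real rows would come
# from whatever tagging and sentiment step you already run.
ROWS = [
    ("new / plan B", "Onboarding", True),
    ("new / plan B", "Onboarding", True),
    ("new / plan B", "Onboarding", False),
    ("new / plan B", "Billing", False),
    ("tenured / plan A", "Billing", True),
    ("tenured / plan A", "Billing", False),
    ("tenured / plan A", "Onboarding", False),
]

def rank_by_negative_rate(rows, min_tickets=2):
    """Rank (segment, driver) pairs by share of negative tickets,
    skipping pairs with fewer than `min_tickets` tickets."""
    totals, negatives = Counter(), Counter()
    for segment, driver, is_negative in rows:
        totals[(segment, driver)] += 1
        negatives[(segment, driver)] += int(is_negative)
    ranked = [
        (key, negatives[key] / totals[key], totals[key])
        for key in totals
        if totals[key] >= min_tickets
    ]
    return sorted(ranked, key=lambda item: item[1], reverse=True)

ranking = rank_by_negative_rate(ROWS)
for (segment, driver), rate, n in ranking:
    print(f"{segment} | {driver}: {rate:.0%} negative across {n} tickets")
```

The output is a sentence you can take into a review: a named segment, a named driver, a rate, and the number of tickets behind it.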

Make Insights Auditable By Default

Build your workflow so auditability is baked in, not a scramble. When every chart links to source conversations, you stop exporting, stitching, and second-guessing. You also raise the bar for any insight you bring into the room.

To get there:

- Capture raw signals from every conversation

- Normalize them into canonical tags and drivers

- Compute core metrics like sentiment and effort

- Keep a one-click path back to the ticket and quote

- Set ownership and SLAs for closing the top drivers each cycle
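The first four steps above can be sketched as a small aggregation that never throws away its evidence. This is an illustrative outline under stated assumptions, not a description of any product's internals: the `Ticket` fields, the sentiment labels, and the sample tickets are all hypothetical, and the driver and sentiment values are assumed to come from an upstream tagging step.

```python
from collections import defaultdict
from dataclasses import dataclass

@dataclass
class Ticket:
    ticket_id: str
    driver: str      # assumed output of an upstream tagging step
    sentiment: str   # "negative", "neutral", or "positive"
    quote: str       # the line that supports the classification

# Hypothetical, hand-written tickets for illustration only.
TICKETS = [
    Ticket("T-101", "Billing", "negative", "I was charged twice this month."),
    Ticket("T-102", "Onboarding", "negative", "Step two never loads for me."),
    Ticket("T-103", "Billing", "neutral", "Where do I download my invoice?"),
    Ticket("T-104", "Onboarding", "negative", "I can't get past step two."),
]

def negative_by_driver(tickets):
    """Count negative tickets per driver and keep the ticket IDs and
    quotes behind each count, so every number can be traced back."""
    summary = defaultdict(lambda: {"total": 0, "negative": 0, "evidence": []})
    for t in tickets:
        row = summary[t.driver]
        row["total"] += 1
        if t.sentiment == "negative":
            row["negative"] += 1
            row["evidence"].append((t.ticket_id, t.quote))
    return dict(summary)

report = negative_by_driver(TICKETS)
for driver, row in report.items():
    print(f"{driver}: {row['negative']}/{row['total']} negative")
    for ticket_id, quote in row["evidence"]:
        print(f"  {ticket_id}: {quote}")
```

The point of the structure is that the count and its evidence are stored together. When someone asks which tickets make up the number, the answer is already attached to it.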

Ready to see this approach in action at scale? See how Revelir AI works.

Solution

Revelir AI turns messy support conversations into clear, measurable signals with full coverage and traceability. It processes 100 percent of your tickets, applies AI metrics and hybrid tagging, and links every number to the exact tickets and quotes. That closes the gap between score watching and evidence-backed action.

Full-Coverage Processing With Evidence

Revelir AI processes all ingested tickets without manual upfront tagging, so you don’t miss patterns hiding in quiet segments. The AI Metrics Engine computes sentiment, churn risk, customer effort (where supported), and conversation outcome as structured fields you can filter and group. Evidence-backed traceability keeps a live link from aggregates to source conversations for audit-ready reviews.

This directly addresses the time and risk costs from earlier. You spend less time sampling and second-guessing, and you reduce the risk of funding the wrong fix because you can validate the why before you commit people and budget.

From Drivers To Decisions In Data Explorer

The Data Explorer gives you a pivot-table-like workspace to filter, group, sort, and inspect every ticket with columns for tags, drivers, sentiment, effort, churn risk, and custom metrics. Analyze Data runs grouped summaries by Driver, Canonical Tag, or Raw Tag and links results back to underlying tickets for fast validation.

Hybrid Tagging combines AI-generated Raw Tags with Canonical Tags you control, so discovery stays flexible while reporting stays clear. Conversation Insights and AI-Generated Summaries let you jump from a chart to the exact quote that proves the point, then back to the analysis view without losing your place.

Faster root-cause analysis. That’s what Revelir delivers.

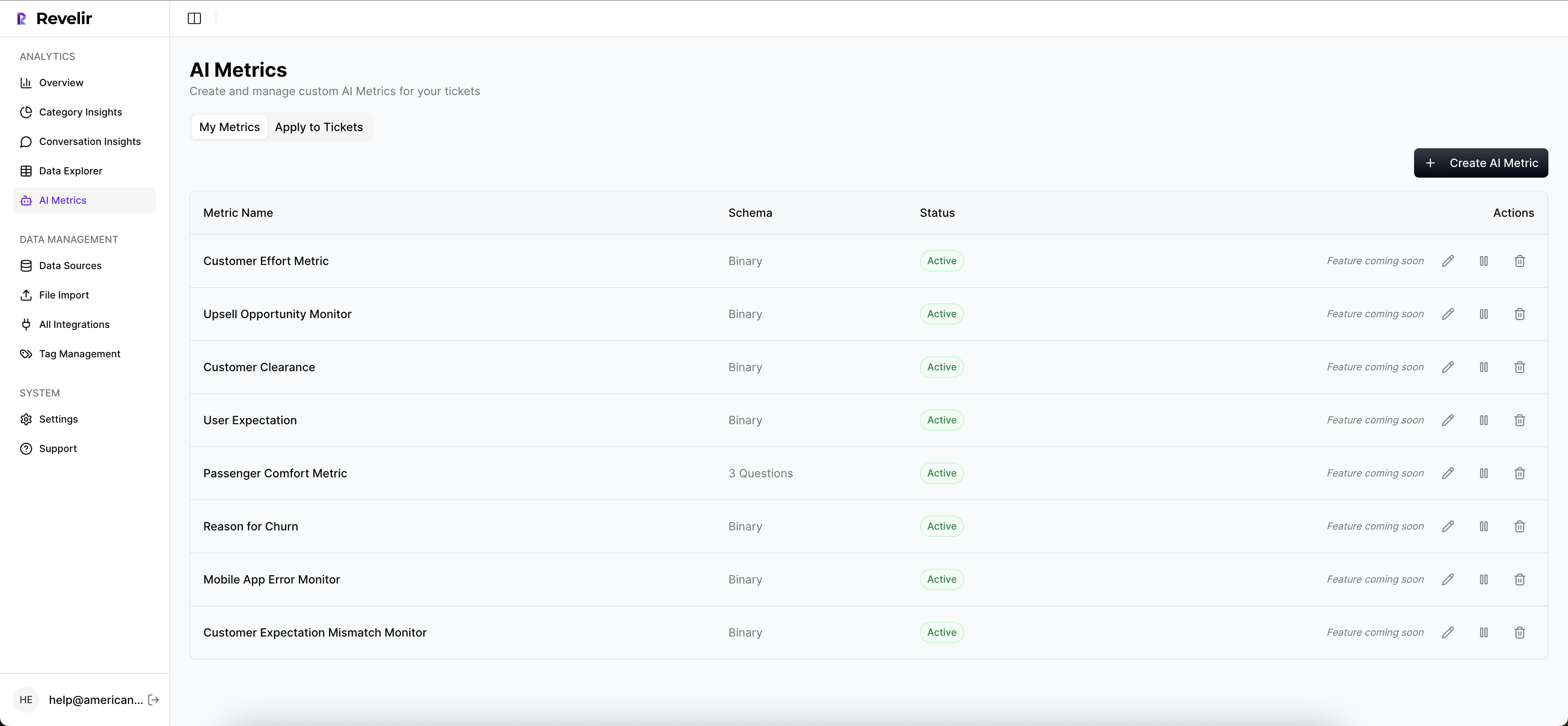

Custom Metrics In Your Language

Custom AI Metrics let you define classifiers that match your business language, like Upsell Opportunity or Reason for Churn. Those results become columns you can filter and analyze alongside sentiment and effort. Drivers roll these signals up for leadership-friendly reporting that still clicks back into the raw conversation.

You can start on top of Zendesk via a direct integration or upload a CSV to run a pilot. When you’re ready to share results beyond CX, use API Export to bring structured metrics into your BI tools. No rip and replace. No new helpdesk. Just an intelligence layer that makes conversations measurable.

Key capabilities at a glance:

- Full-Coverage Processing that eliminates sampling blind spots

- AI Metrics Engine for sentiment, churn risk, effort, and outcomes

- Hybrid Tagging with Raw and Canonical Tags mapped to Drivers

- Data Explorer and Analyze Data for fast, flexible ad hoc analysis

- Evidence-Backed Traceability with ticket-level drill-downs and quotes

Conclusion

Traditional surveys aren’t useless, they’re just incomplete on their own. Scores tell you what moved. Conversations tell you why, who, and what to fix first. When you measure 100 percent of support conversations and make every chart traceable to quotes, you replace debate with decisions.

This is usually the shift that unlocks real speed. You stop wasting time on wrong fixes, you catch risk before it turns into churn, and your reviews get a lot calmer. If you want to operate that way, start with evidence-backed metrics from your support data, then let the scores play a supporting role, not the lead. Ready to make the switch with traceability built in? See how Revelir AI works.

Frequently Asked Questions

How do I analyze customer support tickets effectively?

Start in Revelir AI's Data Explorer. Filter and group tickets by metrics like sentiment and churn risk to find patterns that point to underlying issues. Then use the Analyze Data feature to run grouped summaries, which help you understand the drivers behind customer feedback. Finally, make sure every metric is traceable back to the original conversations so you can validate it.

What if I need to track specific customer issues over time?

If you want to track specific customer issues over time, you can set up custom AI Metrics in Revelir AI. This allows you to define classifiers that match your business needs, such as tracking 'Billing Confusion' or 'Onboarding Issues.' By doing this, you can filter and analyze these metrics alongside sentiment and effort, giving you a clearer view of how these issues evolve. Regularly review the trends in the Data Explorer to stay informed.

Can I integrate Revelir AI with my existing helpdesk?

Yes, Revelir AI can integrate directly with your existing helpdesk, such as Zendesk. This integration allows you to automatically ingest support tickets and their metadata without manual exports. Once connected, Revelir will continuously process new or updated tickets, ensuring you have up-to-date insights. This seamless connection helps you maintain full coverage of your customer support conversations, which is crucial for identifying trends and issues.

When should I consider using custom metrics?

Consider custom metrics when you have specific business objectives that standard metrics don't cover. For instance, custom AI Metrics in Revelir AI let you define classifiers such as 'Upsell Opportunity' or 'Reason for Churn.' You can then analyze them alongside other metrics like sentiment and churn risk for a more tailored view of your customer interactions, and prioritize actions against your own business goals.

Why does traceability matter in customer support analysis?

Traceability is crucial in customer support analysis because it builds trust and credibility. With Revelir AI, every aggregate metric links directly to the source conversations and quotes. This means when stakeholders ask for proof of a trend or issue, you can quickly provide the exact tickets and quotes that support your findings. This level of transparency not only strengthens your case in discussions but also helps in making informed decisions based on solid evidence.