Most teams already track a few AI signals in support. The gap is turning those signals into support SLOs, built on AI signals, that drive daily shifts, triage, and product work. Timers are fine, but they miss effort and risk. If you shape SLOs around conversation signals, you stop guessing and start managing real failure modes.

It’s usually not a tooling problem. It’s a measurement problem. You need clear definitions, evidence you can click, simple runbooks, and a way to prove changes worked. We’ll walk that path, end to end, without making you rebuild your stack.

Key Takeaways:

- Treat AI conversation metrics as SLIs, then set SLOs that reflect effort and churn risk, not just speed

- Start with a tight set: High‑Effort rate, Churn‑Risk density, Transfer rate, plus sentiment drivers

- Define formulas, windows, and error budgets so reporting is consistent and defensible

- Wire alerts to link directly to the exact tickets behind a spike to cut debate and speed triage

- Use simple pre and post windows with guardrails to prove fixes worked, then iterate

- Export metrics to your BI, keep traceability in reach, and review trends with evidence

SLAs Are Not Enough: Build Support SLOs From AI Signals That Reflect Effort And Risk

Speed alone does not define a good support experience. You need SLOs that reflect how hard the customer had to work and whether the conversation hinted at churn. The fix is straightforward. Make AI conversation metrics the indicators you manage to, with evidence one click away.

Why Time-Based SLAs Miss The Real Failure Mode

SLA timers are table stakes, but they do not tell you if the customer struggled, felt ignored, or left frustrated. You can hit response and resolution times while still creating high‑effort loops, transfers, and incomplete answers. That is the cost that shows up later in escalations and renewals.

The real signal lives in the conversation. Frustration cues, negative sentiment drivers, and direct churn mentions capture what the clock cannot. When leaders ask why something dipped, they want the driver, not a surface metric. If you cannot point to the exact quotes behind a trend, trust erodes and decisions stall. We have seen that movie. Nobody is checking the timer first when a renewal is at risk.

When you elevate effort and risk to first‑class targets, teams behave differently. They reduce handoffs, simplify workflows, and write clearer responses. That is what you actually want the timer to produce. Use the timer for guardrails. Use AI signals to measure whether the experience worked.

Optional references you can share with stakeholders: Google SRE on Service Level Objectives and Atlassian on SLA vs SLO vs SLI.

What Changes When You Treat AI Signals As SLIs?

When sentiment distribution, Customer Effort, and Churn Risk become your SLIs, they turn vague debates into specific tradeoffs. Managers stop asking only “did we respond fast enough” and start asking “did we reduce high‑effort loops by plan tier” or “did we catch risk clusters before renewal.” That shift reduces noise.

This also changes incentives. Agents know that transfers and unclear replies show up as effort. Product sees which workflows drive negative sentiment and risk. Leadership discusses error budgets for effort spikes instead of only SLA breaches. You move from chasing scores to fixing drivers. The data finally matches the work you need to do.

One more benefit that is easy to miss. With evidence linked to every metric, coaching gets faster. You can open three tickets that show the pattern, align on what “good” looks like, and update macros or policies the same day.

What Does Efficiency Look Like In Conversation Data?

Efficiency is not a vibe. Write a clear definition teams can rally around. For most support orgs, it includes fewer high‑effort tickets per 1,000 conversations, low transfer rates, stable or improving sentiment, and reduced churn‑risk density in key cohorts. That is concrete and measurable.

Spell out the units and time windows. Seven‑day rolling windows work well for operations. Thirty‑ or ninety‑day windows help with strategy and trend reviews. Then set baselines from the last 90 days so targets are ambitious and believable. If you are starting from scratch, run a record‑only period first. That way you avoid setting thresholds that trigger constant false alarms.
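Here is a minimal Python sketch of the two windows. It assumes you can export one row per conversation with a date and a high‑effort label, where the label is None when there was too little back‑and‑forth to score. The row shape and function names are hypothetical, not any tool's API. The operational SLI uses the trailing seven days, and the baseline is the median of daily rates over the last 90 days.

```python
from datetime import date, timedelta
from statistics import median
from typing import Optional

# One row per conversation: (date, high_effort). high_effort is None when the
# conversation had too little back-and-forth to score, so it is excluded.
Record = tuple[date, Optional[bool]]


def high_effort_rate(records: list[Record], start: date, end: date) -> Optional[float]:
    """Share of scored conversations labeled high effort, start..end inclusive."""
    scored = [he for d, he in records if start <= d <= end and he is not None]
    if not scored:
        return None  # no evidence: leave the value empty instead of reporting 0
    return sum(scored) / len(scored)


def rolling_7d(records: list[Record], as_of: date) -> Optional[float]:
    """Operational SLI: the seven days ending on as_of (inclusive)."""
    return high_effort_rate(records, as_of - timedelta(days=6), as_of)


def baseline_median(records: list[Record], as_of: date, days: int = 90) -> Optional[float]:
    """Baseline: median of daily rates over the trailing window, skipping empty days."""
    daily = []
    for offset in range(days):
        day = as_of - timedelta(days=offset)
        rate = high_effort_rate(records, day, day)
        if rate is not None:
            daily.append(rate)
    return median(daily) if daily else None
```

Multiply either result by 1,000 to report high‑effort tickets per 1,000 scored conversations. Taking the median of daily rates keeps a single incident day from dragging the baseline around.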

Let’s pretend last quarter’s median was 32 high‑effort tickets per 1,000. Set an initial SLO at 30, with a small error budget for incidents, then tighten after you ship your first fixes. It is not about being perfect. It is about reducing the real failure mode at a pace the team can sustain.

Ready to stop guessing and start measuring what matters in support? See How Revelir AI Works.

Choose The AI Metrics That Map To Support Efficiency

Pick signals you can influence within a quarter. Sentiment, Customer Effort, Churn Risk, and Transfers map directly to coaching, workflow, and product changes. Make sure each metric links to the exact tickets behind it so you can validate fast and coach with context.

Selection Rules: Map Metrics To Controllable Levers

Your first set should map to actions you already know how to take. Sentiment shows emotional tone, which responds to copy and policy fixes. Customer Effort points to workflow friction, which responds to better macros and clearer paths. Churn Risk flags retention exposure, which drives CSM outreach and product prioritization. Transfers reveal coordination overhead you can reduce.

Pick metrics with evidence at hand. If managers can click from a chart to the exact conversations, they can verify patterns and decide. Without that traceability, you risk shipping the wrong fix. One more rule we use ourselves. If a metric does not show up in a weekly review with a clear next step, it is probably not worth tracking right now.

Also consider the shape of your data. An Effort score is only trustworthy when the conversation has enough back‑and‑forth. When volume or transcript depth is thin, leave the field empty rather than guess. That safeguard avoids misleading calls and rework.

Which AI Signals Belong In Your First SLOs?

Start small. Choose two or three core metrics so owners and runbooks are clear. High‑Effort rate is a must. Churn‑Risk density focuses attention where it matters most. Transfer rate keeps handoffs in check. Add sentiment drivers to explain the “why” behind dips without turning this into a score‑watching exercise.

If your domain needs it, include one custom AI metric that reflects your language, like “Expectation Mismatch” or “Refund Friction.” Keep the initial set tight, then expand once you prove the loop from metric to fix to impact. It is tempting to add five new targets. Resist that urge. Complexity is a hidden cost.

For more context, share a primer like Nobl9 on SLO metrics during onboarding. It helps align terms without derailing the decision.

Anti-Patterns To Avoid

Avoid vanity signals that cannot be traced back to conversations. If you cannot open three tickets that show the pattern, skip it for now. Do not over‑index on agent‑only metrics that ignore customer impact. You will optimize behavior without improving outcomes. That is the wrong trade.

Also avoid rolling five new SLOs into a single cycle. One noisy metric can drown alerts and waste hours. If a metric’s definition is still in flux or coverage is spotty, pause it until your taxonomy is ready. Confidence beats breadth here. It always does.

If you need a quick test, ask this out loud. “When this metric moves, do we know who acts and what they do first?” If the answer is no, it is not SLO material yet.

Define SLIs And SLOs From Conversation Signals

Turn each AI signal into a crisp SLI with a formula, window, and rounding rule. Then set SLO thresholds, error budgets, and owners so action is automatic. Start with a record‑only period before you codify targets to avoid noise.

Convert Signals To SLIs: Definitions And Units

Definitions remove debate. Write them like engineering specs. High‑Effort Rate equals the percent of conversations labeled High Effort in a seven‑day rolling window. Churn‑Risk Density equals risk flags per 1,000 conversations for the segment you care about. Transfer Rate equals tickets with two or more reassignments divided by total tickets.
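Written as code, the three definitions stay short. This sketch uses a hypothetical export schema: a `Ticket` with an effort label, a churn‑risk flag, and a reassignment count. It assumes you have already filtered the list to the window and segment you care about. It also assumes at most one churn‑risk flag per conversation and leaves unscored conversations out of the effort denominator. Adjust both assumptions if your data differs.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class Ticket:
    # Hypothetical export schema; rename fields to match your own data.
    ticket_id: str
    high_effort: Optional[bool]  # None when effort could not be scored
    churn_risk: bool             # conversation carried a churn-risk flag
    reassignments: int           # times the ticket changed owner or queue


def high_effort_rate_pct(tickets: list[Ticket]) -> Optional[float]:
    """Percent of scored conversations labeled High Effort."""
    scored = [t for t in tickets if t.high_effort is not None]
    if not scored:
        return None
    return 100.0 * sum(t.high_effort for t in scored) / len(scored)


def churn_risk_density(tickets: list[Ticket]) -> Optional[float]:
    """Churn-risk flags per 1,000 conversations."""
    if not tickets:
        return None
    return 1000.0 * sum(t.churn_risk for t in tickets) / len(tickets)


def transfer_rate_pct(tickets: list[Ticket]) -> Optional[float]:
    """Percent of tickets with two or more reassignments."""
    if not tickets:
        return None
    return 100.0 * sum(t.reassignments >= 2 for t in tickets) / len(tickets)
```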

Add rounding rules and exclusions. Decide how rates are rounded, for example to one decimal place, so every dashboard shows the same number. If tickets reopen within 24 hours, count them once. If a conversation lacks enough back‑and‑forth to score effort, keep the field empty instead of forcing a value. That safeguard prevents misleading classifications and keeps trust high. Small detail, big payoff.
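The reopen rule is easy to get subtly wrong, so here is one way to express it. This sketch assumes each open/close cycle arrives as a `(ticket_id, opened_at, closed_at)` tuple. That shape and the function name are hypothetical. A reopen within 24 hours of the previous close folds into the same conversation, and a later reopen counts as a new one.

```python
from datetime import datetime, timedelta

REOPEN_WINDOW = timedelta(hours=24)


def count_conversations(events: list[tuple[str, datetime, datetime]]) -> int:
    """Count conversations, folding reopens within 24h of the prior close into one.

    events: one (ticket_id, opened_at, closed_at) tuple per open/close cycle.
    """
    last_close: dict[str, datetime] = {}
    count = 0
    # Process each ticket's cycles in chronological order.
    for ticket_id, opened_at, closed_at in sorted(events, key=lambda e: (e[0], e[1])):
        previous = last_close.get(ticket_id)
        if previous is None or opened_at - previous > REOPEN_WINDOW:
            count += 1  # first contact, or a reopen outside the 24h window
        last_close[ticket_id] = closed_at  # later reopens measure from this close
    return count
```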

Document where people click to validate. In practice, that means linking the SLI to a saved view that opens the underlying conversations. If a number looks wrong, someone should be one click from reading the quotes that created it.

How Do You Set Thresholds Without Historical Baselines?

Use a staged approach. First, run record‑only for two weeks to see natural distributions. Second, adopt a wide target, like keeping the median daily High‑Effort Rate at or below the median you observed during the record‑only period. Third, tighten by 10 to 20 percent after your first fixes ship. You will earn confidence before you raise the bar.
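The staged approach fits in a few lines for a lower‑is‑better SLI such as High‑Effort Rate. This sketch assumes you keep one rate per day. Using the median day as the pass/fail statistic is our assumption. Swap in another percentile if that is how your team reads the target.

```python
from statistics import median


def starting_target(record_only_daily_rates: list[float]) -> float:
    """Wide first target: the median daily rate seen while only recording."""
    return median(record_only_daily_rates)


def meets_target(daily_rates: list[float], target: float) -> bool:
    """Lower is better: the period passes if its median day is at or under target."""
    return median(daily_rates) <= target


def tighten(target: float, pct: float = 15.0) -> float:
    """Lower the target by pct percent (10 to 20 in the staged approach) after fixes ship."""
    return target * (1 - pct / 100)
```

For example, `tighten(30.0, 10)` moves a 30‑per‑1,000 target to 27 once the first fixes land.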

Set error budgets so incidents do not cause whiplash. For example, an SLO of “no more than 30 high‑effort tickets per 1,000 conversations over a quarter” gives you a budget of 30 per 1,000 to spend across the whole quarter. A spike week uses some of it, but only sustained overspending breaches the target. If a specific category keeps burning the budget, make that visible in the review and allocate a fix.
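Error budget accounting is plain arithmetic. The sketch below uses hypothetical names. It treats a “no more than N per 1,000” SLO as allowing N high‑effort tickets per 1,000 conversations handled so far this quarter. It then shows how much of that allowance is spent and which categories spent it.

```python
from dataclasses import dataclass


@dataclass
class BudgetStatus:
    allowed: float     # high-effort tickets the SLO permits at current volume
    spent: int         # high-effort tickets observed so far this quarter
    remaining: float   # allowed - spent; negative means the SLO is breached
    burned_pct: float  # share of the budget already spent, in percent


def quarter_budget(slo_per_1000: float, conversations: int, high_effort: int) -> BudgetStatus:
    """Quarter-to-date error budget for a 'no more than N per 1,000' SLO."""
    allowed = slo_per_1000 * conversations / 1000
    burned = 100.0 * high_effort / allowed if allowed else float("inf")
    return BudgetStatus(allowed, high_effort, allowed - high_effort, burned)


def burn_by_category(counts: dict[str, int], allowed: float) -> list[tuple[str, float]]:
    """Percent of the budget each category consumed, largest first."""
    shares = [(category, 100.0 * n / allowed) for category, n in counts.items()]
    return sorted(shares, key=lambda item: item[1], reverse=True)
```

At 20,000 conversations and an SLO of 30 per 1,000, the quarter allows 600 high‑effort tickets. With 450 observed, 75 percent of the budget is burned and 150 remain.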

If stakeholders want a reference, point them to Datadog SLO documentation for language on targets and budgets. It grounds the conversation.

Example SLO Catalog For Support Efficiency

Catalogs make SLOs tangible. Keep it lightweight and specific, then assign owners.

- High‑Effort tickets under 25 per 1,000 conversations over a 30 day window (owner: CX Ops)

- Churn‑Risk under 8 per 1,000 for Enterprise accounts over a rolling quarter (owner: CS)

- Transfer Rate under 12 percent for Billing category over a 30 day window (owner: Support Manager)

- Negative Sentiment below 18 percent for New Customers cohort over a 30 day window (owner: CX Analytics)

Each entry should include the measurement window, breach condition, and a link to the runbook so action is immediate.
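If you keep the catalog in code or config, each entry can carry its window, breach condition, owner, and runbook link together. This sketch uses hypothetical field names. The runbook URLs are placeholders, the rolling quarter is approximated as 90 days, and the first entry's segment label is our own.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class SLO:
    name: str
    segment: str
    threshold: float   # "under X": the SLO is breached when the SLI reaches X
    unit: str          # "per_1000" or "percent"
    window_days: int
    owner: str
    runbook_url: str   # placeholder link; point it at your real runbook


CATALOG = [
    SLO("High-Effort tickets", "All conversations", 25, "per_1000", 30, "CX Ops",
        "https://example.com/runbooks/high-effort"),
    SLO("Churn-Risk", "Enterprise", 8, "per_1000", 90, "CS",
        "https://example.com/runbooks/churn-risk"),
    SLO("Transfer Rate", "Billing", 12, "percent", 30, "Support Manager",
        "https://example.com/runbooks/transfers"),
    SLO("Negative Sentiment", "New Customers", 18, "percent", 30, "CX Analytics",
        "https://example.com/runbooks/sentiment"),
]


def breaches(catalog: list[SLO], observed: dict[str, float]) -> list[SLO]:
    """SLOs whose observed SLI (keyed by SLO name) is at or above its threshold."""
    return [slo for slo in catalog if slo.name in observed and observed[slo.name] >= slo.threshold]
```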

Want outcomes in minutes, not months? Evidence‑backed metrics and drill‑downs make that possible. Learn More.

Wire Monitoring, Alerts, And Ticket Traceability To Run Remediation, Not Fire Drills

Monitoring only works if people trust the spike and know what to do next. Alerts should link directly to the exact tickets behind the metric. Reviews should focus on slow drift and root causes. That split keeps urgency where it belongs.

Instrumentation That Preserves Evidence

Dashboards are useful, but they must click through to conversations. When an SLO moves, the on‑call manager should be one link from the filtered set of tickets that drove the change. That path reduces debate and turns meetings into decisions.

Export the metrics to your BI or monitoring tool, then keep the evidence path intact with saved views. Require that every alert includes a link to the validating slice. You will shorten triage, reduce rework across shifts, and coach with real examples. It sounds simple. It is. It is also the difference between trust and churn.
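One way to keep the evidence path intact on export is to write a link column next to every metric row. This sketch assumes a hypothetical saved‑view URL that accepts metric, segment, and date filters as query parameters. Replace `VIEW_BASE` and the parameter names with whatever your analysis tool actually supports. It uses only the Python standard library (`csv`, `urllib.parse.urlencode`).

```python
import csv
import io
from urllib.parse import urlencode

# Hypothetical saved-view endpoint; substitute the link format your tool uses.
VIEW_BASE = "https://example.com/views"


def evidence_link(metric: str, segment: str, start: str, end: str) -> str:
    """Build a link that reopens the conversations behind one metric value."""
    query = urlencode({"metric": metric, "segment": segment, "from": start, "to": end})
    return f"{VIEW_BASE}?{query}"


def export_rows(rows: list[dict]) -> str:
    """Serialize daily SLI rows to CSV for a BI tool, one evidence link per row."""
    buffer = io.StringIO()
    writer = csv.DictWriter(
        buffer, fieldnames=["date", "metric", "segment", "value", "evidence_url"]
    )
    writer.writeheader()
    for row in rows:
        link = evidence_link(row["metric"], row["segment"], row["date"], row["date"])
        writer.writerow({**row, "evidence_url": link})
    return buffer.getvalue()
```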

As you design the views, mirror how your team thinks. Include filters for driver, canonical tag, segment, and timeframe. People default to their mental model under pressure. Meet them there.

What Should Trigger An Alert Versus A Weekly Review?

Define two classes. Alerts fire on fast‑moving risk. Reviews cover gradual drift. That line keeps noise down.

For alerts, consider thresholds like these:

- Churn‑Risk density more than two standard deviations above its recent baseline within 24 hours

- Transfer Rate exceeding target by 50 percent for two consecutive days

- High‑Effort rate doubling in any single category within a day
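The three alert conditions can each be checked with a simple function. This is a sketch built on our own assumptions. The baseline for the standard‑deviation rule is a list of recent daily densities, and “exceeding target by 50 percent” is read as above 1.5 times the target. Categories with a zero rate yesterday are skipped, because doubling from zero is undefined.

```python
from statistics import mean, stdev


def churn_spike(baseline_daily: list[float], last_24h: float, k: float = 2.0) -> bool:
    """True when the last 24h density is more than k standard deviations above baseline."""
    if len(baseline_daily) < 2:
        return False  # stdev needs at least two points; not enough history to judge
    return last_24h > mean(baseline_daily) + k * stdev(baseline_daily)


def transfer_breach(daily_rates: list[float], target: float) -> bool:
    """True when each of the two most recent days exceeds the target by over 50 percent."""
    recent = daily_rates[-2:]
    return len(recent) == 2 and all(rate > 1.5 * target for rate in recent)


def effort_doubled(yesterday: dict[str, float], today: dict[str, float]) -> list[str]:
    """Categories whose High-Effort rate at least doubled day over day."""
    return [
        category
        for category, rate in today.items()
        if yesterday.get(category, 0) > 0 and rate >= 2 * yesterday[category]
    ]
```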

For reviews, look at weekly sentiment drift or slowly rising effort in onboarding. These patterns matter, but they do not require paging a manager. If you are unsure, ask whether waiting 48 hours would change the outcome. If not, put it in the review.

Fast Remediation Playbooks, Routing, And Recovery

Runbooks should be short, specific, and owned. When High‑Effort breaches in a category, pause auto‑replies for that topic, route to a named squad, and post a one‑paragraph agent note that shortens back‑and‑forth. When Churn‑Risk spikes, flag CSMs with the affected accounts and sample quotes, then open a product ticket if a driver repeats.

Close with a micro‑experiment you can ship this week. Update a macro, fix a form, or adjust a policy. Then watch the metric and read a handful of tickets in the affected slice. If the numbers say it improved but the quotes sound off, you have work left. That is not failure. That is the loop working.

Measure Impact Without Guessing: Experiments, Windows, And Statistical Checks

You do not need a PhD to prove a fix worked. Use matched pre and post windows, segment by the driver you targeted, and add simple guardrails. Then read a few tickets to sanity‑check the numbers. If the story and the stats disagree, dig.

Pre/Post Windows And Guardrails

Pick a fixed pre window, like 28 days, and a matched post window after the change ships. Segment by driver or canonical tag to avoid dilution. If volume is low, lengthen the window so variance does not swamp the signal. This keeps you honest without slowing the team.

Add guardrails that protect conclusions. Set a minimum conversation count before you declare a win. Exclude holidays or campaign spikes that do not reflect normal traffic. Write a single decision question up front so the analysis stays focused. Example: “Did the updated billing macro reduce High‑Effort in Billing by at least 20 percent?”

You will avoid the common mistake of overfitting to a noisy week.
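Here is a sketch of matched pre and post windows with the guardrails above. It assumes rows of `(date, driver, high_effort)`. `MIN_CONVERSATIONS = 200` is a hypothetical placeholder, not a recommendation, so set it from your own volume. The 20 percent goal mirrors the example decision question.

```python
from datetime import date, timedelta
from typing import Optional

# (conversation date, driver, high_effort); high_effort None = not scored.
Row = tuple[date, str, Optional[bool]]

MIN_CONVERSATIONS = 200  # hypothetical guardrail; derive yours from typical volume


def window_counts(rows: list[Row], driver: str, start: date, days: int,
                  excluded: frozenset) -> tuple[int, int]:
    """(high-effort count, scored count) for one driver over [start, start + days)."""
    end = start + timedelta(days=days)
    scored = [he for d, drv, he in rows
              if drv == driver and start <= d < end and d not in excluded and he is not None]
    return sum(scored), len(scored)


def pre_post(rows: list[Row], driver: str, ship_date: date, days: int = 28,
             excluded: frozenset = frozenset(),
             min_n: int = MIN_CONVERSATIONS) -> Optional[dict]:
    """Matched windows before and after ship_date for one driver, with guardrails."""
    pre_hits, pre_n = window_counts(rows, driver, ship_date - timedelta(days=days), days, excluded)
    post_hits, post_n = window_counts(rows, driver, ship_date, days, excluded)
    if min(pre_n, post_n) < max(min_n, 1):
        return None  # too few conversations to declare a win or a loss
    pre, post = pre_hits / pre_n, post_hits / post_n
    rel = 100 * (post - pre) / pre if pre else None
    return {
        "pre": pre,
        "post": post,
        "relative_change_pct": rel,
        # Decision question fixed up front: did High-Effort drop by at least 20 percent?
        "met_goal": rel is not None and rel <= -20,
    }
```

Pass holiday or campaign dates in `excluded` so they do not count toward either window.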

How Do You Prove The Fix Worked?

Use paired comparisons on the same segments you targeted. Track absolute and relative change in the SLI, like High‑Effort Rate down 20 percent in Billing. Where possible, add a confidence interval or at least a minimum detectable effect threshold based on volume. This helps you avoid calling noise a win.
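For the interval and the minimum detectable effect, normal approximations for two proportions are usually enough at support volumes. This sketch uses only `statistics.NormalDist` from the standard library. The Wald‑style interval and the 80 percent power default are our choices, not a prescribed method. Both get shaky when counts are very small.

```python
from math import sqrt
from statistics import NormalDist


def diff_ci(pre_hits: int, pre_n: int, post_hits: int, post_n: int,
            level: float = 0.95) -> tuple[float, float]:
    """Normal-approximation interval for (post rate - pre rate)."""
    p_pre, p_post = pre_hits / pre_n, post_hits / post_n
    se = sqrt(p_pre * (1 - p_pre) / pre_n + p_post * (1 - p_post) / post_n)
    z = NormalDist().inv_cdf(0.5 + level / 2)
    diff = p_post - p_pre
    return diff - z * se, diff + z * se


def mde(baseline_rate: float, pre_n: int, post_n: int,
        alpha: float = 0.05, power: float = 0.8) -> float:
    """Approximate smallest absolute change detectable at these volumes."""
    z_alpha = NormalDist().inv_cdf(1 - alpha / 2)
    z_power = NormalDist().inv_cdf(power)
    return (z_alpha + z_power) * sqrt(baseline_rate * (1 - baseline_rate) * (1 / pre_n + 1 / post_n))
```

With 1,000 conversations per window and a 16 percent baseline, `mde` comes out a little under 5 percentage points, so a one‑point move at that volume is likely noise. If the interval from `diff_ci` excludes zero, the change is unlikely to be chance alone.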

Then validate in the transcripts. Open five to ten tickets from the post window and read them. Do they sound easier, clearer, less frustrated? If the numbers and the quotes agree, you are done. If not, tune the fix and rerun the window. We have had to do this ourselves. It is normal.

If your stakeholders want a reference, this short overview from Coralogix on service SLOs can help ground the approach.

Reading Noise Versus Signal

Not every swing is meaningful. If weekly counts are small, a few tickets can skew a rate. Lengthen the window or group similar categories to get stable reads. Avoid chasing single‑day spikes unless they trip your incident criteria. That is how teams burn out and still miss risk.
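A quick way to see whether a rate is stable enough to act on is to put an interval around it. This sketch uses the Wilson score interval. We chose it because it behaves better than the plain normal interval at small counts.

```python
from math import sqrt


def wilson_interval(hits: int, n: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a rate; a wide interval means the read is noisy."""
    if n == 0:
        return 0.0, 1.0  # no data: the rate could be anything
    p = hits / n
    denom = 1 + z**2 / n
    center = (p + z**2 / (2 * n)) / denom
    half = z * sqrt(p * (1 - p) / n + z**2 / (4 * n**2)) / denom
    return center - half, center + half
```

At 40 tickets, a 10 percent rate is consistent with anything from roughly 4 to 23 percent. At 400 tickets, the same rate narrows to roughly 7 to 13 percent. When the interval is wider than the move you are looking at, lengthen the window or group categories.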

Build a cadence. Weekly reviews for drift, daily checks for incidents, and monthly reviews for strategy. Over time, people learn what moves the numbers and you ship better fixes faster. Progress beats perfection here. Always.

How Revelir AI Operationalizes Support SLOs In Your Stack

Revelir AI turns 100 percent of your conversations into structured metrics you can trust, then keeps the path back to the source visible. It is not another dashboard. It is the measurement layer and the evidence that makes SLOs workable in the real world.

Full-Coverage AI Metrics With Evidence You Can Click

Revelir AI processes every conversation, not a sample, and generates Sentiment, Churn Risk, Customer Effort, and custom AI Metrics. Each aggregate links to the exact tickets and quotes that produced it. That traceability closes the trust gap that kills initiatives in review meetings.

This matters operationally. Manual validation drops from hours to minutes because managers can open the validating slice and read representative quotes on the spot. It also matters culturally. When every claim can be traced to the words customers actually said, priority debates get shorter and fixes ship faster. We have watched teams move from narrative to numbers, then back to narrative with evidence intact. That is the point.

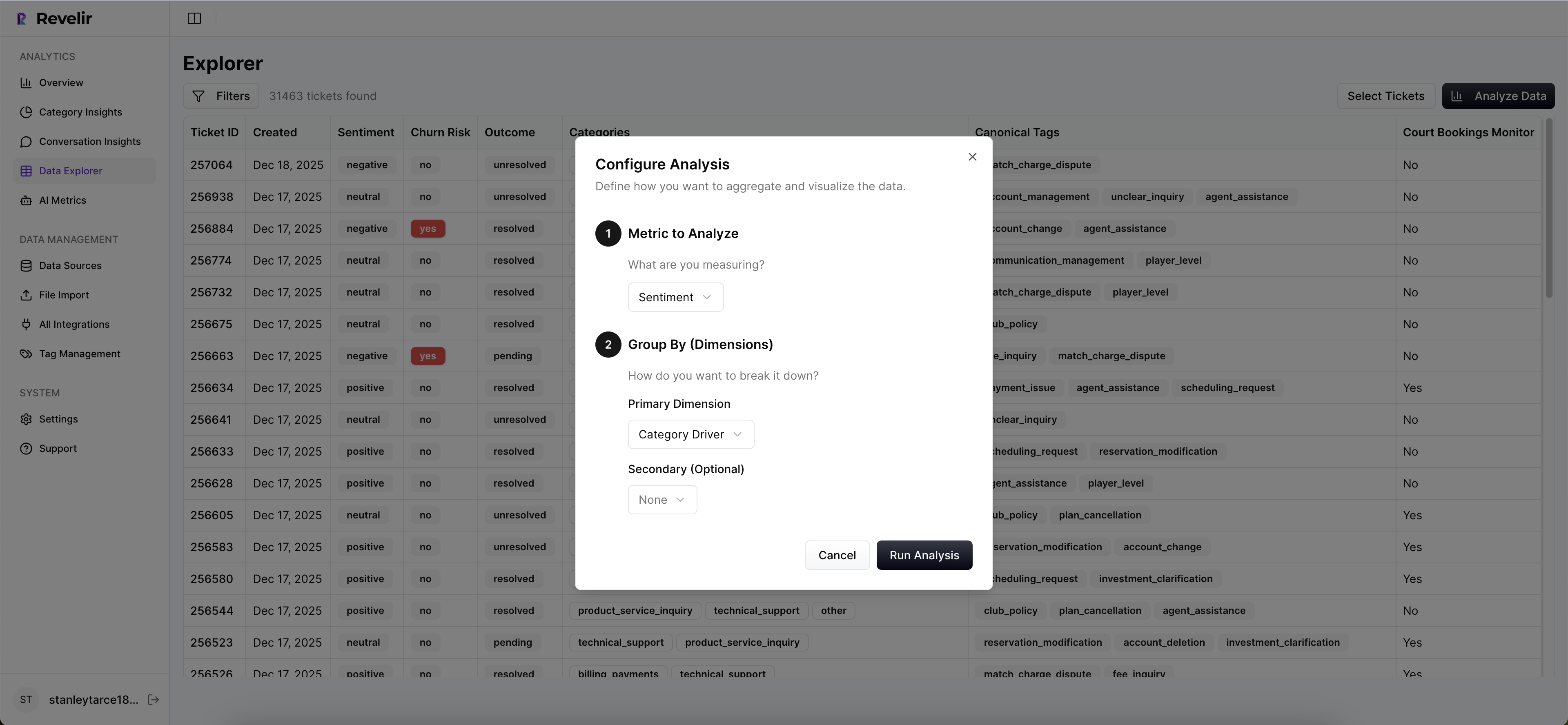

Data Explorer, Drivers, And Rapid Pivoting For SLOs

With Revelir AI, you pivot SLIs by driver, canonical tag, or segment in Data Explorer to isolate where effort or risk concentrates. Drivers give you the strategic lens leadership expects. Canonical tags keep reporting clean. Raw tags support discovery without breaking structure. Together, they turn scattered issues into themes you can prioritize.

The workflow is simple. Filter the dataset, run an analysis to see grouped results, then click into the conversations behind any cell to validate the pattern. When a slice looks wrong, adjust tags or custom metrics and watch the mapping improve over time. That feedback loop keeps the data aligned with your business language without heavy manual tagging.

Here is the practical payoff. Your SLO reviews lead to specific product and workflow changes, not vague to‑dos. And when someone asks “show me,” the proof is one click away.

How Does Revelir AI Shorten Time From Alert To Fix?

Revelir AI does not replace your monitoring tools. It supplies the measurement layer and the evidence path. You export metrics to your dashboards, then click back into Conversation Insights to coach agents and confirm improvements. Data Explorer is where most of the day‑to‑day analysis happens because it feels like a pivot table built for tickets.

Tie this back to the costs of the status quo. Sampling creates delay and bias. Black‑box scores erode trust. Manual QA eats hours that should go to fixes. With Revelir AI, full coverage eliminates blind spots, traceability answers “show me,” and drill‑downs make validation fast. The transformation is straightforward. Remediation happens inside the same week, not next quarter.

Want to see this workflow on your own data with metrics you can trust the same day? See How Revelir AI Works.

Conclusion

If your support strategy still hinges on timers and a handful of sample reads, you will miss the patterns that matter. Build support SLOs from AI conversation signals, keep evidence one click away, and run small, fast experiments that prove impact. Start tight, tune definitions, then raise the bar. You will reduce effort, catch risk earlier, and make better product calls without guessing.

Frequently Asked Questions

How do I set up custom AI metrics in Revelir AI?

To set up custom AI metrics in Revelir AI, start by deciding which metrics you want to track in your own business language. This could include metrics like 'Customer Effort' or 'Reason for Churn.' Next, navigate to the metrics configuration section in Revelir and input the questions you want the AI to answer, along with the possible values for each metric. Once you've defined these, Revelir will automatically apply them to your conversations, allowing you to analyze the results in the Data Explorer. This setup helps tailor the insights to your specific needs and enhances your ability to make data-driven decisions.

What if I want to analyze churn risk for specific customer segments?

If you want to analyze churn risk for specific customer segments in Revelir AI, first filter your ticket dataset by the desired customer segment using the Data Explorer. You can apply filters based on metadata such as customer tier or plan type. After filtering, click on the 'Analyze Data' button, select 'Churn Risk' as the metric, and group the results by 'Driver' or 'Canonical Tag.' This will provide insights into which issues are most associated with churn risk for that segment, allowing you to take proactive measures to address those concerns.

Can I validate AI metrics with real conversation examples?

Yes, you can validate AI metrics with real conversation examples in Revelir AI. After running your analysis in the Data Explorer, you can click on any metric segment to access the underlying tickets. This feature allows you to view full conversation transcripts, AI-generated summaries, and the assigned metrics for each ticket. By reviewing these examples, you can ensure that the AI classifications make sense and align with the actual customer experiences, providing a trustworthy basis for your insights.

When should I refine my tagging system in Revelir AI?

You should consider refining your tagging system in Revelir AI whenever you notice inconsistencies or redundancies in your current tags. This can happen after analyzing your data and identifying patterns that don't align with your business needs. To refine your tags, start by mapping messy raw tags into a smaller set of meaningful canonical categories. Clean up any redundant or legacy tags, and ensure that your tagging system evolves with your support data. Regular refinement helps maintain clarity and accuracy in your insights, making it easier for your team to report and act on findings.

Why does Revelir AI emphasize evidence-backed metrics?

Revelir AI emphasizes evidence-backed metrics because they provide a trustworthy foundation for decision-making. By processing 100% of your support conversations, Revelir ensures that insights are based on complete data rather than sampled subsets, which can miss critical signals. Each metric is traceable back to the exact conversations that generated it, allowing teams to validate findings with real examples. This transparency builds trust among stakeholders and helps prioritize product improvements and customer experience initiatives based on solid evidence.