Support leaders tell me the same thing in first calls: you want to operationalize sentiment alerts without waking the team for noise. You want precision, proof, and a short path from alert to action. You also want to keep trust intact. That’s the heart of this piece, a concrete way to operationalize sentiment alerts so your queue stays focused and your best people don’t mute the channel.

If you only take one idea, make it this: you operationalize sentiment alerts by defining “actionable negative” first, not by piping raw scores into an incident room. Probability, drivers, and quotes turn a label into evidence. Business context turns evidence into priority. Do those well and you’ll move faster with fewer mistakes.

Key Takeaways:

- Treat “negative” as a hypothesis until you attach probability, drivers, and a quote

- Pair sentiment with context like account tier, renewal window, and active escalations

- Measure precision weekly, not just volume, or you’ll miss where noise creeps in

- Process 100 percent of conversations so you do not hide patterns in unmonitored channels

- Calibrate thresholds on recent data and adjust after major product changes

- Blend model confidence with business impact to rank what gets human eyes first

- Keep a fast human QA loop on Sev 1 to preserve trust and shorten time to action

Why Raw Sentiment Alerts Break Trust

Raw sentiment labels trigger noise because they are not evidence. An alert must answer three questions in seconds: how confident is the model, what is driving the emotion, and where can I verify the claim. When you ship those three together, you cut debate and shorten the path to the right fix.

Scores Are Not Alerts, Evidence Is

An alert is actionable only when it carries proof, not just a color. Start with calibrated probability on the negative class, then attach the top driver tags, and include a verifiable quote from the conversation. If an agent cannot click straight to source context, your next standup turns into a debate about accuracy.

Most teams skip this and pay for it later. You see a red label with no driver, then you dig for five minutes to find the transcript. By the time you get there, the pattern is weak or off-topic. That’s how alerts lose credibility. Better to declare the rule up front: no alert fires without probability, drivers, and a quote, period.

A simple checklist helps after context is set:

- Probability meets the tier threshold, and the model is recent

- Top drivers explain why this is negative in plain English

- One quote shows the issue clearly, with a link to the ticket

Tie “Negative” To Business Impact

Not every negative is equal. A midnight complaint from a low‑value plan is not the same as a high‑value renewal at risk during business hours. Pair sentiment with account tier and renewal window, then factor current escalations. This is how you protect attention and spend the team’s time where it matters.

Define explicit criteria. For example, you might only alert when probability of negative exceeds 0.75 and the account is enterprise or within 60 days of renewal. That rule prevents queue floods from low‑impact spikes. It also sets a shared language with leadership about what deserves a page versus a daily summary.

One more nuance we like: include a “suppression list” for known low-severity, high-volume drivers that cause noise. You can still track them, but they do not page anyone.

The Case For Evidence When You Operationalize Sentiment Alerts

Precision makes alerts trusted. Coverage makes them complete. You need both. Precision is the share of fired alerts that humans agree are truly actionable. Coverage is the percent of conversations under watch. If either is weak, you get bias, waste, and misses that hurt renewal and morale.

What Does Precision Really Mean For Alerts?

Alert precision tells you how often the system is right when it asks for attention. Set a target, like 80 percent precision on Sev 1, then measure it weekly with a light QA sample. Precision jumps when alerts include calibrated probability, clear drivers, and a link to the source conversation.

Make this visible. Track precision by tier, by driver, and by channel. If one driver drags precision down, tune thresholds or exclude it from paging. If you want background, this framing aligns with how IBM explains practical sentiment analysis in CX. They stress context and calibration, not just labels.

Two quick patterns to avoid:

- Blind confidence thresholds with no recent validation set

- “Any negative gets a Sev 1” policies that flood your best people

Coverage Eliminates Blind Spots

You cannot trust your metrics if you only watch a slice of the queue. Process 100 percent of conversations so emerging drivers are visible across channels, cohorts, and product areas. Sampling invites bias and delay, which is why simple score-watching rarely tells you what to fix.

Full coverage also changes the conversation with stakeholders. You can pivot by plan tier or driver and still click into quotes to prove the pattern. That mix is what gets decisions made. For context on why this matters, see the way Zendesk frames customer sentiment as an always-on lens, not an occasional report.

When you have coverage and precision together, you stop arguing about representativeness and start deciding what to fix first.

The Hidden Cost Of Trying To Operationalize Sentiment Alerts Without Guardrails

The mistakes are predictable. Noise, misses, and slow validation time pile up. If you do not measure that cost, the team will live with it quietly and burn out. Once you put numbers on it, you can tune thresholds, rewrite runbooks, and get hours back.

Quantify False Positives And Misses

Start by putting a price on attention. Let’s pretend last week 120 alerts fired, 65 were false positives, and 9 serious negatives were missed. That is 54 percent wasted attention and 9 risky delays. Now add mean time to validate, say 7 minutes per Sev 1. You just burned 7.6 hours on mistakes and pushed nine problems downstream.

Track three core metrics weekly:

- False positive rate by tier and by driver

- Miss rate on known-severe segments, like enterprise renewals

- Mean time to validate, the silent tax that frustrates everyone

If you want a deeper read on the math behind classification tradeoffs, this short paper on calibration and thresholds is helpful context: arXiv 2010.13684.

Noise Creates Burnout And Slower Response

High-noise alerts force agents to re-check context, then re-route manually. Managers lose an hour a day adjudicating edge cases. Over a month, that erodes trust and stretches SLAs. You see it in escalations and CSAT. The worst part is the learned behavior: people mute channels, then the alert that mattered slips by.

We have also seen leaders hesitate to act when they cannot trace an alert to a quote. Confidence evaporates quickly. The fix is not another dashboard. It is transparent, evidence-backed alerts that reduce second guessing and let the team move.

You do not need to boil the ocean. Pick one tier, tune it, then move to the next.

What Alert Fatigue Feels Like When You Operationalize Sentiment Alerts Poorly

Alert fatigue is not abstract. It is how the day feels. On the floor, it looks like guesswork. In leadership meetings, it sounds like stalled decisions. Both are avoidable if your alerts carry proof and your rules treat impact as a first-class input.

On The Floor, It Feels Like Guesswork

You see a red banner, then a thin label with no context. You click three times to find the transcript. It is not even your product area. Frustrating rework follows. After a week of this, you mute the channel. Then the one alert that mattered slides by because nobody is watching at 4 pm.

So why does this happen? Because nobody is checking probability, drivers, and quotes before paging. And nobody is filtering by impact. Fix those two, and the floor stops playing detective and goes back to helping customers.

Here is the simple test agents love: can I understand why this fired in 10 seconds or less.

In Leadership Meetings, Confidence Evaporates

You show an escalation trend and get the question everyone asks: where did this come from. If you cannot open a filtered view of tickets and read two quotes that match the claim, the room stalls. Evidence beats anecdotes every time. Bring a slice that shows negative sentiment by driver, for enterprise, with real examples.

That proof changes the energy in the room. Product leaders stop arguing edge cases and start sizing the fix. Finance stops questioning the sample and starts asking about impact. If you want a quick primer that mirrors this approach, SupportLogic’s overview on sentiment analysis benefits in support is a useful outside reference.

We have all been in the other kind of meeting. Nobody wants to go back.

How To Operationalize Sentiment Alerts With Precision

You build precision by design, not by accident. Define severity and SLOs. Calibrate thresholds on recent data. Blend confidence with business impact to rank the queue. Then route to the right channel and keep a tight human QA loop. This is the part you can implement this month.

Set Severity Tiers, SLOs, And Alert Objectives

Start by agreeing on what deserves attention and how fast you will respond. Name your tiers, write the SLOs, and connect them to business impact so everyone understands the why.

Then document clear examples before you flip the switch. A simple starting framework:

- Define tiers and SLOs: Sev 1 acknowledge in 15 minutes, Sev 2 same day, Sev 3 within 48 hours

- Require evidence per alert: probability threshold, drivers, and a quote

- Add impact filters: enterprise accounts or renewal within 60 days

- Keep a suppression list for noisy, low-severity drivers that do not page

One interjection before we move on. If a rule causes noise, change it in writing and tell the team why.

How Should You Calibrate Models And Thresholds?

Calibrate on recent data. Plot precision and recall across thresholds for negative sentiment, then pick operating points that hit your tier goals. For example, 0.85 for Sev 1, 0.70 for Sev 2, with guardrails for known noisy drivers.

A practical cadence:

- Build a small validation set with human labels from the last 30 days

- Plot precision and recall by threshold and pick targets per tier

- Add rule-based overrides only if they lift precision on recent data

- Re-check thresholds monthly and after product or policy changes

If you want more market context, Zendesk’s take on customer sentiment reinforces the need for continuous tuning, not set-and-forget scores.

Confidence-Weighted Prioritization With Business Context

Not every negative needs the same response. Compute a simple score that blends model confidence with impact so you sort by what moves the business, not just what is most certain.

A practical blend:

- 0.6 times sentiment probability

- plus 0.2 if churn risk is yes

- plus 0.15 if account is enterprise

- plus 0.05 for a recent ticket volume spike

Sort queues by this score. Send low-impact alerts to summaries instead of paging.

Route, Escalate, And Keep A Human In The Loop

Map tiers to channels and runbooks so nobody wonders where to act. Require a fast human QA action on every Sev 1 so you keep trust high and shorten time to fix.

A clean routing pattern:

- Sev 1 to an incident room with senior agents

- Sev 2 to a priority queue with clear owners

- Sev 3 to a daily summary for triage

- For Sev 1, approve or downgrade within 5 minutes with a one-click reason code

- Sample 10 to 20 percent of Sev 2 and 3 weekly for review and tuning

Log every decision. You will use that data to adjust thresholds and prove improvement.

Ready to stop chasing noisy labels and start shipping evidence-backed alerts? See how Revelir AI works.

How Revelir AI Powers Operationalized, Auditable Sentiment Alerts

Revelir AI turns support conversations into evidence-backed metrics at full coverage. It assigns Sentiment and Churn Risk, supports Customer Effort when data quality allows, and preserves a click-through path to exact quotes. That means your alerts can carry confidence, drivers, and proof by default.

Evidence-Backed Measurement You Can Trust

Revelir processes 100 percent of conversations, then stores structured metrics for every ticket. Each aggregate links back to the exact conversation and quote, so you can answer the “show me” question in seconds. When someone asks why this fired, open Conversation Insights and read the lines that matter.

In practice, this changes behavior. Leaders see the number, then the words behind it. Product stops doubting the sample and starts sizing fixes. Support stops re-reading tickets just to validate an alert that never should have fired.

The Analytics Layer Behind Precision And Tuning

Use Data Explorer to segment negatives by driver, plan tier, or product area, then validate model behavior on recent windows. It is the fastest way to pick thresholds that hit precision targets, find high-noise drivers to exclude from paging, and monitor drift with grouped analyses.

Two workflows matter most:

- Analyze Data to run sentiment by driver or churn risk by category

- One click into Conversation Insights to confirm examples match the pattern

Evidence first, edits second. That is how you tune without drama.

3x faster threshold tuning, fewer noisy pages. Want to see it on your data? Learn More.

Connect To Workflows Without Ripping And Replacing

Revelir fits into the stack you already use. Export metrics via API to paging or routing systems so alerts carry calibrated probabilities, key drivers, and links back to source evidence. Keep agents in the helpdesk they know while Revelir provides the measurement and validation layer.

If your rational drowning math showed 7 minutes wasted per manual review, Conversation Insights turns that into a quick skim with proof in one place. Less hunting, more fixing.

Capabilities That Make Alerts Auditable

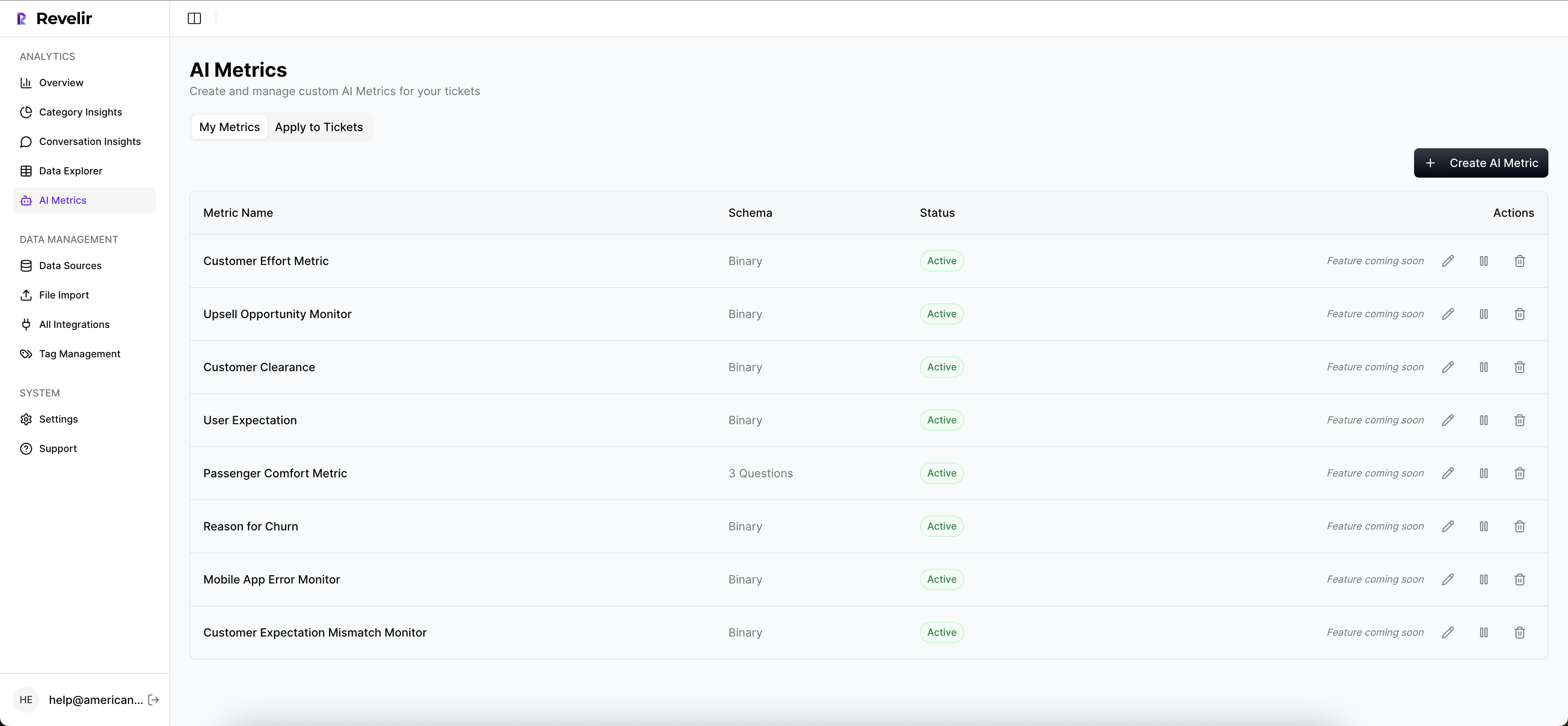

Revelir includes specific features that support precise, trustworthy alerts:

- Full-coverage processing: every ticket analyzed so no cohorts fall through the cracks

- AI metrics and tags: Sentiment, Churn Risk, Customer Effort where supported, plus custom AI Metrics

- Evidence-backed traceability: every aggregate links to the exact quote for fast audits

- Data Explorer and Analyze Data: pivot by driver, tag, or segment to tune thresholds and watch drift

- API export: push structured metrics wherever routing decisions happen

Ready to build an alert pipeline that your team will not mute? Get started with Revelir AI (Webflow).

Conclusion

Operationalizing sentiment alerts is not about more pages. It is about better signals. Define actionable negatives with probability, drivers, and quotes. Add business context so priority reflects impact. Calibrate thresholds on recent data, then blend confidence with risk to rank the queue. Keep a fast human QA loop on Sev 1 so trust never slips.

Revelir AI makes that new way real. Full coverage gives you complete visibility. Evidence-backed traceability makes every alert auditable. The analytics layer helps you tune without guesswork. Put it together and you get fewer mistakes, faster fixes, and a team that trusts the system again.

Frequently Asked Questions

How do I set up Revelir AI with my existing helpdesk?

To set up Revelir AI with your helpdesk, you can either connect directly through an integration (like Zendesk) or upload CSV files of your support tickets. If you choose the integration route, simply link your helpdesk account to Revelir, and it will automatically import historical tickets and ongoing updates. For CSV uploads, export your tickets from your helpdesk, then upload them via the Data Management section in Revelir. This process ensures that all your conversations are analyzed, providing you with structured insights quickly.

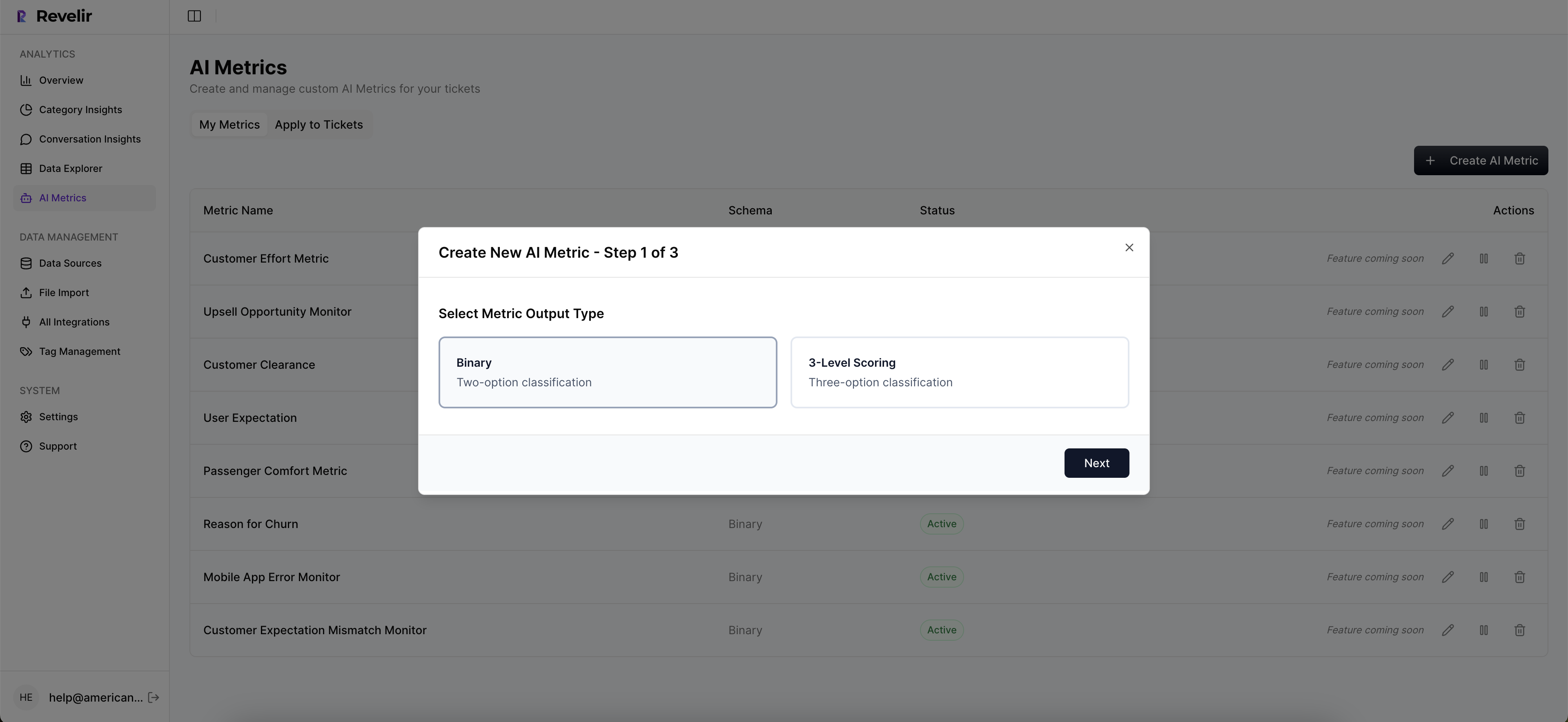

What if I want to customize metrics in Revelir AI?

You can customize metrics in Revelir AI by defining your own AI Metrics that align with your business needs. For instance, you might want to track metrics like 'Customer Effort' or 'Reason for Churn.' To do this, go to the metrics configuration section and specify the metrics you want to enable. Revelir will then apply these custom metrics consistently across your conversations, allowing you to gather insights that are meaningful for your specific context.

Can I validate insights from Revelir AI with real conversations?

Yes, validating insights with real conversations is a key feature of Revelir AI. You can use the Conversation Insights tool to drill down into specific metrics or categories. For example, if you notice a spike in negative sentiment, you can click on the corresponding metric in the Data Explorer to view the actual conversations behind it. This allows you to ensure that the insights align with the context of the conversations, providing a trustworthy basis for decision-making.

When should I adjust the thresholds for alerts in Revelir AI?

You should consider adjusting the thresholds for alerts in Revelir AI after major product changes or when you notice shifts in customer sentiment patterns. Regularly calibrating these thresholds based on recent data can help you maintain the precision of your alerts. For example, if you launch a new feature and receive unexpected feedback, it might be time to reassess what constitutes an 'actionable negative' alert to ensure your team remains focused on the most relevant issues.

Why does Revelir AI focus on 100% conversation coverage?

Revelir AI focuses on 100% conversation coverage to eliminate bias and ensure that no critical signals are missed. By analyzing every support ticket, Revelir can capture frustration cues, churn mentions, and product feedback that might be overlooked with sampling methods. This comprehensive approach allows teams to make data-driven decisions based on complete insights, rather than relying on potentially misleading samples or trends.