You’re probably still looking at a CSAT dip and pretending it tells you where to fix the business. It doesn’t. If you’re serious about intent-based ticket routing, the real issue usually starts one layer earlier: your team doesn’t have a clean way to classify what the customer is actually asking for.

A lot of support ops teams keep adding rules, queues, and exception handling because they think routing is a workflow problem. Same thing with manual triage. Same thing with “smart” inbox setups. It feels operational, but it’s really a classification problem first and a routing problem second.

Key Takeaways:

- Intent-based ticket routing only works when intent definitions are tighter than queue names.

- If confidence is below a set threshold, route to a safe fallback queue instead of forcing a bad guess.

- A simple intent-to-queue matrix can cut misroutes faster than adding more keyword rules.

- Sampling ticket data gives you false confidence. Routing models need 100% conversation coverage.

- Custom metrics matter because your business language rarely matches off-the-shelf labels.

- Every routing decision should be traceable back to the original ticket text.

- Rollout works best when you A/B test one high-volume queue first, then expand.

The short version is this: better routing comes from better classification, not more queue logic.

Why Ticket Routing Keeps Breaking Even When You Add More Rules

Intent-based ticket routing breaks when teams treat labels as admin cleanup instead of operational logic. The first thing to fix is not the queue map. It’s the way you define, detect, and validate customer intent across the full ticket stream.

Let's pretend you're a support lead at a SaaS company with 18,000 tickets a month. Monday morning. Zendesk is full, agents are reassigning tickets by hand, product is asking why onboarding complaints spiked, and nobody's checking whether “billing issue” actually means refund request, invoice confusion, failed card update, or enterprise procurement hold. Those are not the same thing. But they get routed like they are.

The queue map usually hides the real problem

Most routing setups look organized from far away. You’ve got Tier 1, Billing, Technical, VIP, maybe onboarding, maybe cancellations. Fine. But those are destination buckets, not intent definitions. And when your system routes based on shallow keywords or old helpdesk tags, it collapses distinct customer needs into broad buckets that agents have to unravel later.

That’s where the Intent Precision Gap shows up. It’s a simple framework: if one label maps to more than three materially different customer requests, that label is too broad to route from. “Billing” almost always fails this test. Same thing with “technical issue.” If the label is broad enough to sound tidy in a dashboard, it’s usually too vague to drive good routing.
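One way to apply the Intent Precision Gap test mechanically is sketched below. It assumes you have already listed, for each helpdesk label, the distinct customer requests it covers (for example, from a transcript review). The label names and the `find_overbroad_labels` helper are hypothetical illustrations.

```python
# Minimal sketch of the Intent Precision Gap test.
# Assumption: label_to_requests was built from a transcript review;
# labels and request names are illustrative, not a real taxonomy.

MAX_DISTINCT_REQUESTS = 3  # more than three materially different requests = too broad


def find_overbroad_labels(label_to_requests: dict[str, set[str]]) -> list[str]:
    """Return labels that map to more than three distinct customer requests."""
    return sorted(
        label
        for label, requests in label_to_requests.items()
        if len(requests) > MAX_DISTINCT_REQUESTS
    )


label_to_requests = {
    "billing": {"refund request", "invoice confusion", "failed card update", "procurement hold"},
    "sso lockout": {"sso lockout"},
    "technical issue": {"login failure", "api error", "slow dashboard", "export bug", "sso lockout"},
}

print(find_overbroad_labels(label_to_requests))  # ['billing', 'technical issue']
```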

Manual reviews make this worse. Not because people are careless. Because humans normalize ambiguity. One lead sees “can’t log in” as access. Another tags it as authentication. A third sends it to product because the customer mentioned SSO. Fair point. Humans catch nuance. But once three people make three different routing choices on the same type of ticket, your queue logic is already drifting.

Sampling gives you fake confidence

Sampling doesn’t fix intent-based ticket routing. It hides routing risk. If you review 100 tickets out of 10,000 and conclude your rules are “mostly fine,” you’re making a queue decision with partial eyesight.

The math gets ugly fast. A niche but expensive intent can live inside 2% of conversations. If those are upgrade-blocking bugs, compliance questions, or churn-risk billing complaints, missing them means the routing model looks healthy while the business takes the hit. The sample says stable. The escalations say otherwise.
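To make the sampling risk concrete, here is the arithmetic for the numbers above: 100 tickets reviewed out of 10,000, with an intent present in 2% of conversations (200 tickets). The hypergeometric formula gives the chance that a random sample contains none of them. This is only a sketch of the math, using Python’s standard `math.comb`.

```python
from math import comb

population = 10_000   # tickets in the period
sample_size = 100     # tickets a reviewer actually reads
prevalence = 0.02     # share of tickets carrying the niche intent
intent_count = round(population * prevalence)  # 200 tickets

# Expected number of intent tickets that land in the sample
expected_in_sample = sample_size * intent_count / population

# Hypergeometric probability that the sample contains zero intent tickets:
# all 100 reviewed tickets come from the 9,800 that lack the intent.
p_miss_entirely = comb(population - intent_count, sample_size) / comb(population, sample_size)

print(f"expected in sample: {expected_in_sample:.1f}")
print(f"chance of seeing none: {p_miss_entirely:.1%}")
```

That works out to about two expected examples and roughly a 13% chance of seeing none at all. Even when the intent does show up, two tickets are far too few to judge whether routing handles it well.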

This is where a lot of teams get stuck in score-watching. CSAT dips. FRT slips. Someone says agents need better macros. Maybe. But if ticket routing sends the wrong issue to the wrong queue, every downstream metric gets contaminated. You’re measuring the effects of bad classification and calling it a service problem.

It’s exhausting, honestly. Your agents feel it first. Customers repeat themselves, managers pull tickets out of the wrong queue, and leadership gets a weekly report full of numbers nobody fully trusts. That’s not a workflow issue anymore. That’s a signal quality issue. So the next question is obvious: what does a routing system look like when intent is defined properly?

The Best Intent-Based Ticket Routing Starts With a Taxonomy, Not an Automation Rule

Intent-based ticket routing works when you classify customer requests with enough precision to drive a real operational decision. The starting point is a taxonomy that reflects business meaning, not whatever labels happened to accumulate inside the helpdesk over time.

A lot of teams skip this because taxonomy sounds academic. It isn’t. It’s operating leverage. If your intent model is loose, your routing engine just makes bad decisions faster.

Start with the Intent-to-Action Matrix

The cleanest way to build intent-based ticket routing is with an Intent-to-Action Matrix. Four columns: intent, destination queue, SLA tier, fallback path. That’s it. If an intent doesn’t change at least one of the three operational columns (queue, SLA tier, or fallback path), it probably doesn’t deserve to be its own routing class.

Here’s the rule I like: if two intents go to the same queue, same priority, and same handling path 90% of the time, merge them. If they change owner, urgency, or workflow, split them. That threshold keeps the taxonomy useful instead of turning it into a theory project.
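The 90% merge rule can be checked against historical handling data. Below is a minimal sketch. It assumes each resolved ticket records its final (queue, priority, handling path) outcome. It reads “same outcome 90% of the time” as the overlap between two intents’ outcome distributions, which is one reasonable interpretation rather than a fixed definition. The `outcome_overlap` and `should_merge` names and the sample data are hypothetical.

```python
from collections import Counter

# One handling outcome: (queue, priority, handling path).
Outcome = tuple[str, str, str]

MERGE_THRESHOLD = 0.90  # "same handling 90% of the time"


def outcome_overlap(a: list[Outcome], b: list[Outcome]) -> float:
    """Share of handling outcomes two intents have in common (histogram intersection)."""
    if not a or not b:
        return 0.0
    dist_a, dist_b = Counter(a), Counter(b)
    return sum(
        min(dist_a[o] / len(a), dist_b[o] / len(b))
        for o in dist_a.keys() & dist_b.keys()
    )


def should_merge(a: list[Outcome], b: list[Outcome]) -> bool:
    """Merge when two intents share queue, priority, and path at least 90% of the time."""
    return outcome_overlap(a, b) >= MERGE_THRESHOLD


# Illustrative history: refund requests vs. a proposed "partial refund" intent.
refund = [("billing", "p2", "refund_flow")] * 19 + [("billing", "p1", "refund_flow")]
partial_refund = [("billing", "p2", "refund_flow")] * 20
invoice = [("billing", "p3", "billing_ops")] * 20

print(should_merge(refund, partial_refund))  # True: overlap is 0.95
print(should_merge(refund, invoice))         # False: no shared outcome
```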

For example:

- Refund request: Billing queue, 4-hour SLA, fallback to general billing

- Invoice confusion: Billing queue, 24-hour SLA, fallback to billing ops

- Card declined at renewal: Retention or billing priority queue, 2-hour SLA, fallback to urgent billing

- SSO lockout: Technical access queue, 1-hour SLA, fallback to authentication specialists

All four might get lumped into “billing” or “account issue” in a weak setup. That’s the mistake. Different intent should mean different action. If it doesn’t, your intent-based routing model is just relabeling noise.
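The matrix can live as plain configuration. Below is a minimal sketch that uses the four example rows. The intent keys, queue names, the general-triage default, and the `lookup_action` helper are illustrative assumptions, not a specific helpdesk’s schema. The card-declined row names two possible queues in the prose, so the sketch picks the billing priority queue.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class RoutingAction:
    queue: str
    sla_hours: int
    fallback: str


# Intent-to-Action Matrix: intent -> destination queue, SLA tier, fallback path.
# Intent and queue names are illustrative placeholders.
INTENT_TO_ACTION = {
    "refund_request": RoutingAction("billing", 4, "general_billing"),
    "invoice_confusion": RoutingAction("billing", 24, "billing_ops"),
    "card_declined_at_renewal": RoutingAction("billing_priority", 2, "urgent_billing"),
    "sso_lockout": RoutingAction("technical_access", 1, "authentication_specialists"),
}

# Hypothetical catch-all for intents the matrix does not know yet.
GENERAL_TRIAGE = RoutingAction("general_triage", 24, "general_triage")


def lookup_action(intent: str) -> RoutingAction:
    """Return the routing action for an intent, or the general triage default."""
    return INTENT_TO_ACTION.get(intent, GENERAL_TRIAGE)


print(lookup_action("sso_lockout"))
print(lookup_action("something_new").queue)
```

Refund requests and invoice confusion share a queue but carry different SLAs and fallbacks. That difference is exactly why they stay separate classes.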

Worth noting, this is also where business language matters more than generic AI labels. An off-the-shelf model might understand sentiment. Fine. But it won’t naturally know the difference between “passenger comfort issue,” “refund eligibility dispute,” or “plan downgrade due to procurement delay” unless you define those concepts in your own language.

Use the Raw-to-Canonical model instead of forcing one perfect tag

Most teams chase a perfect top-down taxonomy too early. That usually breaks. Customers don’t talk in clean categories. They ramble, stack issues, change subjects mid-thread, and use the wrong words for the right problem. So if you force every ticket into a fixed menu on day one, you miss patterns before you even start.

The better model is Raw-to-Canonical. Raw tags catch the messy language coming out of the conversations. Canonical tags normalize that mess into reporting categories your team can use. Same thing with routing. Let the system detect the granular issue first, then map it to the cleaner operational class.

That’s why hybrid tagging matters. AI can surface emerging patterns humans wouldn’t think to predefine. Humans can then group them into canonical categories that match how the business makes decisions. You need both. Pure manual tagging doesn’t scale. Pure black-box tagging doesn’t earn trust.

I’d argue this is the hidden hinge in good intent-based ticket routing. Not the model itself. The taxonomy memory. If your system learns that “failed 3DS challenge,” “card verification loop,” and “payment auth failure” all belong under a canonical renewal-payment problem, your routing gets sharper over time instead of noisier.
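Below is a minimal sketch of the Raw-to-Canonical idea. A lookup maps granular raw tags onto canonical categories, and unmapped raw tags stay visible instead of being discarded. The tag names, the `RAW_TO_CANONICAL` table, and both helpers are illustrative assumptions. In practice, the team would maintain the mapping and extend it as reviewers confirm new raw patterns.

```python
from collections import defaultdict

# Reviewed mappings from raw, conversation-level tags to canonical categories.
RAW_TO_CANONICAL = {
    "failed 3ds challenge": "renewal_payment_failure",
    "card verification loop": "renewal_payment_failure",
    "payment auth failure": "renewal_payment_failure",
    "refund not received": "refund_request",
}


def canonicalize(raw_tags: list[str]) -> tuple[dict[str, list[str]], list[str]]:
    """Group raw tags under canonical categories; return unmapped tags separately."""
    grouped: dict[str, list[str]] = defaultdict(list)
    unmapped: list[str] = []
    for tag in raw_tags:
        key = tag.strip().lower()
        canonical = RAW_TO_CANONICAL.get(key)
        if canonical is None:
            unmapped.append(key)  # keep as a raw pattern for human review
        else:
            grouped[canonical].append(key)
    return dict(grouped), unmapped


def learn_mapping(raw_tag: str, canonical: str) -> None:
    """Record a reviewer's decision so future tickets map automatically."""
    RAW_TO_CANONICAL[raw_tag.strip().lower()] = canonical


grouped, unmapped = canonicalize(["Failed 3DS challenge", "card verification loop", "wallet token expired"])
print(grouped)   # {'renewal_payment_failure': ['failed 3ds challenge', 'card verification loop']}
print(unmapped)  # ['wallet token expired']
learn_mapping("wallet token expired", "renewal_payment_failure")
```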

Diagnose maturity before you automate

Before you turn on automation, you need to know what kind of mess you actually have. Use this three-level routing maturity check:

- Level 1: Queue-first routing
  - Tickets route by channel, form, or broad keywords
  - Misroutes are corrected manually
  - Reporting focuses on volumes and SLA misses

- Level 2: Tag-assisted routing
  - Teams use tags or macros to improve assignment
  - Some recurring intents are recognized
  - Edge cases still bounce between queues

- Level 3: Intent-based routing
  - Tickets are classified by business-specific intent
  - Confidence thresholds determine auto-route vs fallback
  - Routing logic and reporting both trace back to conversation data

If you’re stuck in Level 1, don’t start with model tuning. Start by auditing your top 200 misrouted tickets. If you’re in Level 2, look for tags that blur distinct actions together. If you’re in Level 3, focus on confidence thresholds and measurement drift.

That distinction matters. A team with 3,000 monthly tickets can brute-force some manual cleanup. At 30,000, that same habit becomes a staffing tax. So once intent is defined, the next thing that decides whether routing works is confidence. Not every ticket should be auto-routed. Some should be slowed down on purpose.

Confidence Thresholds and Safe Fallbacks Prevent Routing Regressions

Intent-based ticket routing gets safer when you stop forcing every ticket into an automated decision. The practical move is to use confidence thresholds, then send lower-confidence cases to a fallback queue or human review path.

This sounds obvious. It rarely gets implemented well. Most teams either trust the model too much or don’t trust it at all. Both create waste.

Pick thresholds by cost of error, not by optimism

A good threshold is not “whatever feels accurate.” It’s the point where the cost of a bad auto-route becomes higher than the cost of manual review. Different intents need different thresholds because the downside is different.

Use the 3-Tier Threshold Rule:

- High-risk intents: require 0.85 confidence or higher. Use this for churn-risk complaints, legal issues, VIP escalations, or anything that can materially damage revenue or trust.

- Medium-risk intents: set the threshold between 0.70 and 0.84. Use this for common operational flows where an occasional fallback is cheaper than a wrong queue.

- Low-risk intents: set the threshold between 0.60 and 0.69. Use this for broad support handling where the consequence of a miss is small and easy to recover from.

If a ticket scores below threshold, don’t guess. Route it to a safe fallback. That fallback could be a general triage queue, a specialist review lane, or a human-assigned routing team. The point is simple: uncertainty is a state, not a failure.
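The 3-Tier Threshold Rule comes down to a small lookup plus one comparison. Below is a minimal sketch. It assumes the classifier returns an intent with a confidence score between 0 and 1, and that each intent has already been assigned a risk tier. The cutoffs picked inside the medium and low ranges (0.75 and 0.65), the tier assignments, the fallback queue name, and `decide_route` are all assumptions.

```python
# Minimum confidence needed to auto-route, per risk tier.
# 0.85 is the high-risk floor; 0.75 and 0.65 are picks inside the stated ranges.
TIER_THRESHOLDS = {"high": 0.85, "medium": 0.75, "low": 0.65}

# Illustrative tier assignments; each team would set its own.
INTENT_RISK = {
    "churn_risk_complaint": "high",
    "invoice_confusion": "medium",
    "how_to_question": "low",
}

FALLBACK_QUEUE = "general_triage"  # hypothetical safe fallback


def decide_route(intent: str, confidence: float, queue: str) -> tuple[str, str]:
    """Return (destination, reason). Unknown intents are treated as high risk."""
    tier = INTENT_RISK.get(intent, "high")
    threshold = TIER_THRESHOLDS[tier]
    if confidence >= threshold:
        return queue, f"auto: {confidence:.2f} >= {threshold:.2f} ({tier} risk)"
    return FALLBACK_QUEUE, f"fallback: {confidence:.2f} < {threshold:.2f} ({tier} risk)"


print(decide_route("invoice_confusion", 0.78, "billing"))
print(decide_route("churn_risk_complaint", 0.78, "retention"))
```

The sketch defaults unknown intents to the strictest tier on purpose. A new or unmapped intent is exactly where a wrong guess is most likely. The returned reason string doubles as a reason code for the fallback queue.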

Same thing with multi-intent tickets. One customer writes in about login failure, billing confusion, and missed onboarding expectations in a single thread. If your model insists on one winner with no confidence guardrail, you’ll create false precision. Better to send that case to a mixed-issue fallback path than to misroute it with fake certainty.
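Multi-intent detection can reuse the classifier’s scores. Below is a minimal sketch, assuming the classifier returns one score per candidate intent. If more than one intent clears a presence floor, or the top two are too close to call, the ticket goes to a mixed-issue fallback. The 0.50 floor, the 0.10 margin, the queue name, and `single_intent_or_none` are illustrative assumptions to tune against your own data.

```python
from typing import Optional

PRESENCE_FLOOR = 0.50   # assumed score at which an intent counts as present
MIN_MARGIN = 0.10       # assumed lead the top intent needs over the runner-up


def single_intent_or_none(scores: dict[str, float]) -> Optional[str]:
    """Return the one clear intent, or None to send the ticket to the mixed-issue fallback."""
    if not scores:
        return None
    ranked = sorted(scores.items(), key=lambda kv: kv[1], reverse=True)
    present = [intent for intent, score in ranked if score >= PRESENCE_FLOOR]
    if len(present) > 1:
        return None  # several intents clearly present in one thread
    if len(ranked) > 1 and ranked[0][1] - ranked[1][1] < MIN_MARGIN:
        return None  # no clear winner between the top two
    return ranked[0][0]  # per-tier confidence thresholds still apply to this winner


MIXED_ISSUE_QUEUE = "mixed_issue_review"  # hypothetical fallback lane

scores = {"login_failure": 0.62, "invoice_confusion": 0.55, "onboarding_gap": 0.41}
print(single_intent_or_none(scores) or MIXED_ISSUE_QUEUE)
```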

Design fallback paths that protect speed

A fallback queue only works if it’s faster than a misroute. That sounds dumb to even say. But I’ve seen teams build “manual review” as a dead end, then act surprised when FRT gets worse.

Use the 15-Minute Fallback Test. If fallback tickets sit untouched for more than 15 minutes during staffed hours, the path is broken. At that point, you’re not creating safety. You’re just delaying assignment.
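The 15-Minute Fallback Test is easy to run as a periodic check. Below is a minimal sketch with several assumptions. Each held ticket records when it entered the fallback queue and when, if ever, someone first touched it. Staffed hours are simplified to a single 8:00 to 18:00 weekday window, so overnight waiting time is not counted. The field names and `stale_fallback_tickets` are hypothetical.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta
from typing import Optional

MAX_WAIT = timedelta(minutes=15)
STAFFED_START_HOUR, STAFFED_END_HOUR = 8, 18  # assumed weekday staffing window


@dataclass
class FallbackTicket:
    ticket_id: str
    entered_fallback_at: datetime
    first_touched_at: Optional[datetime] = None


def is_staffed(moment: datetime) -> bool:
    """Monday to Friday, between the assumed start and end hours."""
    return moment.weekday() < 5 and STAFFED_START_HOUR <= moment.hour < STAFFED_END_HOUR


def stale_fallback_tickets(tickets: list[FallbackTicket], now: datetime) -> list[str]:
    """IDs of untouched tickets that have waited more than 15 staffed minutes today."""
    if not is_staffed(now):
        return []
    shift_start = now.replace(hour=STAFFED_START_HOUR, minute=0, second=0, microsecond=0)
    stale = []
    for t in tickets:
        if t.first_touched_at is not None:
            continue
        # Only count waiting time since the current shift opened.
        waited = now - max(t.entered_fallback_at, shift_start)
        if waited > MAX_WAIT:
            stale.append(t.ticket_id)
    return stale


now = datetime(2024, 5, 6, 10, 0)  # a Monday, mid-shift
queue = [
    FallbackTicket("A", datetime(2024, 5, 6, 9, 30)),
    FallbackTicket("B", datetime(2024, 5, 6, 9, 50)),
    FallbackTicket("C", datetime(2024, 5, 6, 9, 0), datetime(2024, 5, 6, 9, 5)),
    FallbackTicket("D", datetime(2024, 5, 5, 23, 0)),
]
print(stale_fallback_tickets(queue, now))  # ['A', 'D']
```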

A workable fallback design usually includes:

- one clearly owned review queue

- visible reason codes for why the ticket was held

- a short list of specialist escalation paths

- weekly review of fallback volume by intent cluster

And yes, you should watch fallback rate. If more than 20% of tickets are landing there after the first few weeks, your taxonomy is too fuzzy or your thresholds are too conservative. If fallback is under 3% but misroutes remain high, you’re trusting the model too much.
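Those two bands can be encoded as a weekly health check to run once the first few weeks have passed. Below is a minimal sketch. The 20% and 3% cutoffs come from the paragraph above. The 10% cutoff for “misroutes remain high” and the `fallback_health` name are assumptions; replace them with your own baseline.

```python
HIGH_FALLBACK = 0.20   # above this: taxonomy too fuzzy or thresholds too conservative
LOW_FALLBACK = 0.03    # below this with high misroutes: trusting the model too much
HIGH_MISROUTE = 0.10   # assumed cutoff for "misroutes remain high"


def fallback_health(total: int, fallback: int, misrouted: int) -> str:
    """Classify a week of routing by fallback rate and misroute rate."""
    if total == 0:
        return "no data"
    fallback_rate = fallback / total
    misroute_rate = misrouted / total
    if fallback_rate > HIGH_FALLBACK:
        return "too cautious: taxonomy too fuzzy or thresholds too conservative"
    if fallback_rate < LOW_FALLBACK and misroute_rate > HIGH_MISROUTE:
        return "over-trusting: misroutes stay high while little reaches fallback"
    return "within expected range"


print(fallback_health(total=1_000, fallback=250, misrouted=20))
```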

If you want a deeper look at how support conversation data becomes structured enough for this kind of analysis, see how Revelir AI works. That’s where a lot of teams finally see why their routing logic has been built on thin inputs.

Instrument every routing decision back to the transcript

You can’t improve intent-based ticket routing if the routing logic is a black box. Every decision needs a trace. What intent was assigned. What confidence score was used. What fallback rule triggered. What phrases in the transcript supported the call.

That traceability rule matters for two reasons. First, it helps ops teams debug mistakes fast. Second, it gives leadership something better than “the model thought so.” In product reviews and support reviews, that difference is huge.

Use this if-then rule: if a routing decision can’t be explained from the ticket text in under 60 seconds, don’t let it control a critical workflow yet. Keep it in observation mode until you can validate it cleanly.
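Below is a minimal sketch of what one trace record might hold, assuming the classifier can return the phrases it relied on. The field names and the `is_explainable` check are assumptions. The check requires every cited phrase to appear verbatim in the transcript, and a real check might need fuzzier matching. Traces that fail it are natural candidates for observation mode.

```python
from dataclasses import dataclass, field
from typing import Optional


@dataclass
class RoutingTrace:
    ticket_id: str
    intent: str
    confidence: float
    threshold: float
    fallback_rule: Optional[str]  # None when the ticket was auto-routed
    evidence_phrases: list[str] = field(default_factory=list)


def is_explainable(trace: RoutingTrace, transcript: str) -> bool:
    """True if the trace cites at least one phrase and every phrase appears in the transcript."""
    text = transcript.lower()
    return bool(trace.evidence_phrases) and all(
        phrase.lower() in text for phrase in trace.evidence_phrases
    )


transcript = "Hi, our SSO login keeps failing since this morning. Nobody on the team can get in."
trace = RoutingTrace("T-1042", "sso_lockout", 0.91, 0.85, None, ["SSO login keeps failing"])
print(is_explainable(trace, transcript))
```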

Critics will say this adds complexity. They’re not entirely wrong. It does. But hidden complexity is worse than visible complexity. At least visible complexity can be improved. And once you can see the decision trail, you can finally run a rollout like an operator instead of a gambler.

How to Roll Out Intent-Based Ticket Routing Without Blowing Up Operations

Intent-based ticket routing works best when you roll it out in stages, with clear control groups and hard stop rules. The goal is not to automate everything fast. The goal is to improve routing accuracy without creating a fresh mess for agents.

Honestly, this is where good projects die. Not in taxonomy. Not in modeling. In rollout arrogance.

Start with one high-volume queue and one painful use case

The best first pilot is narrow and expensive. Pick a queue where misroutes already cost real time, then isolate one intent family inside it. Billing is often a good place. Account access can work too. Avoid rare edge cases at first. You need enough volume to judge pattern quality within two weeks.

Use the 10-20-70 rollout split:

- 10% holdout stays on current routing logic

- 20% shadow mode gets classified but not auto-routed

- 70% test pool follows the new routing path with thresholds and fallback rules

That structure gives you three things at once. A clean baseline. A safe observation layer. And enough live traffic to learn quickly. If you can’t support all three, your rollout is too broad.
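One way to implement the 10-20-70 split is deterministic bucketing on the ticket ID. With this approach, a ticket always lands in the same arm even if it is re-evaluated. Below is a minimal sketch using SHA-256 from Python’s standard `hashlib`. The arm names, the salt, and `assign_arm` are hypothetical.

```python
import hashlib
from collections import Counter


def assign_arm(ticket_id: str, salt: str = "routing-pilot-1") -> str:
    """Map a ticket ID to holdout (10%), shadow (20%), or test (70%)."""
    digest = hashlib.sha256(f"{salt}:{ticket_id}".encode("utf-8")).digest()
    bucket = int.from_bytes(digest[:8], "big") % 100  # stable value in 0..99
    if bucket < 10:
        return "holdout"  # stays on current routing logic
    if bucket < 30:
        return "shadow"   # classified and logged, not auto-routed
    return "test"         # new routing with thresholds and fallbacks


counts = Counter(assign_arm(f"T-{i}") for i in range(10_000))
print(counts)
```

Salting the hash with a pilot name means a second pilot reshuffles tickets instead of reusing the same holdout group.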

A real-world day-in-the-life version of this looks messy. Support ops notices 14% of “billing” tickets get reassigned at least once. Agents say half of them aren’t really billing questions at all. Product thinks checkout is broken. Finance thinks it’s customer education. After two weeks of transcript review, the team splits the queue into refund requests, invoice confusion, renewal payment failures, and contract billing questions. Suddenly the debate gets quieter because the classes are doing actual work.

Measure four numbers, not twenty

Most teams overbuild reporting in week one. Don’t. Track four numbers first:

- Misroute rate

- Average time to first response

- Fallback rate

- Reassignment count per ticket

That’s enough to know whether the system is getting better or worse. If misroutes drop but fallback spikes above 25%, the model may be too cautious. If FRT improves but reassignment holds flat, you may have sped up the wrong first touch. If reassignments drop and FRT drops, now you’ve got something real.
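The four numbers can come straight from ticket records. Below is a minimal sketch. It assumes each record carries a misroute flag, a fallback flag, a reassignment count, and first-response minutes, and that each batch is non-empty. The field names and helpers are hypothetical. The interpretation rules mirror the paragraph above, including the 25% fallback cutoff, and treat “reassignment holds flat” as “did not go down”.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class Ticket:
    misrouted: bool
    went_to_fallback: bool
    reassignments: int
    first_response_minutes: float


def routing_scorecard(tickets: list[Ticket]) -> dict[str, float]:
    """The four tracking numbers for a non-empty batch of tickets."""
    n = len(tickets)
    return {
        "misroute_rate": sum(t.misrouted for t in tickets) / n,
        "avg_frt_minutes": mean(t.first_response_minutes for t in tickets),
        "fallback_rate": sum(t.went_to_fallback for t in tickets) / n,
        "reassignments_per_ticket": mean(t.reassignments for t in tickets),
    }


def interpret(baseline: dict[str, float], current: dict[str, float]) -> list[str]:
    notes = []
    if current["misroute_rate"] < baseline["misroute_rate"] and current["fallback_rate"] > 0.25:
        notes.append("misroutes down but fallback above 25%: model may be too cautious")
    if (current["avg_frt_minutes"] < baseline["avg_frt_minutes"]
            and current["reassignments_per_ticket"] >= baseline["reassignments_per_ticket"]):
        notes.append("FRT better but reassignments flat: wrong first touch may be faster")
    if (current["reassignments_per_ticket"] < baseline["reassignments_per_ticket"]
            and current["avg_frt_minutes"] < baseline["avg_frt_minutes"]):
        notes.append("reassignments and FRT both down: real improvement")
    return notes


baseline = routing_scorecard([Ticket(True, False, 2, 90), Ticket(False, False, 1, 60),
                              Ticket(False, False, 0, 30), Ticket(True, False, 1, 120)])
current = routing_scorecard([Ticket(False, True, 0, 40), Ticket(False, False, 0, 45),
                             Ticket(True, False, 1, 50), Ticket(False, False, 0, 25)])
print(interpret(baseline, current))
```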

The target outcome is not subtle. A solid implementation can cut misroutes by 40% to 60% and reduce average time-to-first-response by 20% to 30% inside 8 to 12 weeks. But only if you resist the temptation to call the project done after the first decent dashboard.

Use one weekly review cadence:

- Monday: check routing exceptions

- Wednesday: review fallback clusters

- Friday: inspect transcript-backed misses and taxonomy updates

That rhythm matters more than a prettier dashboard. Nobody's checking the taxonomy often enough in most teams. That’s usually the drift point.

Update taxonomy from evidence, not internal politics

This is the part people hate. Taxonomy governance. Because the second routing starts to matter, everybody wants their own category. Product wants feature buckets. Support wants handling buckets. Leadership wants summary buckets. All valid. None of them should control the system alone.

Use the Canonical Governance Rule: only create or split an intent category if it changes routing path, SLA, owner, or reporting action for at least 5% of the affected ticket volume. Below that, hold it as a raw pattern until the evidence grows.
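The Canonical Governance Rule reduces to a single ratio check. Below is a minimal sketch. It assumes someone has counted how many tickets within the affected volume would get a different routing path, SLA, owner, or reporting action if the new category existed. `should_create_category` and the example counts are hypothetical.

```python
MIN_SHARE = 0.05  # at least 5% of affected volume must change outcome


def should_create_category(tickets_with_changed_action: int, affected_volume: int) -> bool:
    """Promote a raw pattern to a canonical intent only when it changes action for >= 5% of volume."""
    if affected_volume <= 0:
        return False
    return tickets_with_changed_action / affected_volume >= MIN_SHARE


# e.g. a proposed "procurement hold" split inside 4,000 billing tickets
print(should_create_category(260, 4_000))  # 6.5% -> True
print(should_create_category(120, 4_000))  # 3.0% -> False, keep as a raw pattern
```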

That rule prevents taxonomy sprawl. It also keeps you from building a routing tree around the loudest stakeholder instead of the strongest pattern.

Not everyone agrees with that. Some teams want very detailed taxonomies from day one, and I get the logic. But in practice, early over-detail creates fragile routing. Start tighter. Let evidence earn complexity.

How Revelir AI Makes Support Conversation Data Measurable and Defensible

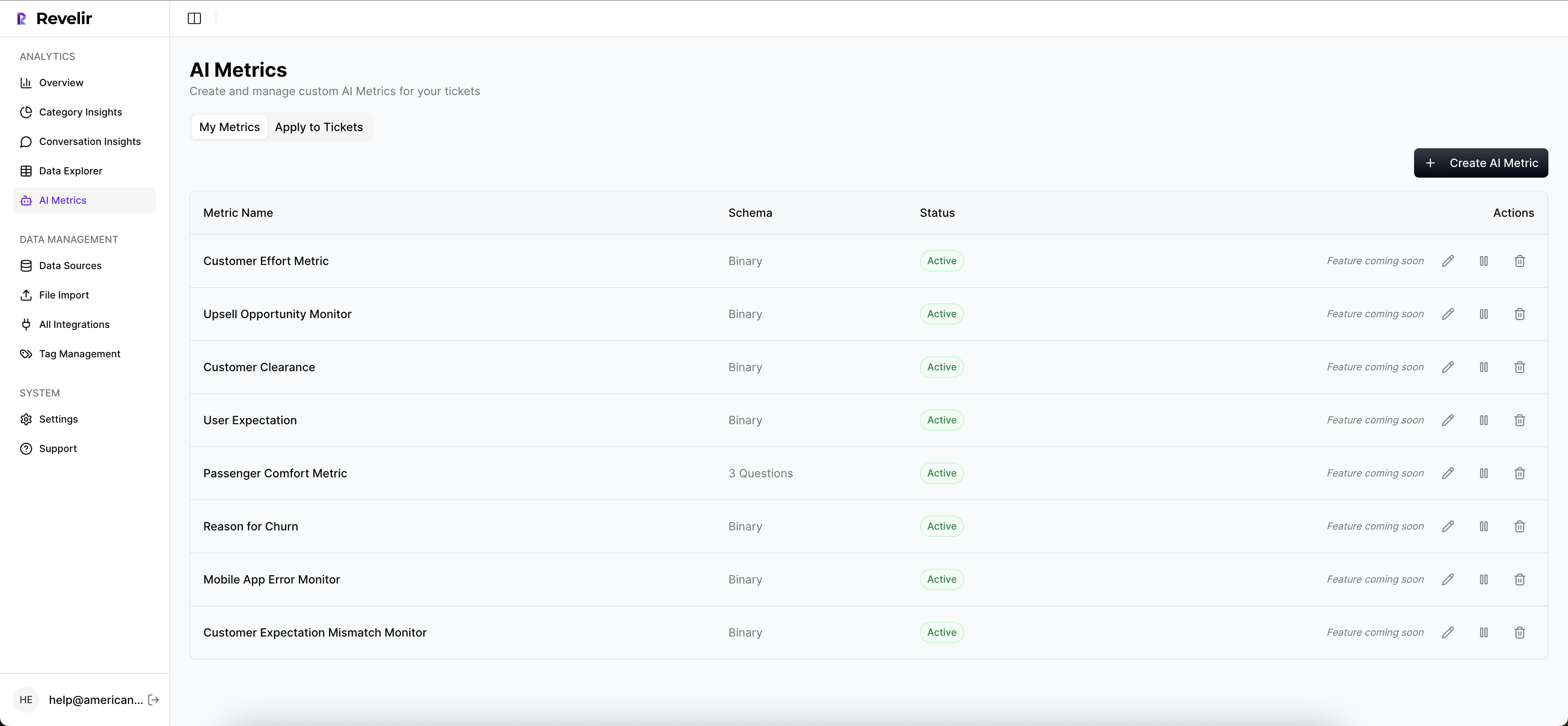

Revelir AI gives teams a way to structure support conversation data so it can be measured, audited, and explored with real evidence. Instead of relying on sampled reviews or generic labels, Revelir AI processes 100% of ingested tickets and turns messy conversation text into tags, drivers, and AI metrics you can actually use.

Custom metrics and hybrid tagging make analysis match the business

Revelir AI doesn’t lock you into a one-size-fits-all model. With Custom AI Metrics, teams can define business-specific classifiers using their own questions and value options. Concepts like renewal risk, invoice confusion, onboarding blocker, or partner escalation can be stored as structured columns and used in analysis.

That works well with Revelir AI’s Hybrid Tagging System. AI-generated raw tags surface the messy, granular patterns showing up in conversations. Canonical tags give teams a cleaner reporting structure aligned to internal language. Users can map raw tags to canonical tags, refine taxonomy over time, and Revelir AI learns those mappings for future tickets.

For support leaders, that’s a meaningful shift. You can analyze tickets in the language your teams and stakeholders already use, without losing the detail that shows up in real conversations.

Full coverage and traceability make decisions easier to trust

Revelir AI also removes the sampling problem that usually undermines analysis. With Full-Coverage Processing, it analyzes 100% of ingested tickets, whether they come through Zendesk Integration or CSV Ingestion for a pilot or backfill. That gives you a complete view of what’s happening in support conversations, not a partial read shaped by whoever had time to review tickets that week.

Then there’s Evidence-Backed Traceability. Every aggregate number links back to the source conversations and quotes. In Data Explorer, teams can filter and inspect tickets across sentiment, churn risk, effort, tags, drivers, and custom metrics. In Analyze Data, they can summarize those metrics by dimensions like Driver or Canonical Tag and connect patterns back to underlying tickets. If you need to validate a pattern or pull examples for reporting, Conversation Insights lets you drill into full transcripts, summaries, tags, drivers, and AI metrics.

That’s what makes the process defensible. Patterns become visible and tied to actual conversations. If you want to put that kind of structure on top of your existing support data, get started with Revelir AI.

Where Better Routing Actually Comes From

Intent-based ticket routing is not really about automation. It’s about classification quality, confidence control, and evidence. When those three pieces are in place, routing gets faster and cleaner. When they aren’t, all you’ve done is automate confusion.

So start there. Tight intent definitions. Confidence-based fallbacks. Full transcript traceability. That’s the path to cutting misroutes by 40% to 60% and reducing time-to-first-response by 20% to 30% in 8 to 12 weeks.

Frequently Asked Questions

How do I ensure my ticket routing is accurate?

To improve the accuracy of your ticket routing, start by refining your intent definitions. Use Revelir AI's Hybrid Tagging System to create both raw and canonical tags, which help surface granular patterns in conversations. This way, you can ensure that each ticket is classified correctly based on your business's specific language. Additionally, leverage the Evidence-Backed Traceability feature to link routing decisions back to the original ticket text, allowing you to validate and adjust your routing logic as needed.

What if my team struggles with misrouted tickets?

If misrouted tickets are a common issue, consider implementing confidence thresholds in your routing process. Use Revelir AI to set different thresholds based on the risk level of the intents. For example, high-risk intents may require a confidence score of 0.85 or higher before auto-routing. For those scoring below the threshold, route them to a fallback queue for manual review. This approach helps reduce misroutes and ensures that tickets are handled appropriately based on their urgency.

Can I analyze ticket data without manual tagging?

Yes, you can analyze ticket data without manual tagging by using Revelir AI's Full-Coverage Processing feature. This allows you to process 100% of your ingested tickets automatically, eliminating the need for upfront manual tagging. You can then use the Data Explorer to filter and inspect the structured data, including sentiment, churn risk, and custom metrics, providing you with a comprehensive view of your support conversations.

When should I update my routing taxonomy?

You should update your routing taxonomy when you notice patterns in misrouted tickets or when new types of customer inquiries emerge. Use the Canonical Governance Rule to guide your updates: only create or split an intent category if it changes the routing path, SLA, owner, or reporting action for at least 5% of the affected ticket volume. This helps maintain a clean and effective taxonomy that accurately reflects your customers' needs.