42% of support leaders say proving CX impact to product and exec teams is a major challenge, according to Gartner research on customer service analytics. If you spent time this week trying to explain a bad trend with a screenshot, a gut feeling, and three cherry-picked tickets, you’ve already felt the real problem.

It’s usually not a data problem. It’s an evidence problem. Same thing with identifying key drivers of customer issues: most teams think they need a better dashboard, but what they really need is a way to connect the metric to the exact conversations behind it.

If you're trying to get past score-watching and actually understand what’s driving customer pain, Learn More.

Key Takeaways:

- Scores tell you that something changed. Drivers tell you why it changed.

- If you’re identifying key drivers of support issues from samples, you’re probably missing the pattern that matters most.

- A useful driver system needs three layers: granular signals, grouped themes, and ticket-level proof.

- 100% conversation coverage matters because rare but costly issues often hide outside your sample.

- Traceability changes the meeting dynamic. People stop debating anecdotes and start looking at evidence.

- The fastest path is usually to analyze support data where it already lives, then layer structure on top.

Why Identifying Key Drivers of Customer Issues Usually Breaks

Identifying key drivers of customer issues breaks when teams try to extract strategy from partial evidence. The issue isn’t lack of effort. It’s that support data lives in free text, while leadership wants neat, defensible answers.

The dashboard says “down.” Nobody knows why.

At 8:14 AM on Monday, a support director is in Google Slides pulling screenshots from Zendesk Explore before the weekly CX review. CSAT dipped 6 points, ticket volume jumped 18%, and she has maybe four tickets open in tabs that seem representative enough to defend the story. Product asks whether the issue is onboarding or billing. She can’t prove either one in the room, so the meeting turns into argument by confidence level. That’s usually the moment support loses the plot and some credibility with it.

That meeting falls apart for a simple reason: the chart isn’t connected to the cause. A score can tell you what happened. It can’t do the last mile of identifying key drivers of the issue unless the underlying conversations have been turned into something you can group, inspect, and validate. Otherwise, you’re doing executive theater with cleaner colors.

Manual review sounds responsible here. And fair enough, manual review does catch nuance. But once volume passes roughly 500 tickets a month, the math gets ugly fast. Even a 10% sample of 500 tickets at 3 minutes each is 2.5 hours of reading for a partial view, and that cost grows with every jump in volume. Partial views create debate, not decisions.
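To make the review math concrete, here is a minimal sketch of the arithmetic. The function name and the 2,000-ticket scenario are illustrative assumptions; the 10% sample and 3 minutes per ticket come from the paragraph above.

```python
def review_hours(tickets_per_month: int, sample_percent: int, minutes_per_ticket: float) -> float:
    """Hours spent reading a sample of one month's tickets."""
    sampled_tickets = tickets_per_month * sample_percent / 100
    return sampled_tickets * minutes_per_ticket / 60


# 10% sample at 3 minutes per ticket, at two hypothetical volumes.
print(review_hours(500, 10, 3))   # 2.5
print(review_hours(2000, 10, 3))  # 10.0
```

The cost is linear in volume, which is the uncomfortable part: the sample stays partial while the hours keep climbing.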

Sampling feels disciplined. It usually creates false certainty.

Let’s pretend your team reviews 100 tickets out of 2,000. You find a cluster of login complaints, so that becomes the story for the month. Nobody’s checking the 1,900 tickets you didn’t read, where maybe the real spike was billing confusion among enterprise accounts or onboarding friction among a newly launched segment.

That’s the Sample Trap. I’d call it the 10-80-10 rule. The first 10% of tickets often confirms what people already believe. The middle 80% hides the real distribution. The last 10% contains the weird, costly edge cases that turn into churn or escalations later. If you’re identifying key drivers of customer issues from the first bucket, you’ll optimize for the loudest pattern, not the most important one.
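The 100-out-of-2,000 scenario can be put in numbers. The sketch below assumes the sample is a true simple random sample (real samples are usually less random than that) and uses a made-up figure of 20 tickets for the costly issue. It computes the hypergeometric probability that the sample contains none of them.

```python
from math import comb


def prob_sample_misses(total: int, affected: int, sample: int) -> float:
    """Chance that a simple random sample contains zero affected tickets.

    This is the hypergeometric probability of drawing 0 affected tickets.
    """
    if not 0 <= affected <= total or not 0 <= sample <= total:
        raise ValueError("affected and sample must be between 0 and total")
    return comb(total - affected, sample) / comb(total, sample)


# Hypothetical month: 2,000 tickets, 100 reviewed, 20 about a costly billing issue.
p_zero = prob_sample_misses(total=2000, affected=20, sample=100)
print(f"Chance the sample contains no billing tickets at all: {p_zero:.0%}")
```

With these assumed numbers, the chance of reading zero of the tickets that matter comes out at roughly one in three. And even when one does land in the sample, a single ticket rarely looks like a pattern.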

There’s a case to be made for sampling when volume is tiny. If you’re handling 50 tickets a month, sure, you can read them all or close to it. That’s a fair exception. But once support becomes operationally meaningful, sampling stops being a shortcut and starts being a risk.

The human cost shows up before the reporting cost

What breaks first isn’t the dashboard. It’s trust. Support managers start writing cautious summaries in Slack because they know any bold claim will get challenged. Product learns to hear “we think” instead of “we know.” Finance stops treating support insights as operating evidence and starts treating them as anecdotes with branding.

There’s a surprisingly useful analogy here. Identifying key drivers of customer issues from partial ticket samples is like trying to diagnose a production outage from a single error log line. You might catch the symptom. You probably miss the dependency chain, the segment impact, and the thing that actually needs fixing. Support analysis works the same way: without full conversational coverage, root cause gets flattened into whatever story is easiest to repeat.

Worse, it trains the company to distrust support as a source of insight. That’s the real loss. If support can’t clearly show what’s breaking, who’s affected, and why it matters, then the next question becomes obvious: what would a better system for identifying key drivers of issues actually look like?

A Better System for Identifying Key Drivers of Support Problems

A better system for identifying key drivers of support problems turns raw conversations into structured evidence you can group, test, and trace back to the source. The goal isn’t more reporting. It’s a cleaner path from messy tickets to defensible decisions.

Start with the Driver Ladder, not the score

The first move is structural. You need a framework that connects conversation detail to leadership language. I use a simple one: the Driver Ladder.

At the bottom are raw signals. These are the exact issue fragments customers mention, things like refund request, billing fee confusion, or account lockout. In the middle are normalized categories that make reporting sane. At the top are drivers, broader themes like Billing, Onboarding, Performance, or Account Access. If you skip any layer, identifying key drivers of customer issues gets sloppy.

Why this works is pretty straightforward. Raw signals give you discoverability. Normalized categories give you consistency. Drivers give you something leadership can act on. Same thing with product reviews, roadmap debates, and retention analysis. Executives rarely need 200 tiny tags. They need a clear answer to what’s really driving the problem, plus enough evidence to trust it.

Before you build anything, run this quick diagnostic:

- Can you name your top five support drivers right now?

- Can you show the exact tickets behind each one in under five minutes?

- Can you separate symptom language from root-cause language?

- Can product see which driver is rising for which segment?

- Can you explain why a score moved without reading tickets live in the meeting?

If you answered no to three or more, your driver model isn’t mature enough yet. That’s not a moral failure. It’s just a signal that your current setup is describing noise, not structure.

Use the 70/20/10 rule for grouping issues

Contrast makes this easier to see: too few categories and everything becomes “bug,” too many and nobody can report cleanly. A better threshold is the 70/20/10 rule.

About 70% of tickets should roll into stable, recurring canonical categories. Around 20% should live in emerging raw patterns that you’re still watching. The last 10% should remain weird, rare, or one-off until they earn a category. If everything becomes a top-level driver, identifying key drivers of support issues turns into taxonomy theater.
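One rough way to check a taxonomy against the 70/20/10 rule is to label each ticket by bucket and compare the shares to the targets. In this sketch the bucket names, the 10-point tolerance, and the sample month are all assumptions for illustration, not fixed thresholds.

```python
from collections import Counter

# Target shares from the 70/20/10 rule; the bucket labels are hypothetical.
TARGETS = {"canonical": 0.70, "emerging": 0.20, "one_off": 0.10}


def bucket_shares(ticket_buckets: list[str]) -> dict[str, float]:
    """Share of tickets that fall in each bucket."""
    if not ticket_buckets:
        raise ValueError("need at least one ticket")
    counts = Counter(ticket_buckets)
    return {bucket: counts[bucket] / len(ticket_buckets) for bucket in TARGETS}


def drift(shares: dict[str, float], tolerance: float = 0.10) -> dict[str, float]:
    """Buckets whose share is off target by more than the assumed tolerance."""
    return {
        bucket: round(shares[bucket] - target, 2)
        for bucket, target in TARGETS.items()
        if abs(shares[bucket] - target) > tolerance
    }


# Made-up month: only half the tickets land in stable categories.
month = ["canonical"] * 50 + ["emerging"] * 35 + ["one_off"] * 15
print(drift(bucket_shares(month)))
```

A large negative drift on the canonical bucket usually means the taxonomy has not caught up with what customers are writing in about.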

Here’s a concrete example. Say customers keep mentioning “can’t reset password,” “magic link expired,” and “SSO loop.” Those are different raw signals. You may normalize them into a canonical tag like login access failure. Then you may roll that into the driver Account Access. Now you’ve got a system that preserves nuance without drowning the room in fragments.
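The login example maps neatly onto two lookup tables. This is a minimal sketch of the ladder, not a description of how any particular tool stores it. The billing row and the "fee confusion" canonical name are assumptions added so there is more than one driver to count.

```python
from collections import Counter

# Rung 1 -> rung 2: raw signals to canonical tags.
RAW_TO_CANONICAL = {
    "can't reset password": "login access failure",
    "magic link expired": "login access failure",
    "sso loop": "login access failure",
    "billing fee confusion": "fee confusion",  # hypothetical canonical name
}

# Rung 2 -> rung 3: canonical tags to drivers.
CANONICAL_TO_DRIVER = {
    "login access failure": "Account Access",
    "fee confusion": "Billing",
}


def climb(raw_signal: str):
    """Return (raw, canonical, driver); unmapped signals get None for the upper rungs."""
    key = raw_signal.strip().lower()
    canonical = RAW_TO_CANONICAL.get(key)
    driver = CANONICAL_TO_DRIVER.get(canonical) if canonical else None
    return key, canonical, driver


signals = [
    "SSO loop",
    "magic link expired",
    "can't reset password",
    "billing fee confusion",
    "export button missing",  # not mapped yet, so it stays a raw signal
]
driver_counts = Counter(climb(s)[2] or "unmapped" for s in signals)
print(driver_counts)
```

Keeping unmapped signals visible instead of forcing them into a driver is the point: they are the emerging patterns you are still watching.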

I’ve seen teams resist this because they want a perfect taxonomy upfront. Fair concern. A sloppy taxonomy creates cleanup work later. But the better move is adaptive clarity: start with categories that explain at least 80% of recurring volume, then refine monthly. Taxonomies should mature like a chart of accounts in finance—stable enough to compare periods, flexible enough to absorb new reality.

Don’t stop at sentiment. Pair every driver with consequence

What gets funded isn’t the loudest issue. It’s the issue with an attached cost. That’s the shift.

This is where most driver-analysis projects stall out. They find themes, but they don’t connect those themes to business impact. A better rule is simple: if a driver shows up in more than 5% of tickets, pair it with at least one consequence metric. Sentiment is one. Churn risk is another. Customer effort matters too. If you can add an outcome layer, even better.

So instead of saying, “Billing is a top issue,” you say, “Billing confusion appears in 8% of tickets, spikes to 14% for new enterprise accounts, and carries 2x the negative sentiment rate of average support contacts.” That changes the room. Now you’re not reporting a category. You’re identifying key drivers of a measurable business problem.
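The three numbers in that sentence are simple ratios once tickets carry a driver, a segment, and a sentiment flag. The sketch below uses synthetic data sized to echo the 8%, 14%, and 2x figures. The field names and the segment labels are assumptions, not a real export schema.

```python
def make_tickets(count, driver, segment, negatives):
    """Build `count` synthetic tickets; the first `negatives` carry negative sentiment."""
    return [
        {"driver": driver, "segment": segment, "negative": i < negatives}
        for i in range(count)
    ]


# Synthetic month of 100 tickets, sized to echo the figures in the example sentence.
tickets = (
    make_tickets(7, "Billing", "new enterprise", negatives=4)
    + make_tickets(43, "Onboarding", "new enterprise", negatives=10)
    + make_tickets(1, "Billing", "other", negatives=0)
    + make_tickets(49, "Performance", "other", negatives=11)
)


def driver_share(rows, driver, segment=None):
    """Share of tickets (optionally within one segment) attributed to a driver."""
    pool = [r for r in rows if segment is None or r["segment"] == segment]
    return sum(r["driver"] == driver for r in pool) / len(pool) if pool else 0.0


def negative_rate(rows):
    """Share of tickets flagged as negative sentiment."""
    return sum(r["negative"] for r in rows) / len(rows) if rows else 0.0


def sentiment_lift(rows, driver):
    """Negative rate for one driver divided by the negative rate across all tickets."""
    baseline = negative_rate(rows)
    driver_rows = [r for r in rows if r["driver"] == driver]
    return negative_rate(driver_rows) / baseline if baseline else 0.0


print(f"{driver_share(tickets, 'Billing'):.0%} of all tickets")                    # 8% of all tickets
print(f"{driver_share(tickets, 'Billing', 'new enterprise'):.0%} in the segment")  # 14% in the segment
print(f"{sentiment_lift(tickets, 'Billing'):.1f}x negative sentiment")             # 2.0x negative sentiment
```

None of this is sophisticated. The hard part is having every ticket labeled consistently enough that the ratios mean something.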

Some teams will push back and say this sounds too analytical for support. That objection is fair because support teams already run hot, and adding consequence mapping does create another layer of work. Still, the point holds. Without the consequence layer, identifying key drivers of customer issues stays descriptive when it needs to become economic. One ticket gets attention. Fifty tickets plus a consequence trend gets action.

For a grounding source on why unstructured service data needs structured analysis, McKinsey has written about the value of using customer care data more systematically as a source of product and experience insight.

Make traceability a hard rule, not a nice-to-have

What happens when a product VP asks, “Can you show me the actual conversations?” That question is the audit, and a surprising number of CX readouts fail it.

Call this the Receipt Test. For every aggregate number, you should be able to answer three questions in under two minutes: which conversations produced this number, what quotes represent the pattern, and which customer segment is most affected? If you can’t do that, you’re not done identifying key drivers. You’re still guessing with charts.

This matters more than people admit. Product leaders don’t reject support insight because they hate support. They reject it because black-box summaries are impossible to audit. Fair point. If a team is going to shift roadmap time, process change, or staffing based on your readout, they need to trust the path from transcript to metric.

The strongest teams build evidence chains, not just dashboards:

- driver

- segment affected

- consequence metric

- representative quotes

- ticket-level validation
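An evidence chain like the one listed above can be held in a small record that refuses to count as finished until every link is filled. This is a sketch under assumptions: the class name, field names, quote, and ticket IDs are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class EvidenceChain:
    driver: str
    segment: str
    consequence_metric: str
    quotes: list[str] = field(default_factory=list)
    ticket_ids: list[str] = field(default_factory=list)

    def missing_links(self) -> list[str]:
        """Names of the links in the chain that are still empty."""
        links = {
            "driver": self.driver,
            "segment affected": self.segment,
            "consequence metric": self.consequence_metric,
            "representative quotes": self.quotes,
            "ticket-level validation": self.ticket_ids,
        }
        return [name for name, value in links.items() if not value]

    def passes_receipt_test(self) -> bool:
        """True only when every link has something behind it."""
        return not self.missing_links()


chain = EvidenceChain("Billing", "new enterprise accounts", "negative sentiment rate")
print(chain.missing_links())  # ['representative quotes', 'ticket-level validation']

chain.quotes.append("I was charged a fee nobody told me about.")  # invented quote
chain.ticket_ids.extend(["T-101", "T-102"])                       # invented IDs
print(chain.passes_receipt_test())  # True
```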

That structure sounds almost boring. Good. Boring is what holds up in leadership reviews. So once you’ve got a driver system, consequence pairing, and traceability, the obvious question is how to make identifying key drivers of customer issues operational without building a giant spreadsheet machine.

How Revelir AI Makes Driver Analysis Defensible

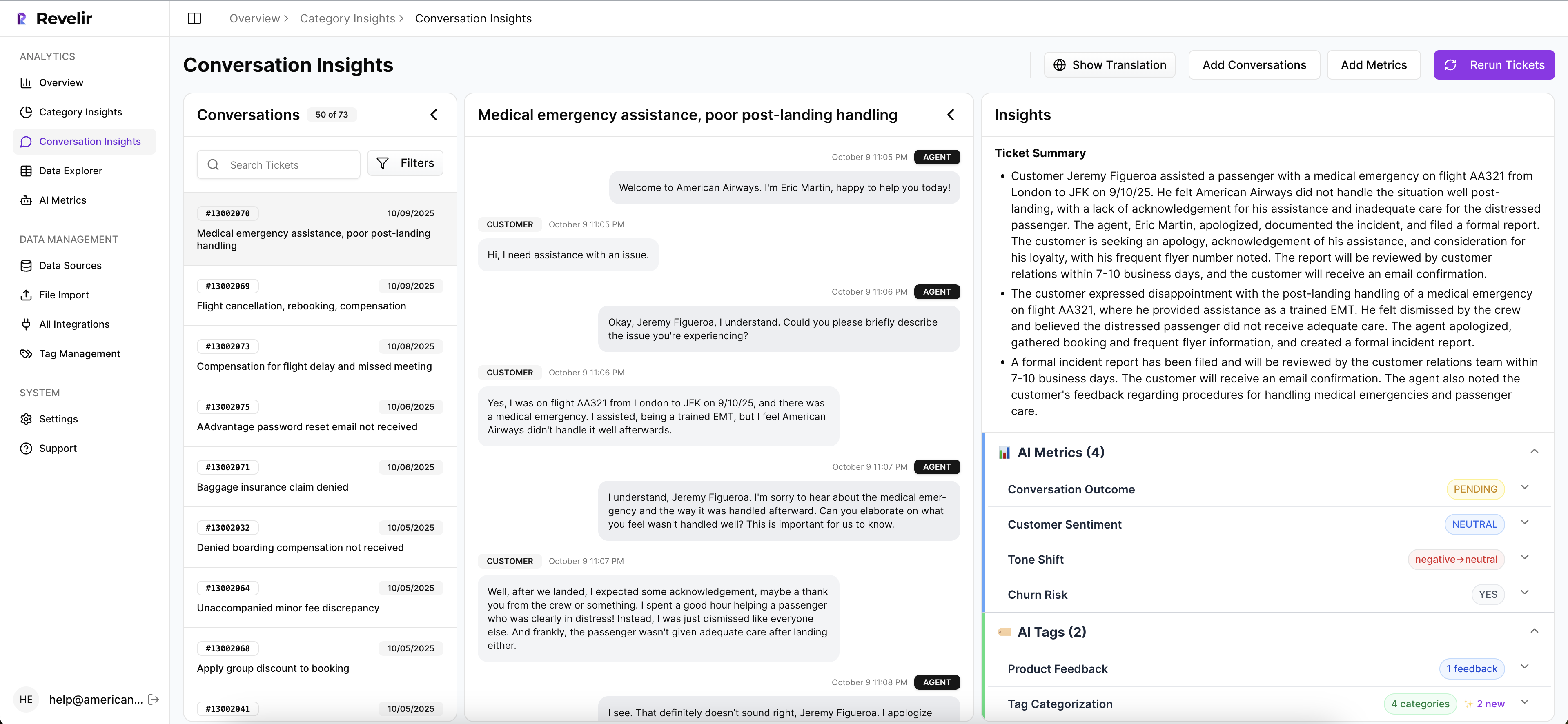

Revelir AI makes driver analysis defensible by turning support conversations into structured, traceable metrics you can inspect at both the pattern level and the ticket level. It doesn’t ask you to trust a black box. It gives you the metric, the grouping, and the underlying evidence.

Data Explorer gives you a working surface, not a static report

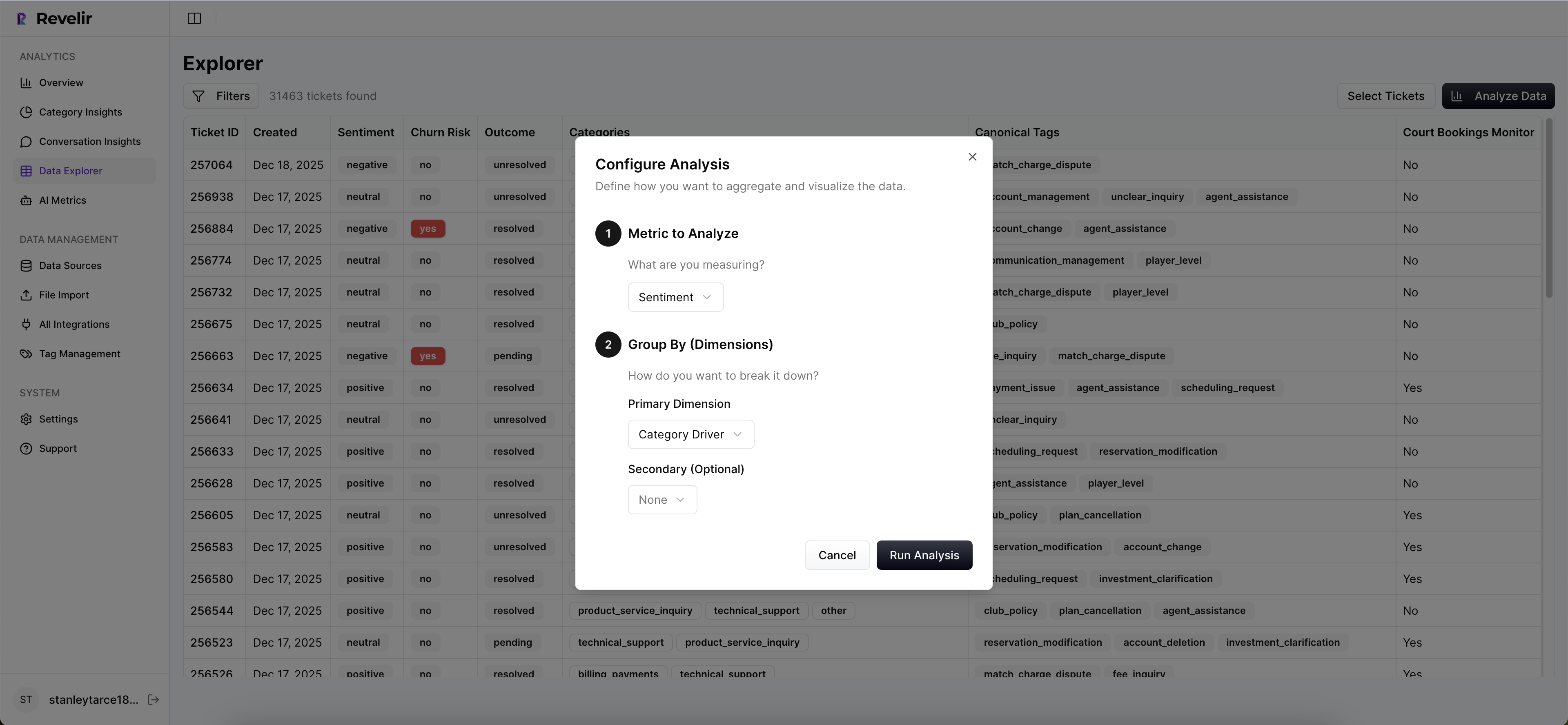

Static dashboards freeze the question too early. Revelir AI’s Data Explorer works more like a pivot table for support conversations, which matters because identifying key drivers of customer issues is almost never a one-chart exercise.

You can filter, group, sort, and inspect tickets with columns for sentiment, churn risk, effort, tags, drivers, and custom metrics. So instead of rebuilding a BI report every time somebody asks a sharper question, you can move from “negative sentiment rose” to a more focused view grouped by Driver, Canonical Tag, or other structured fields, then drill straight into the tickets behind it.

That’s the big shift. Instead of exporting, restructuring, and arguing, you can stay inside the evidence. Honestly, that’s where most teams get their time back.
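For readers who think in code, the group-then-drill move looks something like the sketch below. To be clear, this is plain Python over made-up rows, not Revelir AI's API or data model. The field names, ticket IDs, and values are assumptions chosen only to show the shape of the workflow.

```python
from collections import defaultdict

# Hypothetical rows, one record per ticket.
tickets = [
    {"id": "T-101", "driver": "Billing", "canonical_tag": "fee confusion", "sentiment": "negative"},
    {"id": "T-102", "driver": "Billing", "canonical_tag": "fee confusion", "sentiment": "negative"},
    {"id": "T-103", "driver": "Account Access", "canonical_tag": "login access failure", "sentiment": "negative"},
    {"id": "T-104", "driver": "Onboarding", "canonical_tag": "setup question", "sentiment": "positive"},
    {"id": "T-105", "driver": "Billing", "canonical_tag": "refund request", "sentiment": "negative"},
    {"id": "T-106", "driver": "Billing", "canonical_tag": "refund request", "sentiment": "neutral"},
]


def group_by(rows, field_name):
    """Bucket rows by the value of one field, like a one-column pivot."""
    groups = defaultdict(list)
    for row in rows:
        groups[row[field_name]].append(row)
    return dict(groups)


def negative_counts(rows, field_name):
    """Count negative-sentiment tickets per group, largest first."""
    negatives = [r for r in rows if r["sentiment"] == "negative"]
    counts = {name: len(members) for name, members in group_by(negatives, field_name).items()}
    return sorted(counts.items(), key=lambda pair: pair[1], reverse=True)


# Step 1: which driver carries the most negative sentiment?
top_driver, top_count = negative_counts(tickets, "driver")[0]

# Step 2: drill into the exact tickets behind that number.
drill_down = [
    r["id"] for r in group_by(tickets, "driver")[top_driver] if r["sentiment"] == "negative"
]
print(top_driver, top_count, drill_down)  # Billing 3 ['T-101', 'T-102', 'T-105']
```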

Analyze Data and Drivers move you from what happened to why

Picture a support ops lead staring at one giant ticket table and trying to explain the month to product. That workflow dies fast. Revelir AI’s Analyze Data view groups metrics by Driver, Canonical Tag, or Raw Tag, so identifying key drivers of support issues becomes an analysis workflow instead of a sorting exercise.

Drivers are useful because they turn scattered ticket language into leadership-readable themes. The Hybrid Tagging System helps with that. AI-generated Raw Tags surface the specific issue signals in each conversation. Canonical Tags give you normalized reporting language. Users can map raw to canonical tags and refine the taxonomy over time, and Revelir AI learns from those mappings for future tickets.

That means your analysis can stay specific without becoming chaotic. You don’t have to choose between nuance and consistency. You get both, which is rare.

Evidence-backed traceability closes the trust gap

Can a metric survive a hostile meeting? That’s the real product test for support analytics.

Revelir AI links aggregate metrics back to the original conversations and quotes. With Conversation Insights, teams can drill into transcripts, review AI-generated summaries, inspect assigned tags and drivers, and validate the pattern before making the claim in a review.

That sounds small until you’ve lived the alternative. The alternative is somebody asking, “Can you show me the actual tickets?” and the room going quiet while someone digs through exports. Revelir AI changes that dynamic. The proof is already attached.

There’s also a practical advantage if your data is already sitting in existing systems. Revelir AI can ingest support data through Zendesk Integration or CSV Ingestion, process 100% of those conversations, and then let teams export structured metrics through API Export if they need the data elsewhere. So you don’t need to rip out your helpdesk to start identifying key drivers of customer issues in a more defensible way.

What Better CX Decisions Look Like From Here

Better CX decisions come from a simple shift: stop treating scores as the answer and start treating them as the prompt. The real work is identifying the key drivers behind what customers are actually telling you, then tying those drivers to evidence strong enough to survive a tough meeting.

It’s usually the same story. The teams that move fastest aren’t the ones with the prettiest dashboards. They’re the ones that can say what’s breaking, who it’s hurting, and show the ticket trail behind the claim. That’s a different standard.

If that’s the direction you want, get started with Revelir AI.

Frequently Asked Questions

How do I analyze customer support tickets for churn risk?

You can use Revelir AI's Data Explorer to analyze customer support tickets for churn risk. Start by filtering your tickets to focus on those with high churn risk scores. Then, group these tickets by drivers or canonical tags to identify common themes. This will help you see which issues are most likely leading to churn. Finally, drill down into specific tickets to review the conversations and gather insights on customer pain points.

What if I want to track emerging support issues?

To track emerging support issues, utilize Revelir AI's Hybrid Tagging System. This system generates raw tags that surface granular themes from support conversations. You can then map these raw tags to canonical tags for clearer reporting. Regularly review the emerging patterns and adjust your tagging as necessary to ensure you're capturing new issues as they arise.

Can I connect Revelir AI to my existing helpdesk system?

Yes, you can connect Revelir AI to your existing helpdesk system, such as Zendesk. This integration allows Revelir to ingest historical and ongoing support tickets automatically. By doing this, you ensure that your analysis is always up-to-date and covers 100% of your conversations, eliminating the need for manual exports and reducing the risk of missing critical insights.

How do I ensure all support conversations are analyzed?

To ensure all support conversations are analyzed, use Revelir AI's full-coverage processing feature. This capability processes 100% of ingested tickets without relying on sampling. By doing so, you eliminate blind spots and can confidently identify key drivers of customer issues based on complete data, rather than a small subset.

When should I use the Analyze Data feature?

You should use the Analyze Data feature when you want to summarize metrics related to customer support issues. This tool allows you to group data by drivers, canonical tags, or raw tags, providing a clearer view of the underlying issues. It’s particularly useful during team meetings or reviews when you need to present structured insights backed by data.