Most teams say they trust their AI. Then a VP asks “show me the proof,” and the room goes quiet. Building trust in AI is not about slogans. It is about evidence you can trace, coverage you can defend, and signals that hold up when budgets and roadmaps are on the line.

It is usually not the model that breaks trust. It is the process around it: sampling, black‑box scores, and no way to click into the exact ticket that drove the chart. The same goes for CX and product reviews. If nobody is checking the source, the numbers feel optional.

Key Takeaways:

- Trust in AI is earned through three things: coverage, clarity, and traceability.

- Sampling creates false certainty; 100% conversation coverage removes debate.

- Scores without drivers stall decisions; measure “why,” not just “what.”

- Black‑box outputs erode confidence; link every metric to source tickets and quotes.

- A practical playbook: define trust criteria, capture all conversations, use hybrid tagging, enforce traceability, analyze by drivers.

- Governance matters; decision records and regular reviews keep your AI honest.

- Tools that compute metrics on every ticket and link to quotes make this easy to sustain.

Why AI Fails in CX Without Evidence You Can Trust

AI in CX fails when leaders cannot see proof behind the numbers. Trust collapses when coverage is partial, metrics are opaque, and no one can drill into the exact tickets that drove a spike. A single tough question in a product review exposes the gap and stalls decisions.

Most teams think they have enough signal because they see trends and a few examples. The problem is not a lack of data. It is blind spots and untraceable summaries. When you rely on samples or black‑box tags, you risk picking the wrong priority, wasting a sprint, and losing credibility across functions. Honestly, the moment when someone asks “show me where you saw that” and you cannot click into it is brutal.

Sampling Creates False Certainty

Sampling looks efficient until it misses the pattern that matters. A ten percent review can feel representative, but live operations are lumpy, and angry customers are not evenly distributed. High‑value segments may be underrepresented, new product cohorts may be spiking, and your sample can quietly skip both. You walk into a review with a clean chart and leave with a debate about method, not a decision.
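To put a number on that risk, here is a minimal sketch that computes the chance a simple random sample contains no tickets at all from a given cohort. The function name and the ticket counts are illustrative assumptions, not figures from any real helpdesk, and real review samples are rarely perfectly random.

```python
from math import comb


def prob_sample_misses_cohort(total_tickets: int, cohort_tickets: int, sample_size: int) -> float:
    """Probability that a simple random sample (drawn without replacement)
    contains zero tickets from a cohort of the given size."""
    if not 0 <= cohort_tickets <= total_tickets:
        raise ValueError("cohort_tickets must be between 0 and total_tickets")
    if not 0 <= sample_size <= total_tickets:
        raise ValueError("sample_size must be between 0 and total_tickets")
    other_tickets = total_tickets - cohort_tickets
    # Ways to draw the whole sample from non-cohort tickets, divided by all
    # possible samples. math.comb returns 0 when the sample is larger than
    # the non-cohort pool, so the result is 0.0 in that case.
    return comb(other_tickets, sample_size) / comb(total_tickets, sample_size)


# Hypothetical month: 1,000 tickets, 20 from a newly launched cohort, 10% reviewed.
miss_probability = prob_sample_misses_cohort(1000, 20, 100)
print(f"Chance the sample skips the new cohort entirely: {miss_probability:.1%}")
```

Under these assumed numbers the sample skips the new cohort entirely about 12% of the time, and even when it does not, it will usually contain only one or two tickets from it.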

Coverage changes the conversation. When you analyze every ticket, you can pivot by cohort, plan, region, or release window without hedging. You do not argue about representativeness, you show it. Leaders do not want perfect precision, they want confidence that you did not miss the thing that hurts revenue next month. In my experience, coverage is the line between trust and noise.

Scores Without Drivers Stall Decisions

A downward CSAT or negative sentiment trend signals a problem, not a plan. Scores alone do not say whether onboarding steps confuse new users, whether billing language is wrong, or whether a key integration is failing. You cannot prioritize work with a direction arrow. You need drivers that answer why the score moved at all.

Driver analysis turns vague concern into action. Group issues under themes that map to how your company works, then quantify where effort spikes or where churn risk clusters. A product leader can trade off one release item for another when the “why” is quantified. Without the “why,” you burn cycles and lose speed.
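As a rough illustration of what quantifying the “why” can look like, the sketch below groups tickets by driver and counts negative sentiment and churn-risk flags. The ticket fields (`driver`, `sentiment`, `churn_risk`) and the sample records are hypothetical; your own export will have different names.

```python
from collections import defaultdict

# Hypothetical ticket records; field names are assumptions for illustration.
tickets = [
    {"id": "T-1", "driver": "Billing", "sentiment": "negative", "churn_risk": True},
    {"id": "T-2", "driver": "Billing", "sentiment": "negative", "churn_risk": False},
    {"id": "T-3", "driver": "Onboarding", "sentiment": "neutral", "churn_risk": False},
    {"id": "T-4", "driver": "Account Access", "sentiment": "negative", "churn_risk": True},
    {"id": "T-5", "driver": "Billing", "sentiment": "positive", "churn_risk": True},
]


def summarize_by_driver(records):
    """Count tickets, negative sentiment, and churn-risk flags per driver."""
    summary = defaultdict(lambda: {"tickets": 0, "negative": 0, "churn_risk": 0})
    for record in records:
        row = summary[record["driver"]]
        row["tickets"] += 1
        row["negative"] += int(record["sentiment"] == "negative")
        row["churn_risk"] += int(bool(record["churn_risk"]))
    # Put the driver with the most churn-risk tickets first.
    return sorted(summary.items(), key=lambda item: item[1]["churn_risk"], reverse=True)


for driver, counts in summarize_by_driver(tickets):
    print(driver, counts)
```

Sorting by churn-risk count is only one possible ranking. Sort by whichever signal your leadership actually uses to trade off work.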

Black‑Box AI Breaks Trust in the Room

Opaque models break down exactly when stakes rise. If outputs cannot be traced to source text, the conversation shifts from “what should we fix” to “can we trust this.” No one wants to bet a quarter on a number they cannot verify. You feel it as soon as eyes shift from charts to faces.

Traceability fixes that. Every aggregate must link to the exact tickets and quotes behind it so stakeholders can click once, validate, and move on. The mood in the room changes when verification takes seconds, not days. You stop defending your method and start aligning on work.

A Practical Playbook for Building Trust in AI Across Support

Building trust in AI for support conversations means you define what good looks like, you measure all of your data, and you keep a clean line from metric to moment. The work is not glamorous, but it is the difference between fast decisions and slow arguments. Let’s pretend you are starting from scratch and want this to hold up in a leadership review tomorrow.

Define What “Trust” Means for Your Organization

Trust needs a checklist, not a vibe. Start by writing down the dimensions that matter for your context, because teams over‑optimize one and quietly ignore the rest. Accuracy matters, but so do coverage, traceability, latency, and fairness. You cannot defend an insight if one of those is broken.

In my experience, five dimensions anchor most CX and product use cases. Accuracy is the rate at which your tags and metrics reflect the real issue. Coverage is the percentage of conversations analyzed, measured across the cohorts that matter and not just by total ticket count. Traceability is your ability to click from a chart to a quote in seconds. Latency is how fast new tickets become reliable metrics. Fairness is whether your system treats cohorts consistently. Define targets for each, then agree on what passes in a review.
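One way to turn those five dimensions into a checklist is to write each target down as data and check observed values against it. The sketch below does that. Every threshold is a placeholder assumption, and `fairness_gap` (for example, the largest accuracy difference between cohorts) is one hypothetical way to put a number on consistency, not a standard definition.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class TrustCriterion:
    name: str
    target: float
    higher_is_better: bool = True

    def passes(self, observed: float) -> bool:
        """Compare an observed value against the agreed target."""
        if self.higher_is_better:
            return observed >= self.target
        return observed <= self.target


# Placeholder targets; agree on real ones with your stakeholders.
CRITERIA = [
    TrustCriterion("accuracy", 0.90),
    TrustCriterion("coverage", 1.00),
    TrustCriterion("traceability", 1.00),  # share of chart values linked to tickets
    TrustCriterion("latency_hours", 24, higher_is_better=False),
    TrustCriterion("fairness_gap", 0.05, higher_is_better=False),
]


def review(observed: dict[str, float]) -> dict[str, bool]:
    """Return pass/fail per dimension for one review cycle."""
    return {criterion.name: criterion.passes(observed[criterion.name]) for criterion in CRITERIA}


print(review({
    "accuracy": 0.93,
    "coverage": 1.00,
    "traceability": 1.00,
    "latency_hours": 30,
    "fairness_gap": 0.02,
}))
```

Keeping the targets in one list makes it harder to quietly optimize one dimension while another slips.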

You can borrow from public frameworks to ground this conversation. The NIST AI Risk Management Framework lays out trustworthy AI characteristics in plain terms, and the European Parliament’s summary of the AI Act shows where regulation is headed for transparency and accountability. Standards are not red tape here, they are shared language that reduces debate later.

If you want a formal anchor for operations, the ISO/IEC 42001 AI management system standard offers a governance baseline. You do not need certification to benefit. Use it to sanity‑check your processes and to spot gaps before an exec or auditor does. A little structure avoids costly mistakes.

Insist on 100% Conversation Coverage

Partial views create the worst kind of confidence, the kind that looks right until it fails. Coverage is not a nice‑to‑have, it is the foundation of trust in AI for CX. If you only measure a slice, you will miss the spikes that drive churn risk and you will waste time debating samples. That is avoidable.

Make a simple rule: every ticket gets processed, automatically. Start with your main helpdesk, then backfill with CSVs if you need a historical baseline. Set ingestion so new tickets flow in daily, then validate the pipeline before anyone looks at a chart. The goal is not complexity. The goal is no blind spots.
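Validating the pipeline can start with a simple reconciliation: compare the ticket IDs your helpdesk reports against the IDs your analysis has processed, broken out by cohort. This is a sketch under assumed inputs (a list of `(ticket_id, cohort)` pairs and a set of processed IDs), not a description of any particular integration.

```python
from collections import Counter


def coverage_by_cohort(source_tickets, processed_ids):
    """Return (coverage rate per cohort, list of ticket IDs never processed).

    source_tickets: iterable of (ticket_id, cohort) pairs from the helpdesk.
    processed_ids: set of ticket IDs the analysis pipeline has handled.
    """
    total = Counter()
    covered = Counter()
    missing = []
    for ticket_id, cohort in source_tickets:
        total[cohort] += 1
        if ticket_id in processed_ids:
            covered[cohort] += 1
        else:
            missing.append(ticket_id)
    rates = {cohort: covered[cohort] / total[cohort] for cohort in total}
    return rates, missing


# Hypothetical data: one SMB ticket never made it through ingestion.
source = [("T-1", "enterprise"), ("T-2", "enterprise"), ("T-3", "smb"), ("T-4", "smb")]
processed = {"T-1", "T-2", "T-3"}

rates, missing = coverage_by_cohort(source, processed)
print(rates)
print(missing)
```

Reporting coverage per cohort rather than as one overall percentage is deliberate. A 98% overall rate can hide a cohort that is barely covered.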

Coverage is also a contract with your stakeholders. When a product manager asks for a cut by plan and release window, you can do it. When an exec asks about enterprise vs SMB, you can answer quickly. You are no longer guessing, you are measuring. Frankly, that shift calms the room.

Adopt a Hybrid Tagging System That Learns

Generic tags miss nuance. Human‑only tags break at volume. The fix is a hybrid system. Let AI assign granular raw tags that surface emerging themes, then map those to a human‑aligned canonical taxonomy that matches your business language. Raw for discovery, canonical for reporting. Both matter.

Start with a lightweight taxonomy built from how you already talk about your product, then refine as patterns emerge. Merge duplicates, retire stale categories, and document decisions so your mappings stay coherent. Over time, the system learns those mappings and applies them to new tickets automatically. Your reporting gets clearer, not noisier, as volume grows.
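The raw-to-canonical layer can be pictured as a lookup that also surfaces what it does not recognize yet. The sketch below uses a static dictionary for clarity; a system that learns mappings over time would maintain this table automatically. All tag names here are made up for illustration.

```python
# Hypothetical mapping from granular raw tags to canonical categories.
CANONICAL_MAP = {
    "refund_not_received": "Billing",
    "double_charge": "Billing",
    "sso_login_loop": "Account Access",
}


def to_canonical(raw_tags, mapping=CANONICAL_MAP):
    """Map raw tags to canonical tags and collect any raw tags with no mapping."""
    canonical = set()
    unmapped = []
    for tag in raw_tags:
        if tag in mapping:
            canonical.add(mapping[tag])
        else:
            # Unmapped tags are candidates for a new or merged canonical category.
            unmapped.append(tag)
    return sorted(canonical), unmapped


canonical_tags, needs_review = to_canonical(
    ["double_charge", "refund_not_received", "invoice_pdf_blank"]
)
print(canonical_tags)
print(needs_review)
```

Returning the unmapped tags instead of dropping them keeps the discovery layer visible, which is what lets the taxonomy evolve as new themes appear.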

One more thing here: make sure your team can see both layers. Analysts need raw granularity to find signals. Executives need canonical clarity to make calls. When both are present, you reduce friction and gain speed. Everyone knows what the words mean.

Make Every Metric Traceable to a Ticket

Trust collapses when a chart has no receipts. Every aggregate number should link to the exact tickets and quotes that created it. If a dashboard says “Billing friction drove negative sentiment for enterprise,” you should be two clicks from three representative transcripts. People believe what they can verify.
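In data terms, “receipts” means the aggregate never gets separated from the rows that produced it. Here is a minimal sketch of that idea, where the metric value is derived from the list of contributing ticket IDs instead of being stored on its own. The class, field names, and sample tickets are hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class TraceableMetric:
    label: str
    ticket_ids: list = field(default_factory=list)
    quotes: list = field(default_factory=list)

    @property
    def value(self) -> int:
        # The number on the chart is always the count of tickets behind it.
        return len(self.ticket_ids)


def negative_sentiment_by_driver(tickets):
    """Build one traceable metric per driver from negative-sentiment tickets."""
    metrics = {}
    for ticket in tickets:
        if ticket["sentiment"] != "negative":
            continue
        driver = ticket["driver"]
        if driver not in metrics:
            metrics[driver] = TraceableMetric(label=driver)
        metrics[driver].ticket_ids.append(ticket["id"])
        metrics[driver].quotes.append(ticket["quote"])
    return metrics


# Hypothetical tickets for illustration only.
sample_tickets = [
    {"id": "T-10", "driver": "Billing", "sentiment": "negative", "quote": "I was charged twice."},
    {"id": "T-11", "driver": "Billing", "sentiment": "negative", "quote": "The invoice total is wrong."},
    {"id": "T-12", "driver": "Onboarding", "sentiment": "positive", "quote": "Setup was quick."},
]

billing = negative_sentiment_by_driver(sample_tickets)["Billing"]
print(billing.value, billing.ticket_ids, billing.quotes[0])
```

Because `value` is computed from `ticket_ids`, the chart number and its evidence cannot drift apart.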

Build a habit around decision records. When you prioritize a fix, record the driver, the cohorts affected, the metrics that matter, and the quotes that capture the pattern. Keep it short. The point is not bureaucracy. The point is a trail you can share with finance, product, and leadership without rewriting your story every week.

What to include in a decision record:

- Driver and canonical tag behind the issue, with short rationale

- Cohorts most affected, with simple cuts like plan or segment

- Metrics that moved, with precise definitions

- Two to three quotes that represent the pattern

- Owner and date, plus expected impact window
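If you want decision records to stay consistent, the checklist above can be captured as a small structured type. This is one possible shape, with hypothetical field names and example values, and it enforces the two-to-three-quote guideline at creation time.

```python
import json
from dataclasses import asdict, dataclass
from datetime import date


@dataclass
class DecisionRecord:
    driver: str
    canonical_tag: str
    rationale: str
    cohorts: list[str]
    metrics: dict[str, str]  # metric name -> precise definition
    quotes: list[str]
    owner: str
    decided_on: date
    expected_impact_window: str

    def __post_init__(self):
        if not 2 <= len(self.quotes) <= 3:
            raise ValueError("include two to three representative quotes")

    def to_json(self) -> str:
        """Serialize for sharing; dates become ISO strings."""
        data = asdict(self)
        data["decided_on"] = self.decided_on.isoformat()
        return json.dumps(data, indent=2)


# Hypothetical example values.
record = DecisionRecord(
    driver="Billing",
    canonical_tag="Invoice errors",
    rationale="Negative sentiment clusters on incorrect invoice totals.",
    cohorts=["enterprise"],
    metrics={"negative_sentiment_share": "negative tickets / all tickets for this driver"},
    quotes=["I was charged twice.", "The invoice total is wrong."],
    owner="cx-analytics",
    decided_on=date(2024, 1, 15),
    expected_impact_window="next two release cycles",
)
print(record.to_json())
```

A plain JSON export keeps the record easy to paste into whatever finance, product, and leadership already read.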

Measure Drivers, Not Just Scores

Scores show direction, drivers show cause. If you are not grouping conversations under drivers like Billing, Onboarding, Account Access, or Performance, you are forcing leaders to guess what to fix. Driver analysis removes the guesswork and keeps debates grounded in what actually changed.

Design your driver set to reflect how your company makes decisions. Too broad and you learn nothing. Too narrow and you drown in categories. Start with five to eight, then use raw tags to roll up emerging themes into the right buckets. Review monthly. Everyone should agree on meanings, so your charts are read the same way in every room.

Do not stop at sentiment. Track customer effort where your data supports it, flag churn risk, and capture outcomes like resolved or pending. The mix tells a better story than any single metric. You will catch issues earlier and you will defend your calls with less friction.

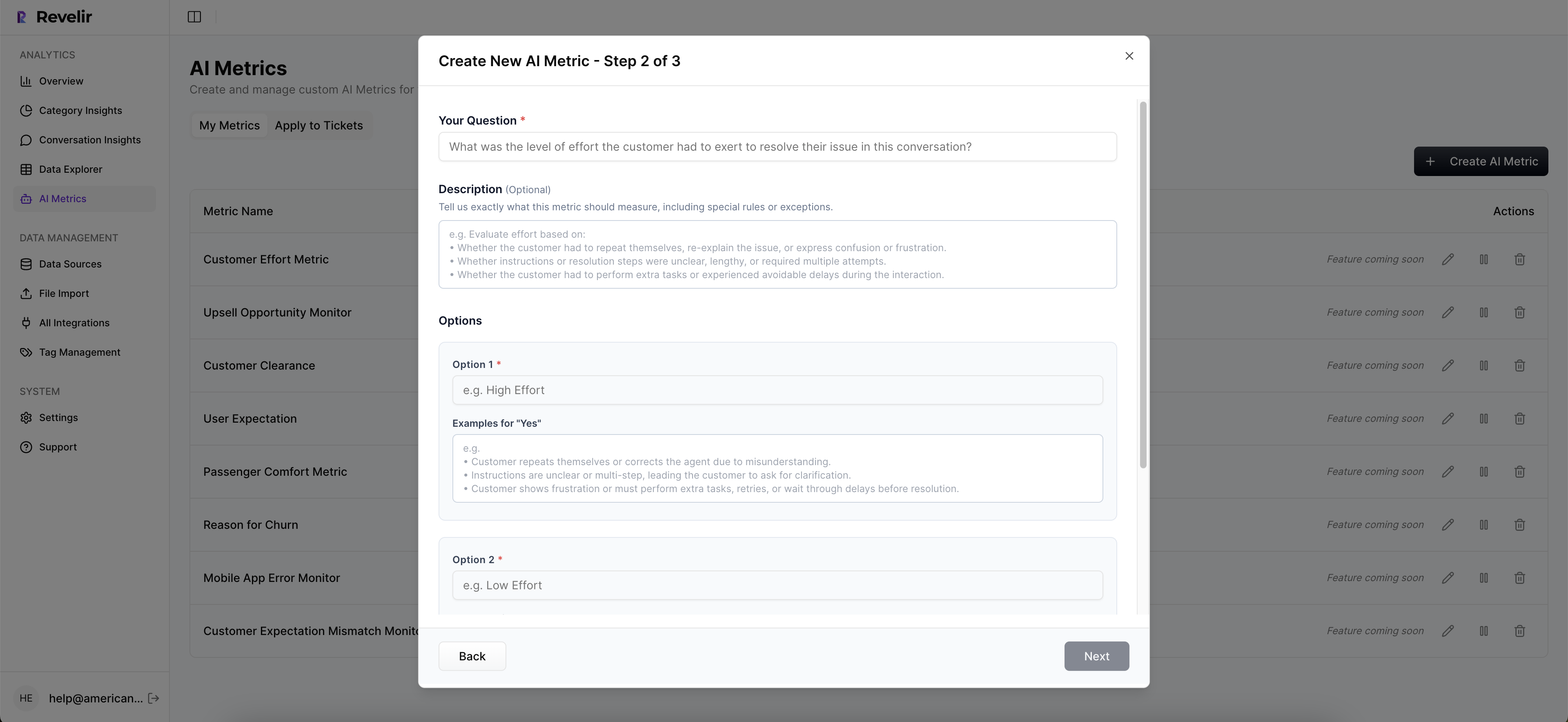

How Revelir Makes Trusted AI Evidence Routine for CX

Revelir makes building trust in AI practical by measuring 100% of your support conversations and tying every aggregate metric to the exact tickets and quotes behind it. CX and product teams can filter, group, and drill down in seconds, then walk into reviews with proof instead of anecdotes.

Full‑Coverage Metrics With Traceable Proof

Revelir processes every ingested ticket with no sampling and computes core signals like Sentiment, Churn Risk, Customer Effort when supported, and Conversation Outcome. Those metrics are not trapped in a black box, because every chart links directly to the underlying conversations. You can click into a ticket, read the AI‑generated summary, and copy a representative quote for your deck without leaving the analysis flow.

Raw Tags and Canonical Tags work together so discovery and reporting stay aligned. Analysts see granular, emerging themes, while leaders get categories that match how the business talks. Drivers sit above both, so you can answer the “why” in leadership‑friendly terms without reworking your data. Conversation Insights gives you ticket‑level drill‑downs when you need to validate a pattern fast, which means less risk of misreading the signal and less cost from chasing the wrong fix.

From Exploration to Action in Minutes

Data Explorer is a pivot‑table‑like workspace where you filter, group, sort, and inspect every ticket with columns for sentiment, churn risk, effort, tags, drivers, and custom metrics. Analyze Data runs grouped aggregations by Driver, Canonical Tag, or Raw Tag, then links results back to tickets for fast validation. Zendesk Integration keeps your dataset fresh, CSV Ingestion covers pilots and backfills, and API Export moves structured metrics into your BI if you need to share more widely.

Custom AI Metrics let you encode your business language directly, so you can track signals like Upsell Opportunity or Reason for Churn alongside core fields. Evidence‑Backed Traceability means every aggregate is verifiable, which changes how cross‑functional meetings feel. In my experience, the ability to show the exact quote behind a bar in a chart ends debates in under a minute. Revelir takes you there quickly and consistently.

What Building Trust in AI Looks Like Next Quarter

Building trust in AI pays off when your reviews shift from “can we trust this” to “what should we fix first.” You get coverage you can defend, clarity on why scores move, and traceability that speeds alignment. You stop wasting cycles on method debates and start reducing churn risk faster.

Make the playbook real. Define trust, process every conversation, keep hybrid tags clean, and require a click path from chart to quote. Use drivers to explain why something changed, not just that it changed. The result is simple: leaders ask fewer meta‑questions and make decisions sooner.

If you want the easy path, use a system that already measures 100% of conversations, computes AI metrics, and links every insight to the source tickets. That is how trust becomes routine, not a special project.

Frequently Asked Questions

How do I ensure 100% conversation coverage with Revelir AI?

To achieve 100% conversation coverage with Revelir AI, start by integrating your helpdesk system, such as Zendesk, so that all support tickets are ingested automatically. Every conversation is then processed without manual tagging. Use CSV ingestion to backfill historical data and close any gaps. Once the integration is set up, validate that new tickets flow in daily so that your analysis covers all conversations and does not miss critical insights.

What if I need to analyze specific customer segments?

You can analyze specific customer segments by using the Data Explorer feature in Revelir AI. Filter your dataset based on criteria such as customer type or product cohort. This allows you to drill down into the metrics that matter most for that segment. You can also create custom AI metrics to track specific signals relevant to those segments, ensuring your analysis is tailored and actionable.

Can I track customer effort alongside sentiment?

Yes, you can track customer effort alongside sentiment using Revelir AI's AI Metrics Engine. This feature automatically computes metrics like Customer Effort and Sentiment for each conversation. You can view these metrics in the Data Explorer, allowing you to analyze how customer effort correlates with sentiment trends. This dual tracking helps you identify areas needing improvement and enhances your understanding of customer experiences.

When should I use hybrid tagging in Revelir AI?

You should use hybrid tagging in Revelir AI when you want to capture both granular details and high-level categories in your support conversations. Start by allowing the AI to generate Raw Tags for specific issues, then map these to Canonical Tags that reflect your organization’s language. This approach ensures that your tagging system is both comprehensive and aligned with how your team communicates, making reporting and analysis clearer.

Why does traceability matter in AI metrics?

Traceability is crucial in AI metrics because it allows you to link every aggregate number back to the source conversations and quotes. This transparency builds trust with stakeholders, as they can verify the data behind your insights quickly. In Revelir AI, every metric is designed to be traceable, enabling you to validate patterns and support your findings with concrete evidence, which is essential for effective decision-making.