Most KB programs try to be smart about tickets, then ship the wrong thing. It’s usually one of two mistakes. Either you ignore AI signals from conversations and wait on gut, or you auto-update the knowledge base from whatever the model spits out and flood it with noise. Both fail. One never moves the needle on repeat tickets. The other erodes trust.

Let’s pretend you want faster fixes without guesswork. The path isn’t “more reviews” or “more AI.” It’s evidence-backed, ticket-driven content with guardrails. You measure 100 percent of conversations, turn signals into clear topics, and publish only the pieces that meet a threshold for volume, severity, and impact. Nobody’s checking that today. That’s the gap.

Key Takeaways:

- Set signal thresholds that earn a KB slot, combining volume, severity, and churn or effort risk.

- Map raw AI tags to canonical KB topics so you don’t create near-duplicate articles that confuse search.

- Pull quotes, screenshots, and steps straight from tickets, then generate drafts that carry evidence, not fluff.

- Run a human-in-the-loop validation pass with a staged rollout, monitors, and rollback rules to protect quality.

- Track repeat-ticket volume for fixed issues and time-to-publish, then tune thresholds weekly.

- Aim to cut time-to-publish from about 14 days to under 48 hours and reduce repeat volume 15 to 25 percent in eight weeks.

Why Auto-Update Knowledge Base From Ticket Signals Beats Manual Reviews

Publishing from ticket signals works when you treat conversations as data, not anecdotes. You get clear patterns, quantifiable impact, and evidence you can show in a product review. Manual reviews, by contrast, miss coverage and invite bias. Score-watching has the same problem: it tells you the “what,” never the “why.”

The Two Failing Patterns You See Everywhere

Most teams default to either stalling or spraying. Stalling looks like weekly triage where the loudest story wins, then nothing ships for two weeks. Spraying is worse. You set an AI task, ship three drafts a day, and wake up to a messy KB where search returns five similar answers. Both are expensive.

The fix isn’t extreme. It’s discipline. Define when a topic earns a page, prove the root cause with ticket evidence, and publish one canonical answer that resolves the real problem. No more “maybe helpful” content. No more internal debates about which example to believe. The KB becomes a source of truth, not a scrapbook.

What “Evidence-Backed” Really Means For A KB

Evidence-backed means every KB decision points back to the same thing, the exact tickets and quotes that led you there. You can show the pattern, not just claim it. You can also defend a rollback when an article underperforms after release.

In practice, it looks like three tests:

- Clear driver: the topic rolls up to a driver like Billing or Account Access, not a vague bucket.

- Repeatability: quotes show the same failure across customers and time windows.

- Fixable scope: the article teaches a resolution users can execute, not a support-only workaround.

You Can’t Auto-Update Knowledge Base From Scores Alone

Scores don’t tell you which article to write or how to write it. You need drivers, tags, and quotes to reveal the root cause and the right fix. Without that, you risk new pages that look right but fail to reduce repeats. That’s the hidden cost of score-only thinking.

Scores Tell You “What,” Drivers Tell You “Why”

A dip in CSAT is a fire alarm. Helpful, but not a diagnosis. Drivers and canonical tags are the diagnosis. They show whether new users struggle in setup, whether billing language confuses upgrades, or whether performance issues spike on a new release. Industry research keeps repeating the same point: driver-level insight wins decisions, not raw scores. For context, see Gartner’s analysis on driver-based CX decisioning in customer service leadership reports (Gartner CSS hub).

When you map raw AI tags to a canonical taxonomy, you stop arguing anecdotes and start comparing cohorts. Enterprise vs SMB. New vs returning. Web vs mobile. That’s where the real “why” shows up. And that’s where your KB actually earns its keep.

Coverage Beats Sampling Every Single Time

Sampling invites mistakes. You’ll miss the quiet but costly pattern, then overreact to a one-off rant from a VIP. With 100 percent coverage, you see outliers, but you also see the base rate. You can spot an issue early, then decide if it’s a KB fix, a product issue, or both.

Zendesk’s CX Trends reports have called out the shift to conversation data and automation at scale, which aligns with this approach (Zendesk CX Trends). The goal isn’t “more dashboards.” The goal is traceable signals that move work. Fast.

The Cost Of Waiting: Repeat Tickets, Lost Trust, And Stale Articles

Delay has a price. Every day you wait, you pay in repeat tickets, agent time, and customer frustration. The common pattern we see is about 14 days from first signal to live KB update. During that window, repeats pile up, and the KB falls out of sync. That’s a real cost.

Where The Days Disappear

Time vanishes in handoffs. Research to brief. Brief to draft. Draft to approval. Approval to publish. Each hop looks harmless, an hour here, a day there. Add meetings and “quick reviews,” and a week is gone. Add stakeholder edits after a late surprise, and two weeks disappear.

You can compress it if you kill the guesswork. Let signals pick the topic. Let evidence pre-fill the outline. Let the draft inherit quotes and steps that already worked in real tickets. Then reviewers act as validators, not co-authors. That shrinks cycle time without risking quality.

Here’s the usual drag, in plain terms:

- Topic debates that ignore drivers and over-index on anecdotes.

- Drafts that start from a blank page and wander.

- Approvals with no acceptance criteria, so comments spiral.

- Publishing blocked on “ownership” questions that nobody’s tracking.

Quality Debt Shows Up In Meetings

Quality debt isn’t abstract. It shows up when a PM asks, “Where did this number come from?” and the room goes quiet. Or when leadership skims the KB and finds four versions of the same fix. Trust slips. Next time you bring a KB plan, you get more scrutiny, not less.

Evidence fixes this. Show the driver trend, show the quotes, show the reduction in repeats after launch. Tie it to cost. Then the conversation shifts from “Do we believe you?” to “What’s next?” That’s the goal.

What It Feels Like When The KB Lags Behind Reality

You can hear the lag in the queue. Agents paste the same workaround, again and again. Customers get three different answers from three pages. You feel the waste, you watch backlog rise, and you start to doubt your own KB search. It’s draining.

You Can Hear The Friction In The Tickets

Read ten threads on a broken sign-in flow. The emotion is right there. Confusion. Then anger. Then churn threat. If you wait for “enough” examples by gut, you’ll miss the pattern until it’s loud. And loud is late. Raw tags and drivers catch it earlier. That’s the relief.

It’s usually the same three articles too. Out of date. Near-duplicates. Missing a key step. When you fix them with proof and see repeat tickets drop, the floor gets a little quieter. That’s not magic. That’s a clean pipeline doing its job.

The Approval Spiral No One Owns

Approvals get blamed, but the spiral starts earlier. Vague briefs. Loose acceptance criteria. Endless “what about” comments. Then a last-minute stakeholder adds a “small” change, and your publish date slips. Nobody’s checking the real rule: did we meet the evidence threshold to ship?

Tighten the loop. One owner. Clear thresholds. Drafts seeded with quotes and steps pulled from tickets that already solved the problem. Give approvers a checklist. If it passes, publish. If it misses, fix the gaps. Simple beats heroic.

How To Auto-Update Knowledge Base From Support Conversations Without Wrecking Quality

You can auto-update your knowledge base from support conversations without wrecking trust by enforcing thresholds, mapping tags to canonical topics, generating evidence-backed drafts, and running a tight validation-and-rollback loop. That replaces guesswork with signals and protects quality while you speed up. Do that, and repeat tickets fall.

Define Signal Thresholds That Earn A Slot

Start by making publishing criteria binary. No hunches. A topic earns a KB update when it crosses your threshold for volume, severity, and impact on churn or effort. The formula doesn’t need to be perfect on day one, it needs to be explicit and auditable.

Set a practical rule set like:

- Volume: at least X tickets in Y days with the same raw tag pattern.

- Severity: high-effort or negative-sentiment skew above a set ratio.

- Impact: segment-adjusted, for example, VIP or new-user concentration.

Run it weekly. Adjust the numbers as you learn. The point is to end the “should we write this?” debate and move straight to “how do we fix it well?”
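The rule set above can be sketched as a small check. This is a minimal illustration, not a prescribed formula: the `TopicSignal` fields, the function name, and every threshold value are assumptions chosen for the example, and you would tune the numbers weekly as described.

```python
from dataclasses import dataclass


@dataclass
class TopicSignal:
    topic: str
    ticket_count: int            # tickets sharing the raw tag pattern in the window
    high_effort_count: int       # tickets flagged high-effort or negative-sentiment
    priority_segment_count: int  # tickets from VIP or new-user accounts


# Hypothetical starting thresholds; adjust them as you learn.
MIN_TICKETS = 10           # volume: the "X tickets in Y days" floor
MIN_SEVERITY_RATIO = 0.40  # severity: share of high-effort or negative tickets
MIN_SEGMENT_RATIO = 0.25   # impact: share coming from priority segments


def earns_kb_slot(signal: TopicSignal) -> bool:
    """Return True only when volume, severity, and impact all clear their bars."""
    if signal.ticket_count < MIN_TICKETS:
        return False
    severity_ratio = signal.high_effort_count / signal.ticket_count
    segment_ratio = signal.priority_segment_count / signal.ticket_count
    return (
        severity_ratio >= MIN_SEVERITY_RATIO
        and segment_ratio >= MIN_SEGMENT_RATIO
    )
```

Because the check returns a plain yes or no, the weekly run produces an auditable list of topics that earned a slot and topics that did not, which is the point of making the criteria binary.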

Map Raw AI Tags To Canonical KB Topics

Raw tags are great at surfacing real language and edge cases. Canonical tags make that mess reportable. If you publish from raw tags alone, you’ll create duplicates. If you only use canonical tags, you’ll miss nuance. You need both, mapped thoughtfully.

Work the mapping as a living system. Merge sibling tags, retire unused ones, and align names with how customers search. The payoff shows up in two places. Search results stop returning four similar pages. And your analytics stop lying by scattering the same issue across five labels.
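One way to picture the mapping is a lookup table that rolls raw tags up to canonical topics and flags anything unmapped for review. The tag names and the `rollup` helper below are hypothetical, made up for illustration; a real mapping would come from your own taxonomy.

```python
from collections import Counter

# Hypothetical raw-to-canonical mapping; maintain it as a living table.
RAW_TO_CANONICAL = {
    "password reset email missing": "Account Access: Password Reset",
    "reset link expired": "Account Access: Password Reset",
    "charged twice after upgrade": "Billing: Upgrade Charges",
}


def rollup(raw_tags):
    """Count tickets per canonical topic and collect raw tags with no mapping."""
    counts = Counter()
    unmapped = []
    for tag in raw_tags:
        key = tag.strip().lower()  # normalize so casing and spacing do not split a topic
        canonical = RAW_TO_CANONICAL.get(key)
        if canonical is None:
            unmapped.append(tag)   # keep the nuance: review these instead of dropping them
        else:
            counts[canonical] += 1
    return counts, unmapped
```

Sibling raw tags land on one canonical topic, so they point at one article instead of several, while the unmapped list keeps the edge cases visible for the next mapping pass.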

Generate Drafts That Carry Evidence

Drafts should inherit the proof. Pull representative quotes that show the problem and the exact wording customers use. Pull the steps agents took that actually worked. Pull any screenshots or error strings users cite. Then use a prompt pattern that insists on structure, clarity, and source callouts.

A simple pattern works:

- Problem summary in the customer’s words, with one quote.

- Clear prerequisites and version or plan context.

- Step-by-step resolution, based on successful ticket steps.

- Validation step the user can run to confirm it’s fixed.

- Link to product docs if needed, not as a crutch, as backup.
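The pattern above could be turned into a drafting prompt along these lines. This is a sketch under assumptions: `build_draft_prompt` and its parameters are hypothetical names, the wording of the prompt is illustrative, and the resulting string can be passed to whichever drafting model you use.

```python
def build_draft_prompt(topic, quote, prerequisites, steps, validation, docs_url=None):
    """Assemble a drafting prompt that follows the five-part article pattern."""
    # Refuse to build a prompt that carries no evidence.
    if not quote.strip():
        raise ValueError("a draft needs at least one customer quote as evidence")
    if not steps:
        raise ValueError("a draft needs resolution steps taken from successful tickets")

    numbered_steps = "\n".join(
        f"{i}. {step}" for i, step in enumerate(steps, start=1)
    )
    sections = [
        f"Write a knowledge base article for the topic: {topic}",
        f"Problem summary in the customer's words: '{quote}'",
        f"Prerequisites and version or plan context: {prerequisites}",
        f"Resolution steps (keep this order):\n{numbered_steps}",
        f"Validation step the user can run: {validation}",
    ]
    if docs_url:
        # Product docs are a backup reference, not a substitute for the steps.
        sections.append(f"Backup reference only: {docs_url}")
    sections.append("Cite the quote as evidence and do not add steps that are not listed.")
    return "\n\n".join(sections)
```

Raising an error when the quote or steps are missing keeps evidence-free drafts from entering the review queue in the first place.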

Validate, Roll Out, And Be Ready To Roll Back

Protect quality with a light but firm gate. Give approvers a short checklist. Does it meet the threshold? Are quotes accurate? Are steps correct on a clean test account? Is the topic mapped to one canonical page, not five?

Then ship in stages:

- Publish internally and gather agent feedback for 24 to 48 hours.

- Publish externally to a percentage of users if your stack allows it, or ship broadly with tight monitoring.

- Track repeat-ticket volume for the tagged issue and bounce rate on the page in the first week.

- If the metrics go the wrong way, roll back and fix. No drama. Just rules.
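The rollback rule in the last step can be written down so nobody has to argue about it later. The sketch below assumes two first-week inputs, repeat-ticket counts and page bounce rate; the `ArticleMetrics` type and the bounce-rate ceiling are hypothetical and should be replaced with your own baseline.

```python
from dataclasses import dataclass


@dataclass
class ArticleMetrics:
    repeat_tickets_before: int  # tagged-issue tickets in the week before publish
    repeat_tickets_after: int   # tagged-issue tickets in the first week after publish
    bounce_rate: float          # share of page visits that bounce, from 0.0 to 1.0


# Hypothetical ceiling; set it from the bounce rates of articles you already trust.
MAX_BOUNCE_RATE = 0.70


def should_roll_back(metrics: ArticleMetrics) -> bool:
    """Roll back when repeats rise after publish or the page bounces too often."""
    repeats_rose = metrics.repeat_tickets_after > metrics.repeat_tickets_before
    bounce_too_high = metrics.bounce_rate > MAX_BOUNCE_RATE
    return repeats_rose or bounce_too_high
```

Either signal alone triggers the rollback here. That is a deliberately conservative choice for the example; a team could instead require both, as long as the rule is written down before the article ships.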

Ready to see the whole workflow in action, from signal to published fix in under two days? See how Revelir AI works

How Revelir Helps You Auto-Update Knowledge Base From Support Signals You Trust

Revelir helps you auto-update your knowledge base from conversations by turning every ticket into structured, traceable metrics you can act on. You see drivers, tags, and effort or churn risk across 100 percent of your data, then jump to the exact quotes behind each trend. That’s how you move fast without gambling on quality.

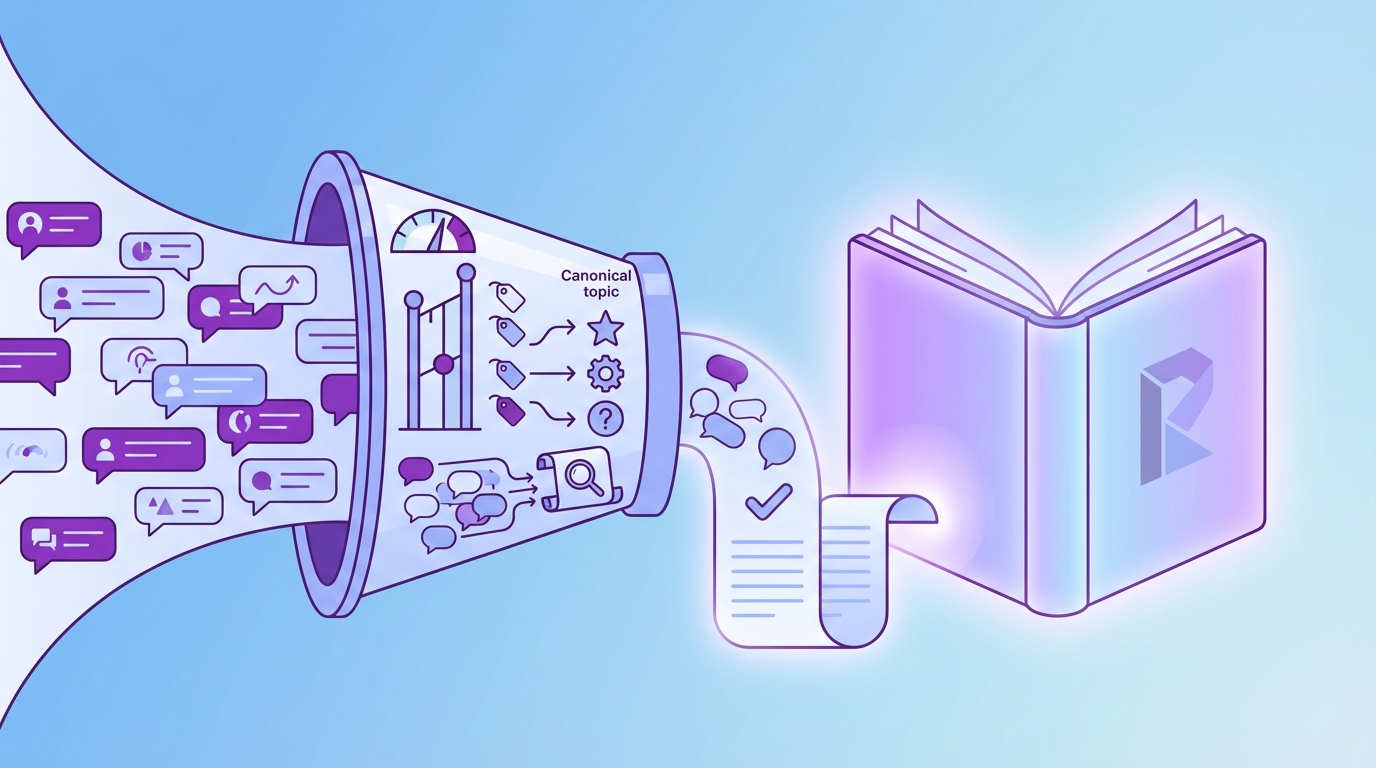

From Signals To Topics With Hybrid Tagging

Revelir’s Hybrid Tagging System pairs AI-generated raw tags with human-aligned canonical tags. Raw tags surface the gritty, real-world phrasing customers use. Canonical tags keep reporting clean and prevent topic sprawl. Map once, and Revelir learns the rollups for future tickets.

Drivers sit on top, so you can answer the only question leadership really cares about, why this issue matters now. With Drivers in place, you can spot that Account Access problems spike for new users this month, then decide whether the fix is product, KB, or both. One click later, you are inside the representative tickets to validate.

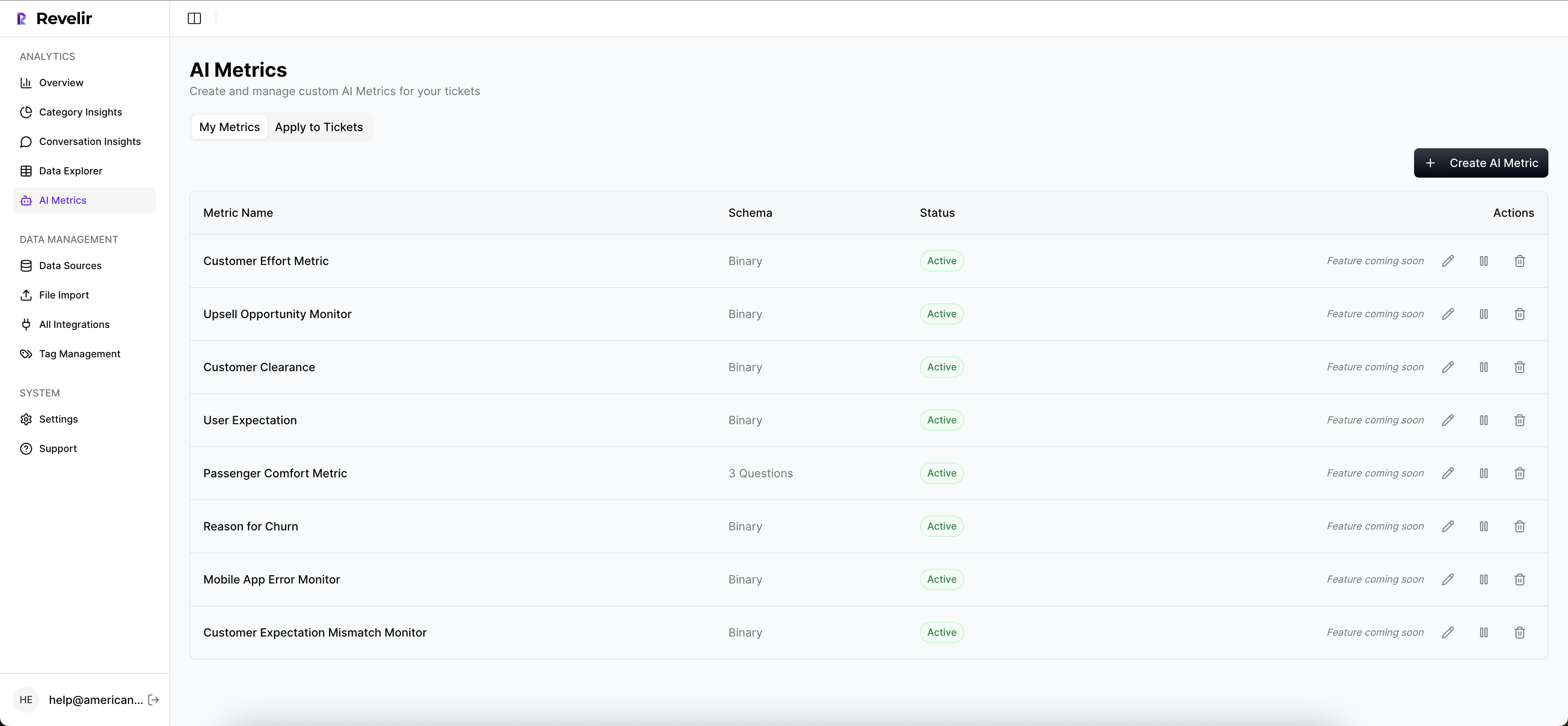

Quantify “Why” With AI And Custom Metrics

Out of the box, the AI Metrics Engine computes sentiment, churn risk, effort, and conversation outcome as structured fields. That means your thresholds can be data-backed from day one. When you need domain nuance, Custom AI Metrics let you classify things like Upsell Opportunity or Reason for Churn in your own language.

It’s the difference between “people are upset” and “negative sentiment with high effort among VIPs doubled this week on billing upgrades.” The first invites debate. The second drives action.

Prove It And Ship Faster With Traceability And Analysis

Evidence-Backed Traceability links every number to the tickets and quotes behind it. Conversation Insights gives you the full thread plus AI-generated summaries for speed. When you need to pivot from a list of candidates to a short, shippable set, Data Explorer and Analyze Data make it feel like a pivot table for support.

You filter, group, sort, and shortlist in minutes, not days. Then you pass a draft seeded with exact quotes and successful steps to a human validator. That is how you compress cycles without cutting corners.

Teams that operationalize this pattern see repeat-ticket volume on fixed issues drop quickly, often 15 to 25 percent within the first two months. Want to replicate that with your data and stack? Learn More

Key capabilities that make the workflow practical:

- Data Explorer for ad-hoc slicing and ticket drill-down, so you can move from pattern to proof on one screen.

- Analyze Data for grouped views by driver, tag, or segment, with links back to the underlying tickets.

- Full-Coverage Processing so you never debate sample bias when setting thresholds or declaring success.

- Zendesk Integration and CSV Ingestion for fast onboarding without a helpdesk migration.

- API Export to bring structured metrics into your BI once the analysis is done in Revelir.

Conclusion

Auto-update your knowledge base from conversations the right way, and you stop arguing anecdotes. You publish the one canonical fix that earns its place. You protect quality with proof. More important, you cut time-to-publish from about 14 days to under 48 hours and reduce repeat volume on fixed issues by 15 to 25 percent in eight weeks.

If you are ready to trade sampling and guesswork for evidence and speed, start with thresholds, mapping, and evidence-backed drafts. Then let a lightweight validation and rollback loop protect the trust you worked hard to build. Ready to make that real with your own tickets and stack? Get started with Revelir AI

Frequently Asked Questions

How do I set signal thresholds for my knowledge base?

To set effective signal thresholds, start by defining clear criteria based on ticket volume, severity, and potential impact on churn. For example, you might decide that a topic earns a slot in the knowledge base if it receives at least 10 tickets in a week and most of them are high-effort or negative in sentiment. Regularly review and adjust these thresholds based on what your team learns and the data trends you observe. Using Revelir's AI Metrics Engine can help you automatically compute these signals, making it easier to establish and refine your thresholds.

What if I notice duplicate articles in my knowledge base?

If you find duplicate articles, it’s crucial to map raw AI tags to canonical topics to prevent confusion. Start by reviewing the existing articles and identifying those that cover similar content. Use Revelir’s Hybrid Tagging System to merge similar raw tags into one canonical tag, ensuring clarity and consistency. This way, you can maintain a clean knowledge base that provides users with a single, authoritative answer to their questions.

Can I use Revelir to analyze ticket sentiment over time?

Yes, you can use Revelir to analyze ticket sentiment over time by leveraging the Data Explorer feature. This tool allows you to filter and group tickets based on sentiment metrics, helping you identify trends and patterns. You can examine how sentiment changes across different time periods or customer segments, which can inform your strategies for improving customer experience and addressing recurring issues.

When should I consider rolling back a published article?

Consider rolling back a published article if you notice an increase in repeat tickets related to that topic or if user feedback indicates confusion. Revelir's Evidence-Backed Traceability feature allows you to track the performance of articles by linking metrics directly to the source conversations. If the metrics indicate that the article is underperforming or causing more issues, you can roll it back and revise it based on the insights gathered.

Why does my knowledge base need to be evidence-backed?

An evidence-backed knowledge base is essential because it ensures that every article is rooted in actual customer interactions and data. This approach helps eliminate guesswork and anecdotal evidence, providing clear patterns and insights that can be trusted. Using Revelir, you can pull quotes and data from support conversations, ensuring that your knowledge base reflects real customer needs and issues, which ultimately enhances trust and usability.